Today we review the Leadtek WinFast PX9800 GTX+ and put it up against the older GeForce 9800 GTX Extreme OC from Foxconn. We also run both cards in SLI and compare their combined performance against the GTX 260. Which card comes out on top? Read our review to find out.

INTRODUCTION

When I reviewed the Foxconn 9800 GTX Extreme OC back in May of 2008, its performance impressed me, but it was hard to recommend at the premium price that manufacturers were charging. In fact, back then I would even have gone as far as to say that the card did not really deserve the GTX label, which is normally reserved for the top-of-the-line, best-performing card. Compared against the old 8800 GTX, the 9800 GTX did not carry that label too well.

Well, three months have passed and the new GTX 200 series has been released to fill the top of the line. As we all know, with any new product release, the price of the old high-end card will certainly drop. In the case of the 9800 GTX, we are not only seeing the card drop to a meager $200, but we are also seeing a refresh built on NVIDIA’s first 55nm manufacturing process, which NVIDIA has given the new 9800 GTX+ label.

The new 55nm fabrication process makes for a cooler running card (not that the GeForce 9800 GTX runs hot), and NVIDIA has also decided to raise the card’s clock speeds. The GeForce 9800 GTX+ is clocked at 740MHz core, 1100MHz memory (2200MHz effective), and 1836MHz shader, as opposed to the 675MHz/1100MHz/1680MHz (core/memory/shader) found on the older GTX cards.

Like the older card, the newer card also supports NVIDIA’s CUDA, which allows the GPU to be used for general-purpose computation. The latest Folding@home (FAH) client has started to take advantage of this, and the preliminary results look promising. Although the newer GTX 200 series cards offer much more CUDA compute power, having CUDA support in the 9800 GTX/GTX+ means the card can put its GPU to work whenever an application is able to use CUDA.

The GeForce 9800 GTX+ also supports NVIDIA’s HybridPower technology. With the right chipset, HybridPower allows the system to completely shut down the discrete GPU and use the onboard mGPU instead, which greatly reduces power consumption. Unfortunately, HybridPower only works with a handful of NVIDIA-based chipsets.

The new GeForce 9800 GTX+ retains all the features and technology of the older card. It has 128 stream processors and supports DirectX 10, Shader Model 4.0, second generation PureVideo, three-way SLI, and 128-bit HDR with FSAA. In fact, you can even pair a GTX+ with an older GTX in SLI without any issue, and we intend to do exactly that and see how the pair performs. Furthermore, it also supports PhysX and is HDCP capable.

We have previously looked at the GeForce 9800 GTX+; today we take a look at the Leadtek 9800 GTX+ and compare it against the Foxconn 9800 GTX Extreme OC to see what the new card gains over the old, highly overclocked card. In addition, we will compare the 9800 GTX in SLI to the GTX 260 to see whether it is better to upgrade to a GTX 260 or to add another 9800 GTX in SLI.

LEADTEK

Founded in 1986, Leadtek does things slightly differently from other manufacturers. The company has invested tremendous resources in research and development. In fact, they state that “Research and development has been the heart and soul of Leadtek corporate policy and vision from the start. Credence to this is born out in the fact that annually 30% of the employees are engaged in, and 5% of the revenue is invested in, R&D.”

Furthermore, the company focuses on their customers and provides high quality products with added value:

“Innovation and Quality ” are all and intrinsic part of our corporate policy. We have never failed to stress the importance of strong R&D capabilities if we are to continue to make high quality products with added value.

By doing so, our products will not only go on winning favorable reviews in the professional media and at exhibitions around the world but the respect and loyalty of the market.

For Leadtek, our customers really do come first and their satisfaction is paramount important to us.

LEADTEK WINFAST PX9800 GTX+

The Leadtek WinFast PX9800 GTX+ comes in a rather small box. The box has a glossy reflective surface, and that is why you see the rainbow stripes across the box. Important information can be found on both the front and back of the box, such as the package contents and connectors.

The card is protected by cutout Styrofoam padding, so it should survive whatever rough treatment it encounters during shipping. As you can see, Leadtek even provides separate compartments for the accessories, so they won’t bump into the card in transit and cause damage.

Over the last few years, we have seen graphics card bundles dwindle, especially with mainstream or budget cards. Luckily this is not the case with this card, which comes with the following accessories:

- Two PCIE power adapters

- One DVI to HDMI adapter

- One DVI to VGA adapter

- One HDTV to composite adapter

- HDMI pass through audio cable

- A driver disk

- A quick installation guide

- A full version of NeverWinter Nights 2

As you can see, not only is this card bundled with all the necessary accessories, it also comes with a full version of NeverWinter Nights 2. Although this game was released back in 2006, it is still a nice game to have, and I didn’t already own it.

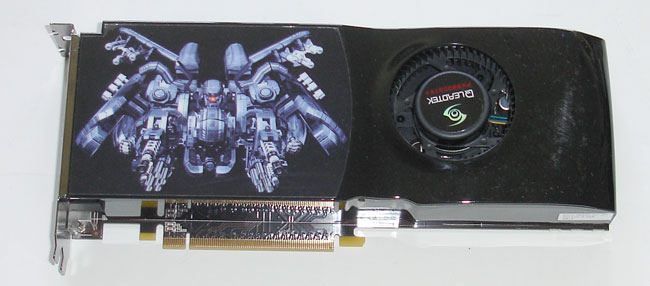

The exterior of Leadtek’s 9800 GTX+ looks exactly like the old 65nm version of the GeForce 9800 GTX. In fact, you cannot really tell the two apart from the outside. The same curved fan shroud used on the older 9800 GTX is still used on the 9800 GTX+. Leadtek puts a cool looking robot image sticker on the front of the fan shroud.

Nothing has changed on the side of the card in comparison to the older 9800 GTX. There are two gold fingers for Tri-SLI and the HDMI audio connector; in addition, there are two six pin PCIE power connectors.

As far as the video connectors go, you still get two DVIs and an HDTV connector. Nothing special to see here.

One thing that I would like to mention is Leadtek’s warranty period. Leadtek warrants their graphics cards for three years from the original date of purchase. Although this is pretty standard in the industry, and other manufacturers such as Asus and Gigabyte also offer such warranty lengths, it is a bit shorter than some of the bigger manufacturers in graphics cards – such as EVGA, BFG or XFX, which warrant their cards for 10 years or an entire lifetime. Three years should definitely be long enough for a graphics card, but it would be nice to see a longer warranty.

FEATURES AND SPECIFICATION

| Leadtek WinFast PX9800 GTX+ Specification | |
| --- | --- |
| Fabrication Process | 55nm (G92) |
| Core Clock (Including dispatch, texture units, and ROP units) | 740 MHz |
| Shader Clock (Processor Cores) | 1836 MHz |
| Processor Cores | 128 |
| Memory Clock / Data Rate | 1100 MHz / 2200 MHz |
| Memory Interface | 256 bit |
| Memory Size | 512 MB |
| ROPs | 16 |
| Texture Filtering Units | 64 |
| HDCP Support | Yes |
| HDMI Support | Yes (Using DVI to HDMI adaptor) |
| Connectors | 2 x Dual-Link DVI-I, 1 x 7-pin HDTV Out |
| RAMDACs | 400 MHz |
| BUS Technology | PCI-Express 2.0 |
| Form Factor | Dual Slot |
| Power Connectors | 2 x 6-pin |
| Dimensions | 270mm x 100mm x 32mm (L x H x D) / 10.5in x 3.93in x 1.26in |

Features

- PCI Express 2.0 GPU Provides Support for Next Generation PC Platforms

- GPU/Memory Clock at 738/2200 MHz!!

- HDCP capable

- 512MB, 256-bit memory interface for smooth, realistic gaming experiences at Ultra-High Resolutions /AA/AF gaming

- Support Dual Dual-Link DVI with awe-inspiring 2560×1600 resolution

- The Ultimate Blu-ray and HD DVD Movie Experience on a Gaming PC

- Smoothly playback H.264, MPEG-2, VC-1 and WMV video—including WMV HD

- Industry leading 3-way NVIDIA SLI technology offers amazing performance

- NVIDIA® unified architecture

- GigaThread™ Technology

- High-Speed GDDR3 Memory on Board

- NVIDIA PhysX™ -Ready

- Dual Dual-Link DVI

- Dual 400MHz RAMDACs

- 3-way NVIDIA SLI technology

- HDCP Capable

- NVIDIA® nView® multi-display technology

- NVIDIA® Lumenex™ Engine

- NVIDIA® Quantum Effects™ Technology

- Microsoft® DirectX® 10 Shader Model 4.0 Support

- Dual-stream Hardware Acceleration

- High dynamic-range (HDR) Rendering Support

- NVIDIA® PureVideo ™ HD technology

- HybridPower Technology support

- Integrated HDTV encoder

- OpenGL® 2.1 Optimizations and Support

- NVIDIA CUDA™ Technology support

TEST SETUP

| Test Platform | |
| --- | --- |
| Processor | Intel Xeon X3320 OC to 2.8GHz |
| Motherboard | EVGA 790i Ultra (BIOS P32) |
| Memory | 2GB of OCZ Gold Edition PC3-10666 (9-9-9-26-1T) |
| Drive(s) | Samsung HD501J (500GB/7200rpm/16MB cache) |
| Graphics | |
| Cooling | Thermalright SI-128 with Scythe S-FLEX SFF21F |
| Power Supply | Enermax Galaxy 850W |
| Display | Gateway FPD2485W |
| Case | None |
| Operating System | Windows Vista Ultimate 64-bit, SP1 |

From the top: EVGA GTX 260 SSC, Leadtek WinFast 9800 GTX+, and Foxconn GeForce 9800 GTX Extreme OC. All three cards use two six pin PCIE power adapters and have the same length and size. Let’s see which one performs best.

Who can tell me which one is GTX and which one is GTX+? (Just so you know, the top one is the GTX+ and the bottom is GTX).

| Testing Cards’ Specification | Leadtek 9800 GTX+ | Foxconn 9800 GTX Extreme OC | EVGA GTX 260 SSC |
| --- | --- | --- | --- |
| Fabrication Process | 55nm | 65nm | 65nm |
| Core Clock Rate | 740 MHz | 675 MHz | 626 MHz |
| SP Clock Rate | 1,836 MHz | 1,675 MHz | 1,350 MHz |
| Streaming Processors | 128 | 128 | 192 |
| Memory Type | GDDR3 | GDDR3 | GDDR3 |
| Memory Clock | 1,100 MHz (2,200 MHz) | 1,100 MHz (2,200 MHz) | 1,053 MHz (2,106 MHz) |
| Memory Interface | 256-bit | 256-bit | 448-bit |
| Memory Bandwidth | 70.4 GB/s | 70.4 GB/s | 117.9 GB/s |
| Memory Size | 512 MB | 512 MB | 896 MB |
| ROPs | 16 | 16 | 28 |
| Texture Filtering Units | 64 | 64 | 64 |
| Texture Filtering Rate | 47.4 GigaTexels/sec | 43.2 GigaTexels/sec | 43.8 GigaTexels/sec |
| RAMDACs | 400 MHz | 400 MHz | 400 MHz |
| Bus Type | PCI-E 2.0 | PCI-E 2.0 | PCI-E 2.0 |
| Power Connectors | 2 x 6-pin | 2 x 6-pin | 2 x 6-pin |
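For readers who want to see where the bandwidth and texture filtering figures in the table come from, here is a minimal Python sketch. It assumes the usual peak-rate formulas: bandwidth is the bus width in bytes times the effective memory data rate, and texture filtering rate is one texel per filtering unit per core clock. The function names are made up for illustration, and the fill-rate formula is only checked here against the two G92-based cards.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_mhz: float) -> float:
    """Peak memory bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = bus_width_bits / 8
    # bytes * MHz gives MB/s; divide by 1000 for GB/s.
    return bytes_per_transfer * data_rate_mhz / 1000


def texture_fill_rate_gtexels(filter_units: int, core_clock_mhz: float) -> float:
    """Peak filtering rate in GigaTexels/sec, assuming one texel per unit per clock."""
    return filter_units * core_clock_mhz / 1000


# (bus width in bits, effective memory data rate in MHz), copied from the table.
memory_specs = {
    "Leadtek 9800 GTX+": (256, 2200),
    "Foxconn 9800 GTX Extreme OC": (256, 2200),
    "EVGA GTX 260 SSC": (448, 2106),
}

# (texture filtering units, core clock in MHz) for the two G92-based cards.
texture_specs = {
    "Leadtek 9800 GTX+": (64, 740),
    "Foxconn 9800 GTX Extreme OC": (64, 675),
}

for name, (bus, rate) in memory_specs.items():
    print(f"{name}: {memory_bandwidth_gbs(bus, rate):.1f} GB/s")

for name, (units, clock) in texture_specs.items():
    print(f"{name}: {texture_fill_rate_gtexels(units, clock):.1f} GigaTexels/sec")
```

Rounded to one decimal place, these reproduce the 70.4 GB/s and 117.9 GB/s bandwidth entries and the 47.4 and 43.2 GigaTexels/sec entries for the two 9800 cards.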

SLI Setup

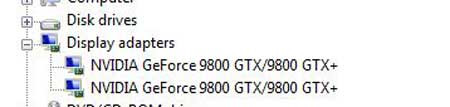

I want to take a moment to talk about the SLI setup of the GeForce 9800 GTX+/GTX. When I originally installed both graphics cards in the computer and connected the bridge to the gold connector that is closer to the back of the card, I was not able to run the cards in SLI. In Windows, one of the two cards was recognized as an 8800 GT. Even when I enabled the SLI setting in the NVIDIA control panel, the system would not recognize the cards correctly.

When I switched the SLI bridge to the connector that is farther from the back of the card, the cards were recognized properly in the Device Manager, and I was able to enable SLI in the NVIDIA control panel. As you can see, the Windows Device Manager detects both cards as a GeForce 9800 GTX/9800 GTX+ combo. It is a bit puzzling to me why it would matter which connector you use, but be warned: if you do decide to pair two cards in SLI, be sure to use the connector that is farther from the back of the card. This raises an interesting question as to whether or not you can run three 9800 GTX/GTX+ cards in tri-SLI. If SLI will not run when the bridge is on one of the two connectors, I am curious whether the cards are even capable of running in a three-card setup.

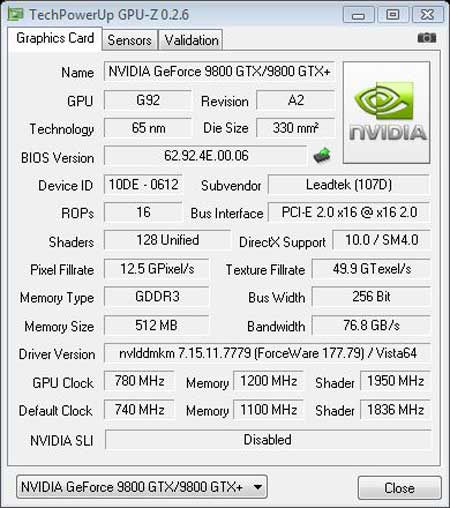

CPU-Z still recognizes the 55 nm version as 65 nm.

RESULTS

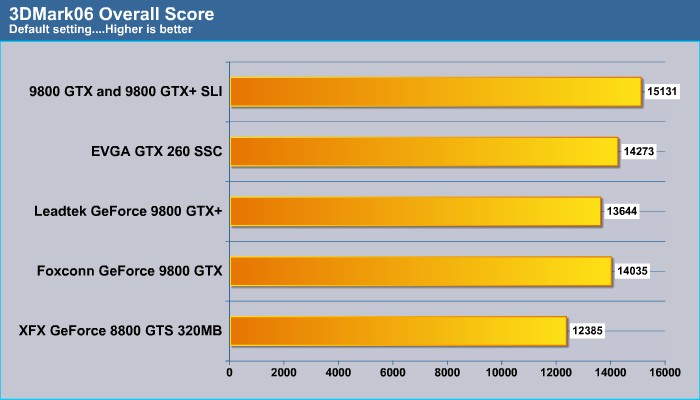

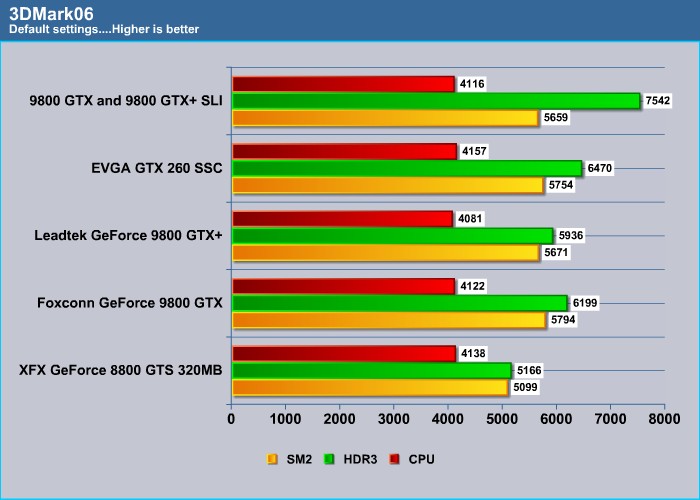

3DMark06

3DMark06 yields a slightly better result for the Foxconn card. This is somewhat expected, as the clock speed on the Foxconn card is higher than on the Leadtek card. Both 9800 GTX/+ cards lag behind the GTX 260. The 8800 GTS 320MB gives a decent result, considering it’s a year and a half old.

3DMark06 is getting a bit old now, as it is barely able to show the performance gain of the SLI cards.

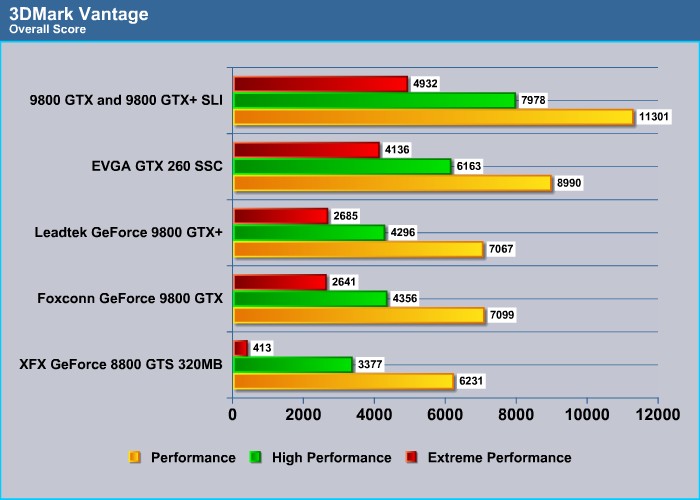

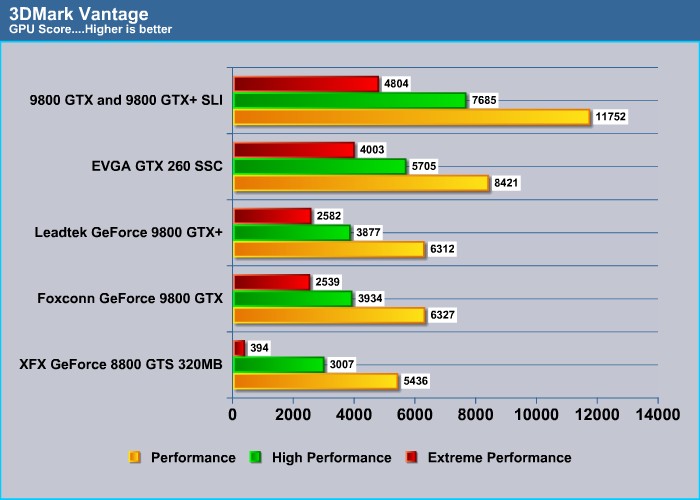

3DMark Vantage

Futuremark’s 3DMark Vantage is a much better gauge of a modern graphics card’s performance. Once again, the highly overclocked Foxconn edges out the stock GeForce 9800 GTX+. It is worth noting, however, that at the Extreme preset the Leadtek GeForce 9800 GTX+ is actually able to edge out the GeForce 9800 GTX. Despite the same architecture and technology, it seems that the new 55 nm card is a bit more efficient with heavier graphical effects than the older 65 nm card.

Vantage shows the performance of the cards in SLI at approximately 60% higher than a single card, and with the GeForce 9800 GTX/+ running in SLI, they are able to perform approximately 30% better than a single GTX 260. However, that lead shrinks to about 20% at the higher resolution and graphical settings.

The old GeForce 8800 GTS is starting to show its age now as we crank up the eye candy. The combination of old technology and a puny 320MB of onboard RAM is hurting its performance at the Extreme setting.

RESULTS

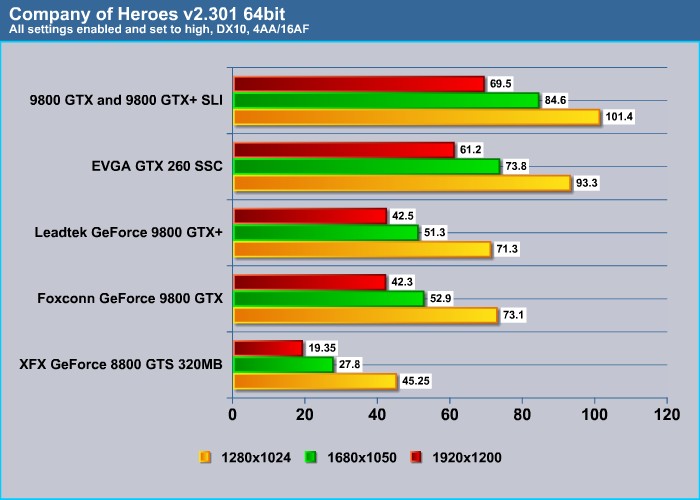

Company of Heroes

No surprise here; this is just what we observed in Vantage. Once again, the Foxconn GeForce 9800 GTX Extreme OC is able to offer a tad better performance at lower resolutions, but at 1920×1200, the Leadtek GeForce 9800 GTX+ has a tiny edge. Comparing the GTX 260 with the 9800 GTX/+ in SLI, we can see that the SLI pair is clearly the winner here. It is able to offer approximately 10% more performance than the GTX 260.

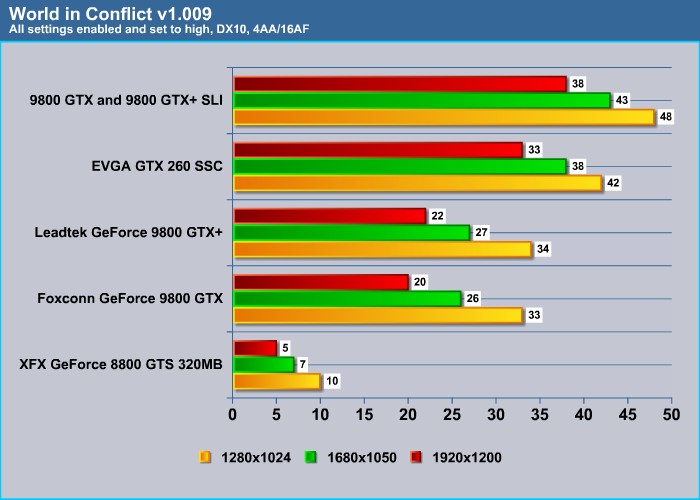

World in Conflict

WiC gives quite an interesting result. Here, the higher-clocked Foxconn card actually performs slightly worse than the Leadtek 9800 GTX+ at every resolution tested. However, neither card is able to offer a good 30 fps at 1920×1200. The GeForce GTX 260, on the other hand, is able to offer an average of over 30 fps even at 1920×1200. The GeForce 8800 GTS is not able to break 10 fps even at the low resolution of 1280×1024. I would say it’s time to retire this card, unless you only play games with the eye candy turned off.

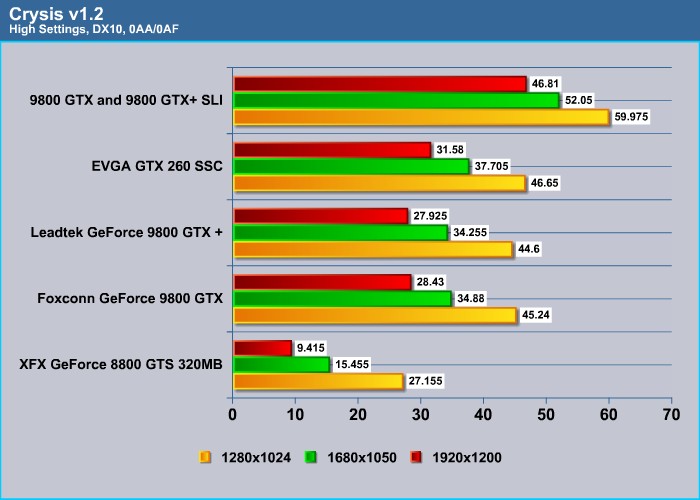

Crysis

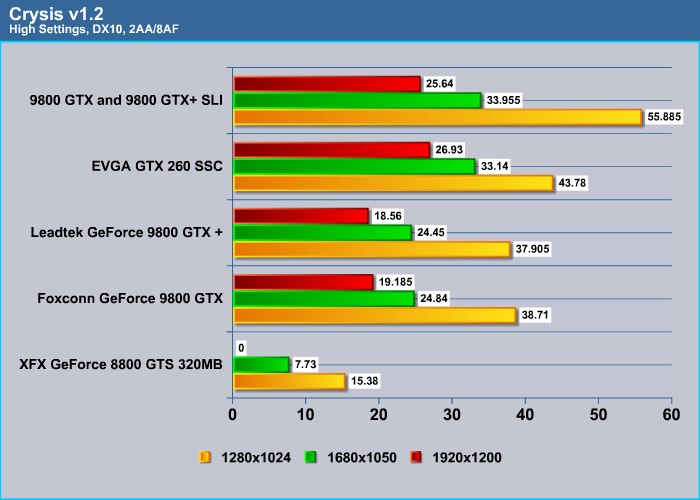

No surprise here. When no AA or AF is enabled, the GeForce 9800 GTX is just a tad shy of the magical 30 average fps at 1920×1200, while the GTX 260 is able to break the 30 fps barrier. Something interesting to see here as well: the new GTX 260’s architecture allows it to offer a better performance gain at higher resolution than at lower resolution. We see only about a 4% gain over the GeForce 9800 GTX/+ at 1280×1024 resolution, but approximately a 10% gain at 1680×1050 and 1920×1200. Thus, if you have a large screen, going for the GTX 260 would definitely be a wise choice.

Crysis is one of the few games that scales quite well with SLI. We see almost an 80% performance gain with two 9800 cards over a single card. The 9800 GTX/+ pair in SLI is also able to offer close to a 50% performance gain over the GTX 260.

The 2xAA/8xAF data is quite interesting. Here we see the GTX 260 is actually able to edge out the two 9800 GTXs in SLI at resolutions higher than 1680×1050. The GTX 260 is definitely a much better card when the graphical demand is high, and would definitely be a wiser investment for future games.

RESULTS

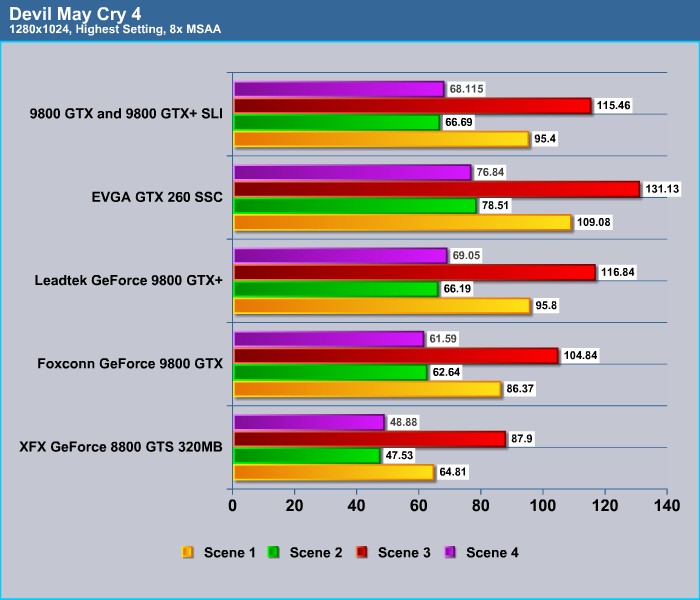

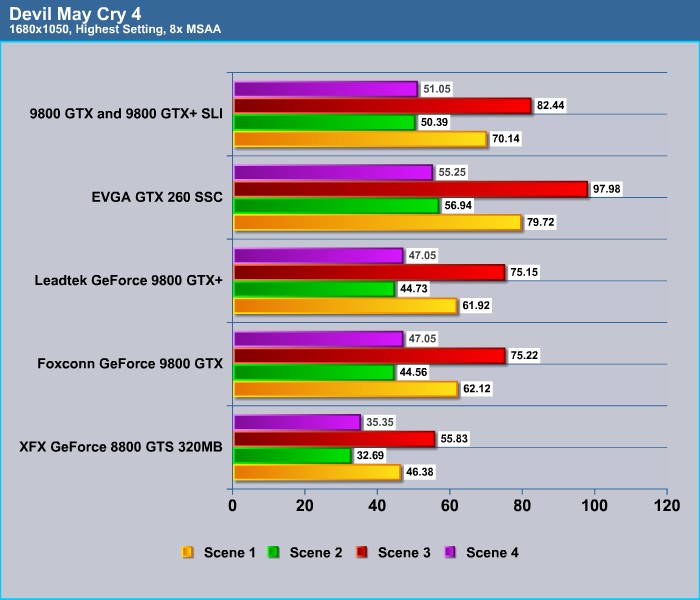

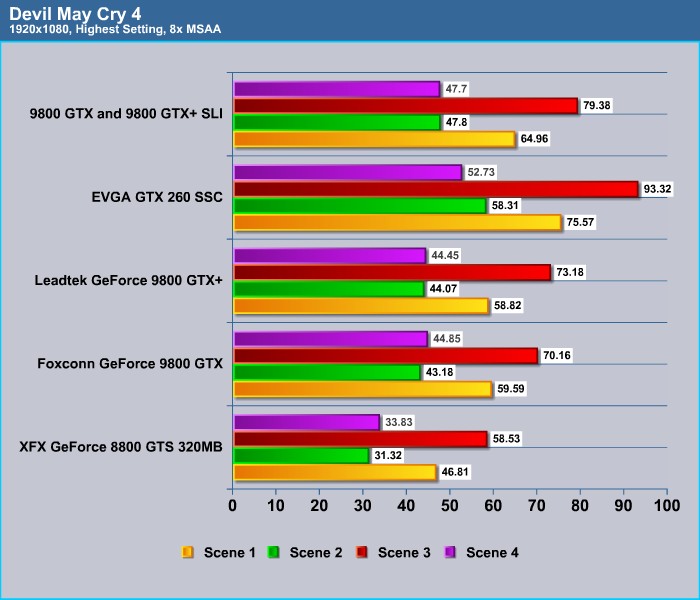

Devil May Cry

Devil May Cry is one of the games that does not really take advantage of SLI. We can see there is virtually no performance gain whatsoever with SLI. The result from this benchmark shows that the Leadtek 9800 GTX+ actually has a more noticeable performance gain over the Foxconn card. All of the cards are able to offer a good playable 30 fps, even at 1920×1200 with 8x MSAA.

OVERCLOCKING

To find the highest stable overclock, I used NVIDIA’s own System Tools software. I tested each setting with ATITool, scanning for artifacts for at least one minute. Afterwards, I performed a quick run of 3DMark06 to be sure that the card was able to run stably at the given setting.

I actually had high hopes for this card, but the result is a bit disappointing. I could only overclock the GPU core by 40 MHz to 780 MHz, the memory to 1200 MHz (100 MHz above the default), and the shader to 1950 MHz (114 MHz above the default). I had originally thought the die shrink and the cooler-running card would provide an excellent overclocking result.

It seems to me that this result is about as much as we are able to milk out of the G92 design, since the 9800 GTX that we have is also clocked at a similar speed, as are most overclocked GTX+ cards.
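To put the overclock in perspective, the headroom can be expressed as a percentage of the stock clocks. This is only a small sketch using the clock speeds quoted above; the `headroom` helper and the dictionary names are illustrative.

```python
from typing import Tuple

# Clocks in MHz, taken from the overclocking results described above.
stock = {"core": 740, "memory": 1100, "shader": 1836}
overclocked = {"core": 780, "memory": 1200, "shader": 1950}


def headroom(stock_mhz: int, oc_mhz: int) -> Tuple[int, float]:
    """Return the absolute gain in MHz and the relative gain in percent."""
    gain = oc_mhz - stock_mhz
    return gain, 100.0 * gain / stock_mhz


for domain in stock:
    gain, percent = headroom(stock[domain], overclocked[domain])
    print(f"{domain}: +{gain} MHz ({percent:.1f}%)")
```

That works out to roughly 5% on the core, 9% on the memory, and 6% on the shader.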

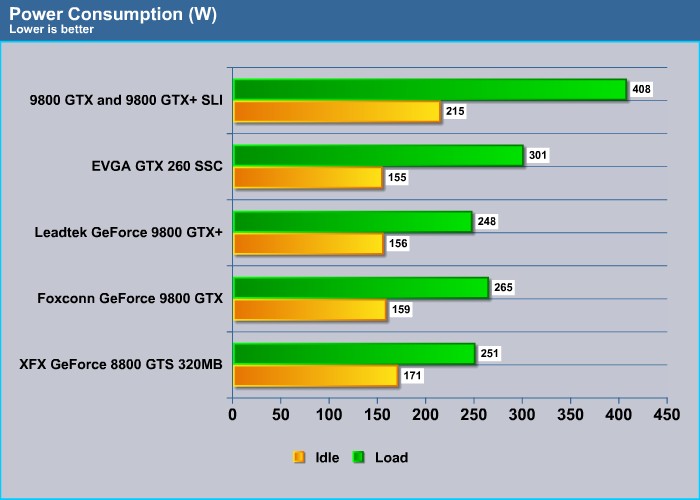

POWER CONSUMPTION

At idle, the Leadtek 9800 GTX+ consumes 156W of power, and at load it consumes 248W. Although this is lower than the Foxconn card, which uses the 65nm fabrication process, we have to keep in mind that the Foxconn card is clocked higher than the reference card and will therefore consume more power. However, with both 9800 GTX cards clocked at similar speeds, we can use the numbers to infer the power saved by the 55 nm fabrication process. The new 9800 GTX+ saves approximately 17W over the 9800 GTX if both cards are clocked at the same level.

With two 9800 GTXs in SLI, the overall system power consumption rises to 215W at idle and a whopping 408W at load. Despite drawing less power at idle, the GeForce GTX 260 consumes 301W under load. This is 50W more than the 9800 GTX. Looking at both power consumption and performance, the GTX 260 may be a better choice than a pair of cards in SLI.
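The load figures quoted above can be compared directly with a few lines of Python. The sketch assumes that all of the numbers are whole-system readings taken on the same test bench (the text only says so explicitly for the SLI setup); the dictionary keys and the `load_delta` helper are illustrative names.

```python
# Power readings in watts as (idle, load), as quoted in the text.
# The GTX 260 idle figure is not stated, so it is left as None.
readings = {
    "Leadtek 9800 GTX+": (156, 248),
    "9800 GTX/GTX+ SLI": (215, 408),
    "GTX 260": (None, 301),
}


def load_delta(config_a: str, config_b: str) -> int:
    """Extra watts drawn at load by config_a compared with config_b."""
    return readings[config_a][1] - readings[config_b][1]


print("SLI vs GTX 260:", load_delta("9800 GTX/GTX+ SLI", "GTX 260"), "W")
print("GTX 260 vs Leadtek 9800 GTX+:", load_delta("GTX 260", "Leadtek 9800 GTX+"), "W")
print("SLI vs single Leadtek 9800 GTX+:", load_delta("9800 GTX/GTX+ SLI", "Leadtek 9800 GTX+"), "W")
```

On these figures the SLI pair draws 107W more than the GTX 260 at load, while adding the second card costs 160W over the single Leadtek card.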

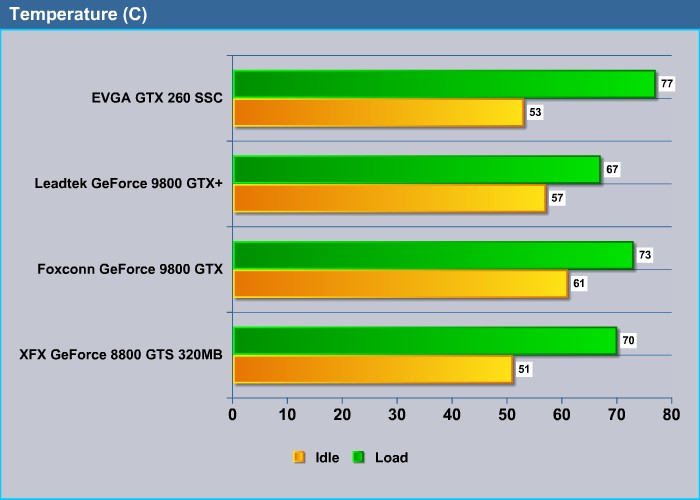

TEMPERATURE

Clearly, the advantage of going to the 55 nm fabrication process can be seen with the temperature of the cards. Here we see that Leadtek’s card is able to run at an idle temperature of 57°C compared to the 61°C of the 9800 GTX. The card is also running at a cool 67°C under load compared to the 73°C for the 9800 GTX. The GTX 260 shows a good temperature result idling at 53°C, but under load it reaches 77°C.

As for the noise level, all the cards we have tested today run fairly quietly. The GeForce 9800 GTX+ does not run any louder or quieter than the old GeForce 9800 GTX, as the fan profile seems to be the same. However, because it runs cooler than the 9800 GTX, its fan may not need to ramp up as much as the older card’s.

CONCLUSION

Based on our benchmarks, for most games at resolutions of 1680×1050 or lower, adding a second card for SLI seems to be a good idea if you already own a 9800 GTX. However, at resolutions of 1920×1200 and beyond, with all the graphical effects turned up to the “High” level, the GTX 260 is actually able to offer a better result than two 9800 GTXs in SLI, as the Crysis benchmark demonstrates.

One of the nice things about the GeForce 9800 GTX+ is that it is capable of running in SLI with the older 9800 GTX, and as our benchmarks have shown, there is no performance penalty at all for pairing up the cards. In fact, our benchmarks have also shown that the SLI performance gain of the 9800 GTX is actually quite large if the game is able to take advantage of it. On the other hand, the biggest disadvantage of going SLI is the power consumption. As our tests show, two 9800 GTX cards in SLI consume about 100W more than a single GTX 260. This alone can deter users from choosing 9800 GTX SLI over the GTX 260.

Our Leadtek WinFast PX9800 GTX+ test sample has shown that the die shrink of the GeForce 9800 GTX is something to be pleased about. It reduces the power consumption and lowers the temperature. What is even more surprising is that the GeForce 9800 GTX+ is actually able to offer a tad better result at high graphical settings than the higher-clocked Foxconn GeForce 9800 GTX. Combining this with a good bundle of cables and the NeverWinter Nights 2 game makes this card a good choice for those who are looking to buy a GeForce 9800 GTX+.

Currently, the GeForce 9800 GTX+ can be purchased for around $200, while the GeForce GTX 260 can be purchased for $50 more. The purchasing decision is a bit difficult given the performance difference between the two cards. I would say that if you still have an old G80 card, it would be nice to upgrade to the GTX 260 if your wallet allows. If not, go for the GeForce 9800 GTX+, as it’s not a bad choice, and the $50 saved can be put into other components. However, if you have recently purchased a 9800 GTX, it may be worth adding a GeForce 9800 GTX+ for SLI if the system supports it.

Leadtek WinFast PX9800 GTX+ will receive a score of 8.5 (very good) out of 10.

Pros:

+ Runs cool

+ Able to run SLI with GeForce 9800 GTX

+ Out-performs GeForce 9800 GTX, especially at high graphical settings

+ Good bundle

+ Decent overclocking result

+ Three year warranty

Cons:

– May be too large for some cases/systems

– Availability

– GTX 260 has a slightly better performance, especially at higher graphical settings (if you do not mind paying $50 extra)

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
