While I can easily say both the X1600 XT and X1300 from PowerColor are good products, I’m left wanting a bit more. The X1600 XT’s performance could be better, though I understand this might come in time with new drivers. The X1600 and the X1300 have it all: Avivo/HDTV along with H.264 decoding and transcoding, the new Ultra-Threaded Shader Engine, a Ring Bus memory controller (X1600 XT only) and, most importantly, Shader Model 3.0 support. The RADEON X1600 doesn’t fall far behind the pack. As far as I’m concerned it performs properly for $200, but it lacks a true 256-bit bus. The next gem, the PowerColor X1300, is aimed at consumers with less money on hand or simply those who need advanced DirectX 9 support at a reasonable price / performance ratio.

Introduction

*Important update*

It has come to my attention that Tul does not carry a regular X1300 PRO. Instead it has its Bravo model with a custom cooling device. The PRO model with the golden heatsink you see on their product page does NOT exist.

What Tul does instead is provide customers with an OC BIOS. When you flash your regular PowerColor X1300 (450 MHz / 250 MHz) it will be clocked to PRO speeds (600 MHz / 400 MHz). This is exactly the card I received: a non-PRO flashed with PRO clock rates. What does that mean? Both PRO and non-PRO models are equipped with the same type of memory, capable of 800 MHz DDR speeds. Since I wasn’t aware of this fact, I assumed it was a PRO model based on the specifications on their website. The card I received is indeed a retail product.

PowerColor X1300 to X1300 PRO BIOS mod (rar, 120KB)

X1300’s (R51B-ND3) default clocks are 450 MHz / 250 MHz. After the flash they will match X1300 PRO speeds: 600 MHz / 400 MHz!

Instructions (use the included ATIFLASH utility):

Back up your BIOS: atiflash -s 0 <BIOS name>.sb -f (e.g. backup.sb)

Reflash BIOS: atiflash -p 0 x1300oc.sb -f (x1300oc.sb is the new BIOS)

Reboot and enjoy your brand new PowerColor X1300 PRO 🙂
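The two steps above are easy to run in the wrong order, so here is a minimal Python sketch that only builds the two command lines and refuses to produce the flash command until a backup file exists. The function names and the `backup.sb` default are my own illustration. Only the `-s`, `-p` and `-f` switches and the adapter index 0 come from the instructions above, and nothing here has been checked against ATIFLASH's own documentation.

```python
from pathlib import Path

ADAPTER = "0"  # adapter index used in the instructions above


def backup_command(backup_file="backup.sb"):
    """Command line that saves the current BIOS (the backup step)."""
    return ["atiflash", "-s", ADAPTER, backup_file, "-f"]


def flash_command(new_bios="x1300oc.sb", backup_file="backup.sb"):
    """Command line that writes the new BIOS (the reflash step).

    Raises if the backup is missing or empty, so the flash command
    cannot be prepared before the backup step has produced a file.
    """
    backup = Path(backup_file)
    if not backup.is_file() or backup.stat().st_size == 0:
        raise FileNotFoundError(f"no usable BIOS backup at {backup_file}")
    return ["atiflash", "-p", ADAPTER, new_bios, "-f"]


if __name__ == "__main__":
    # Only prints the backup command; nothing is executed.
    print(" ".join(backup_command()))
```

The sketch never runs ATIFLASH itself. It just makes the "backup first" rule explicit before you type the commands by hand.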

About a month ago I reviewed PowerColor’s flagship card, the X1800 XT, with all the bells and whistles: Avivo, a new memory bus and SM 3.0 support. With the new R520 core, ATI tried to climb up and reach G70 performance. In some cases it succeeded, but whether you like it or not, it’s losing the battle in a lot of scenarios. Compared to last year’s X800 generation, however, it does sport a significant performance boost over the old high-end card.

Today I have less expensive parts to test for both the middle and low-end sectors of the market: the PowerColor X1600 XT and X1300. The first card is based on the RV530 core. There should be a lot of different SKUs, mainly because ATI allows for a handful of configurations, i.e. memory size and type. As far as the memory bus is concerned, it features two 128-bit rings, which is essentially a 128-bit architecture (ATI calls it 256, but that’s obviously wrong). It comes with 4 pixel pipelines, 12 pixel shader units and 5 vertex pipelines, which is not a standard 1:1 configuration. The X1300 sample is equipped with 4 pixel pipelines, 4 pixel shader units and 2 vertex pipelines (a 1:1 ratio of pixel pipelines to pixel shader units, just like R520). As with the X1600 XT, it’s also a 128-bit offering and should be available in many different configurations.

Should you require more detailed information on X1K please revisit our article: The X1000 series – ATI goes SM3.0

PowerColor is a consumer brand focused on providing cutting-edge graphics card products to retail customers. Our goal for the Tul brand is to be the industry’s number one provider of technology product solutions. Our goal for the PowerColor brand is to be the world’s number one brand of graphics cards. PowerColor is in effect owned by Tul Corporation, however the brands are operated independently of each other.

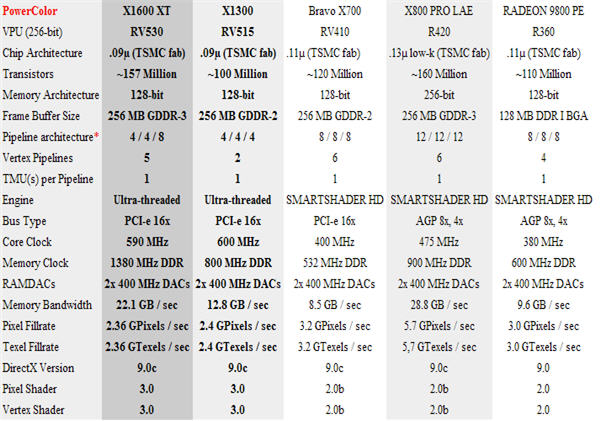

VPU Specifications

As with every new generation of GPUs, the feature set becomes larger and more complex, and the newly introduced X1K family is no exception. Most importantly, the X1000 line now sports Shader Model 3.0, which has been available from NVIDIA for over a year now. The X1600 GPU is designed similarly to the X1800, but with a few important differences. It uses a 128-bit memory bus and features a different pipeline architecture. The card boasts 4 pipelines (known as ROPs, Render Output Pipelines). Do not get confused (as I did), I’ll explain.

ROPs are the actual pixel pipelines. Shader pipelines, or pixel shaders, consist of ROPs and fragment pipelines. With the X1600, each ROP is paired with 3 pixel shaders (giving us 12 PS units), and the chip handles 8 Z-samples per clock. In other words, the X1600’s pipeline configuration is as follows:

- 12 PS units

- 4 ROPs

- 4 TMUs (1 TMU per pipeline)

- 8 Z-samples per clock
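To put the configuration above into numbers, here is a rough back-of-the-envelope sketch of the theoretical peak fillrates. It assumes the simplest possible model (one pixel per ROP per clock and one texel per TMU per clock) and uses the 590 MHz core clock quoted for the X1600 XT in the overclocking section. The function name is my own.

```python
def peak_fillrate_mps(units, core_mhz):
    """Theoretical peak rate in millions per second.

    Assumes every unit produces exactly one result per clock,
    which real workloads rarely sustain.
    """
    return units * core_mhz


# Unit counts from the list above, core clock from the overclocking section.
X1600_XT = {"rops": 4, "tmus": 4, "core_mhz": 590}

pixel = peak_fillrate_mps(X1600_XT["rops"], X1600_XT["core_mhz"])
texel = peak_fillrate_mps(X1600_XT["tmus"], X1600_XT["core_mhz"])
print(f"pixel: {pixel} MPixels/s, texel: {texel} MTexels/s")
```

Both ceilings come out at 2360 million per second. The 3DMark05 texel fillrate measured later in this review (roughly 2352 MTexels/sec) lands just under that figure, which suggests the simple model is not far off for texturing.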

The biggest of all changes is the introduction of what ATi calls the Ultra-Threading Dispatch Processor and the Ring Bus (old SMARTSHADER). Without going into much detail here, the dispatch processor (as the name implies) routes or delegates the workload to four pixel shader cores (also known as Quads). Each quad consists of four mini pixel processors. The process between them is called threading and means:

- Large Scale Multi-threading

- Hundreds of simultaneous threads across multiple cores

- Each thread can perform up to 6 different shader instructions on 4 pixels per clock cycle

- Small Thread sizes

- 16 pixels per thread in Radeon X1800

- Fine-grain parallelism

- Fast Branch Execution

- Dedicated units handle flow control with no ALU overhead

- Large Multi-Ported Register arrays

- Enables fast thread switching

Now the Ring Bus technology. This isn’t a merry-go-round or anything like that (well, sort of). The bus itself consists of two 128-bit rings which run in opposite directions. This way routing latencies are reduced to a bare minimum and clock rate scaling is far more efficient. Among other things we have:

- New cache design – fully associative for more optimal performance

- Improved Hyper-Z – better compression and hidden surface removal

- Programmable arbitration logic – maximizes memory efficiency and can be upgraded via software

Important note: the X1300 GPU does not carry a Ring Bus! It uses a different technique to improve memory allocation.
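Ring Bus or not, the external memory interface on both cards is 128 bits wide, so peak memory bandwidth follows directly from the memory clock. The sketch below is my own arithmetic, not an ATI figure. It assumes DDR-style memory (two transfers per clock), a full 128-bit configuration on both cards, and the memory clocks quoted elsewhere in this review (700 MHz for the X1600 XT, 400 MHz for the X1300 at PRO speeds, 250 MHz for a stock X1300).

```python
def peak_bandwidth_gbs(bus_bits, mem_clock_mhz, transfers_per_clock=2):
    """Peak memory bandwidth in GB/s (1 GB = 10**9 bytes).

    transfers_per_clock=2 models DDR-style memory, which moves
    data on both edges of the clock.
    """
    bytes_per_transfer = bus_bits / 8
    transfers_per_second = mem_clock_mhz * 1e6 * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


CARDS = [
    ("X1600 XT", 700),
    ("X1300 at PRO speeds", 400),
    ("X1300 stock", 250),
]

for name, clock_mhz in CARDS:
    print(f"{name}: {peak_bandwidth_gbs(128, clock_mhz):.1f} GB/s")
```

Under these assumptions the X1600 XT tops out at 22.4 GB/s, while the X1300 moves from 8.0 GB/s at stock clocks to 12.8 GB/s at PRO speeds.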

* Pipeline architecture: (Textures / Pixels / Z-Samples per clock)

X1600 Specifications

- Features

- 157 million transistors on 90nm fabrication process

- Dual-link DVI

- Twelve pixel shader processors

- Five vertex shader processors

- 128-bit 4-channel DDR/DDR2/GDDR3 memory interface

- Native PCI Express x16 bus interface

- AGP 8x configurations also supported with AGP-PCI-E external bridge chip

- Dynamic Voltage Control

- Ring Bus Memory Controller

- 256-bit internal ring bus for memory reads

- Programmable intelligent arbitration logic

- Fully associative texture, color, and Z/stencil cache designs

- Hierarchical Z-buffer with Early Z test

- Lossless Z Compression (up to 48:1)

- Fast Z-Buffer Clear

- Z/stencil cache optimized for real-time shadow rendering

- Ultra-Threaded Shader Engine

- Support for Microsoft® DirectX® 9.0 Shader Model 3.0 programmable vertex and pixel shaders in hardware

- Full speed 128-bit floating point processing for all shader operations

- Up to 128 simultaneous pixel threads

- Dedicated branch execution units for high performance dynamic branching and flow control

- Dedicated texture address units for improved efficiency

- 3Dc+ texture compression

- High quality 4:1 compression for normal maps and two-channel data formats

- High quality 2:1 compression for luminance maps and single-channel data formats

- Multiple Render Target (MRT) support

- Render to vertex buffer support

- Complete feature set also supported in OpenGL® 2.0

- Advanced Image Quality Features

- 64-bit floating point HDR rendering supported throughout the pipeline

- Includes support for blending and multi-sample anti-aliasing

- 32-bit integer HDR (10:10:10:2) format supported throughout the pipeline

- Includes support for blending and multi-sample anti-aliasing

- 2x/4x/6x Anti-Aliasing modes

- Multi-sample algorithm with gamma correction, programmable sparse sample patterns, and centroid sampling

- New Adaptive Anti-Aliasing feature with Performance and Quality modes

- Temporal Anti-Aliasing mode

- Lossless Color Compression (up to 6:1) at all resolutions, including widescreen HDTV resolutions

- 2x/4x/8x/16x Anisotropic Filtering modes

- Up to 128-tap texture filtering

- Adaptive algorithm with Performance and Quality options

- High resolution texture support (up to 4k x 4k)

- Avivo™ Video and Display Platform

- High performance programmable video processor

- Accelerated MPEG-2, MPEG-4, DivX, WMV9, VC-1, and H.264 decoding and transcoding

- DXVA support

- De-blocking and noise reduction filtering

- Motion compensation, IDCT, DCT and color space conversion

- Vector adaptive per-pixel de-interlacing

- 3:2 pulldown (frame rate conversion)

- Seamless integration of pixel shaders with video in real time

- HDR tone mapping acceleration

- Maps any input format to 10 bit per channel output

- Flexible display support

- DVI 1.0 compliant / HDMI interoperable and HDCP ready

- Dual integrated 10 bit per channel 400 MHz DACs

- 16 bit per channel floating point HDR and 10 bit per channel DVI output

- Programmable piecewise linear gamma correction, color correction, and color space conversion (10 bits per color)

- Complete, independent color controls and video overlays for each display

- High quality pre- and post-scaling engines, with underscan support for all outputs

- Content-adaptive de-flicker filtering for interlaced displays

- Xilleon™ TV encoder for high quality analog output

- YPrPb component output for direct drive of HDTV displays*

- Spatial/temporal dithering enables 10-bit color quality on 8-bit and 6-bit displays

- Fast, glitch-free mode switching

- VGA mode support on all outputs

- Drive two displays simultaneously with independent resolutions and refresh rates

- Compatible with ATI TV/Video encoder products, including Theater 550

- CrossFire™

- Multi-GPU technology

- Four modes of operation:

- Alternate Frame Rendering (maximum performance)

- Supertiling (optimal load-balancing)

- Scissor (compatibility)

- Super AA 8x/10x/12x/14x (maximum image quality)

X1300 Specifications

- Features

- 105 million transistors on 90nm fabrication process

- Four pixel shader processors

- Two vertex shader processors

- 128-bit 4-channel DDR/DDR2/GDDR3 memory interface

- 32-bit/1-channel, 64-bit/2-channel, and 128-bit/4-channel configurations

- Native PCI Express x16 bus interface

- AGP 8x configurations also supported with external bridge chip

- Dynamic Voltage Control

- High Performance Memory Controller

- Fully associative texture, color, and Z/stencil cache designs

- Hierarchical Z-buffer with Early Z test

- Lossless Z Compression (up to 48:1)

- Fast Z-Buffer Clear

- Z/stencil cache optimized for real-time shadow rendering

- Ultra-Threaded Shader Engine

- Support for Microsoft® DirectX® 9.0 Shader Model 3.0 programmable vertex and pixel shaders in hardware

- Full speed 128-bit floating point processing for all shader operations

- Up to 128 simultaneous pixel threads

- Dedicated branch execution units for high performance dynamic branching and flow control

- Dedicated texture address units for improved efficiency

- 3Dc+ texture compression

- High quality 4:1 compression for normal maps and two-channel data formats

- High quality 2:1 compression for luminance maps and single-channel data formats

- Multiple Render Target (MRT) support

- Render to vertex buffer support

- Complete feature set also supported in OpenGL® 2.0

- Advanced Image Quality Features

- 64-bit floating point HDR rendering supported throughout the pipeline

- Includes support for blending and multi-sample anti-aliasing

- 32-bit integer HDR (10:10:10:2) format supported throughout the pipeline

- Includes support for blending and multi-sample anti-aliasing

- 2x/4x/6x Anti-Aliasing modes

- Multi-sample algorithm with gamma correction, programmable sparse sample patterns, and centroid sampling

- New Adaptive Anti-Aliasing feature with Performance and Quality modes

- Temporal Anti-Aliasing mode

- Lossless Color Compression (up to 6:1) at all resolutions, including widescreen HDTV resolutions

- 2x/4x/8x/16x Anisotropic Filtering modes

- Up to 128-tap texture filtering

- Adaptive algorithm with Performance and Quality options

- High resolution texture support (up to 4k x 4k)

- Avivo™ Video and Display Platform

- High performance programmable video processor

- Accelerated MPEG-2, MPEG-4, DivX, WMV9, VC-1, and H.264 decoding and transcoding

- DXVA support

- De-blocking and noise reduction filtering

- Motion compensation, IDCT, DCT and color space conversion

- Vector adaptive per-pixel de-interlacing

- 3:2 pulldown (frame rate conversion)

- Seamless integration of pixel shaders with video in real time

- HDR tone mapping acceleration

- Maps any input format to 10 bit per channel output

- Flexible display support

- Dual integrated DVI transmitters (one dual-link + one single-link)

- DVI 1.0 compliant / HDMI interoperable and HDCP ready

- Dual integrated 10 bit per channel 400 MHz DACs

- 16 bit per channel floating point HDR and 10 bit per channel DVI output

- Programmable piecewise linear gamma correction, color correction, and color space conversion (10 bits per color)

- Complete, independent color controls and video overlays for each display

- High quality pre- and post-scaling engines, with underscan support for all outputs

- Content-adaptive de-flicker filtering for interlaced displays

- Xilleon™ TV encoder for high quality analog output

- YPrPb component output for direct drive of HDTV displays*

- Spatial/temporal dithering enables 10-bit color quality on 8-bit and 6-bit displays

- Fast, glitch-free mode switching

- VGA mode support on all outputs

- Drive two displays simultaneously with independent resolutions and refresh rates

- Compatible with ATI TV/Video encoder products, including Theater 550

- CrossFire™

- Multi-GPU technology

- Inter-GPU communication over PCI Express (no interlink hardware required)

- Four modes of operation:

- Alternate Frame Rendering (maximum performance)

- Supertiling (optimal load-balancing)

- Scissor (compatibility)

- Super AA 8x/10x/12x/14x (maximum image quality)

- HyperMemory™ 2

- 2nd generation virtual memory management technology

- Improved PCI Express transfer efficiency

- Supports rendering to system memory as well as local graphics memory

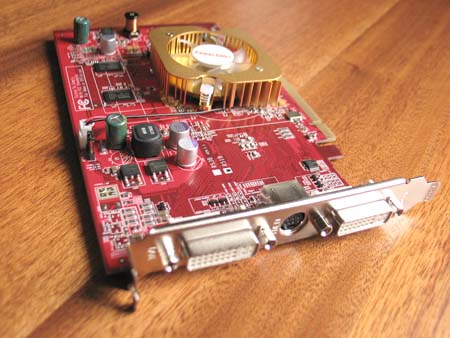

The Card: PowerColor X1600 XT

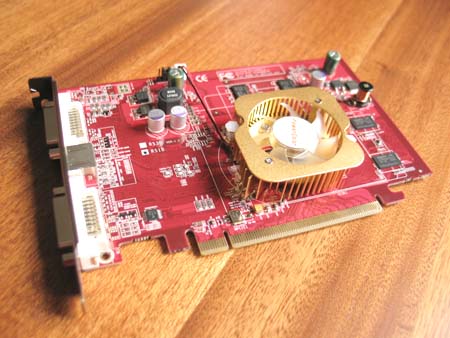

With high-end cards getting ridiculously big, users will quite often go with a less powerful graphics chip and get a smaller board instead. Middle and low-end cards are usually single-slot designs with rather standard cooling. That is the case with the PowerColor X1600 XT and X1300.

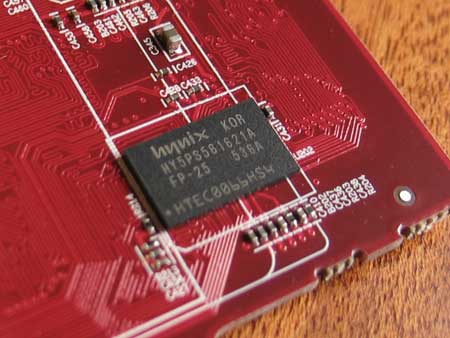

As for memory, well, it’s not SAMSUNG, but these are still good GDDR3 chips: Infineon HYB18H512321AF-14 (TFBGA-136, rated at 700 MHz). They overclock past that speed. More on that later.

The Card: PowerColor X1300

The low-end segment is probably the most popular one. OEMs tend to put rather slow cards in their systems to cut the cost of the whole package. We at Bjorn3D don’t review many of those cards, but the opportunity came along and I couldn’t resist.

Bundle: PowerColor X1600 XT

The scheme used on this product’s box is similar to that of PowerColor X1800 XT.

- Driver CD

- CyberLink DVD Solutions

- PowerDVD 5

- PowerProducer 2 Gold DVD

- PowerDirector 3

- Power2Go 3

- Medi@show 2

- Pacific Fighters full game

- Pacific Fighters Multi-Language Hot-Key Reference

- Quick installation guide

Accessories

- S-Video Cable

- Composite Cable

- 2 DVI-I connectors

- S-Video to AV connector

Bundle: PowerColor X1300 PRO

Let’s see how PowerColor packaged their X1300 card. If you’re looking for any games, you’ll be disappointed — you won’t find any at all.

Accessories

- Composite Cable

- DVI-I connectors

- Quick start manual

Software

- ATI drivers

Setup and Installation

All of our benchmarks were run on an Athlon64 3000+ clocked at 2.5GHz. I will stack the PowerColor X1600 XT and X1300 against each other. Additionally, I will throw in 3DMark05 scores from an X700. The overclocking section will consist of 3DMark05 scores from different cards. The table below shows the test system configuration as well as the benchmarks used throughout this comparison.

| Category | Details |
| --- | --- |
| Components | DFI NF4 Ultra-D; Athlon64 3000+ Venice; 2x256MB Corsair PC3200LLP (Dual Channel); Thermaltake 520 Watt PSU; PowerColor X1600 XT; PowerColor X1300 |
| Software | Windows XP SP2; DirectX 9.0c; nForce4 6.53 drivers; CATALYST 5.13 |
| Synthetic Benchmarks | 3DMark 2005 v1.2.0; D3D Right Mark 1.0.5.0 beta 4 |
| Gaming Benchmarks | F.E.A.R / ingame benchmark + Fraps; Half-Life 2 / custom d13c17 timedemo + Fraps; Doom 3 / default timedemo + Fraps; NFS: Most Wanted / Fraps |
| Notes | CPU clocked at 2.5GHz |

Important CATALYST notes:

For X1600 XT

CATALYST A.I: standard

Mipmap detail level: quality

For X1300 PRO

CATALYST A.I: advanced

Mipmap detail level: quality

X1600 XT and X1300 together

Synthetic Benchmarks

3DMark05

I’ve used Futuremark’s 3DMark 2005 to measure the actual throughput of both the PowerColor X1600 XT and the X1300, and compared them against two other candidates: the X700 and X800 XT. Below are the results.

| Video card | PowerColor X1600 XT | PowerColor X1300 | Bravo X700 | ATi X800 XT |
| --- | --- | --- | --- | --- |
| Pixel Fillrate | 2055.2 MPixels / sec | 1017.5 MPixels / sec | 957.1 MPixels / sec | 3284 MPixels / sec |
| Texel Fillrate | 2351.6 MTexels / sec | 1689.3 MTexels / sec | 3184 MTexels / sec | 7943 MTexels / sec |
| Geometry Rate | 590 MTriangles | 600 MTriangles | 400 MTriangles | 500 MTriangles |
| Vertex Shader – Simple | 73.2 MVertices / sec | 45.2 MVertices / sec | 32.5 MVertices / sec | 58 MVertices / sec |
| Vertex Shader – Complex | 47.1 MVertices / sec | 15.7 MVertices / sec | 34.8 MVertices / sec | 46 MVertices / sec |
| Pixel Shader (2.0b) | 113.4 FPS | 33.5 FPS | 53 FPS | 140 FPS |

With only 4 ROPs, the X1600 XT cannot keep up with the X800 XT and its 16 Render Output Pipelines, and it does not consistently beat the X700, which features 8. The obvious and staggering difference is texel fillrate, which is even lower than the X700’s. Although RV530 has only 4 ROPs, it features 12 pixel shader pipelines, which help in PS-intensive applications. The X1300 also has 4 ROPs, but only 4 pixel shader units.
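To make the gaps in the table above easier to read, here is a small sketch that rescales three of the 3DMark05 rows so that one card counts as 1.0. The numbers are copied from the table (decimal commas written as points). The dictionary layout and the function name are just my own illustration.

```python
# Three rows of the 3DMark05 table above, keyed by test and card.
RESULTS = {
    "Pixel Fillrate (MPixels/s)": {
        "X1600 XT": 2055.2, "X1300": 1017.5, "X700": 957.1, "X800 XT": 3284.0,
    },
    "Texel Fillrate (MTexels/s)": {
        "X1600 XT": 2351.6, "X1300": 1689.3, "X700": 3184.0, "X800 XT": 7943.0,
    },
    "Pixel Shader 2.0b (FPS)": {
        "X1600 XT": 113.4, "X1300": 33.5, "X700": 53.0, "X800 XT": 140.0,
    },
}


def relative_to(results, baseline):
    """Scale every score in each test so the baseline card equals 1.0."""
    return {
        test: {card: score / scores[baseline] for card, score in scores.items()}
        for test, scores in results.items()
    }


for test, scores in relative_to(RESULTS, "X1300").items():
    line = ", ".join(f"{card} {ratio:.2f}x" for card, ratio in scores.items())
    print(f"{test}: {line}")
```

With the X1300 as the baseline, the X1600 XT comes out roughly 3.4 times faster in the pixel shader test, which is in the same ballpark as its 12 versus 4 pixel shader units.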

RightMark

D3D RightMark is a very useful tool for measuring different theoretical throughputs of a graphics chip. I ran a couple of synthetic tests on the X1600 XT and X1300. The main focus of these tests is to stress Geometry Processing (Vertex Shading) as well as the Pixel Shader units.

With D3D RightMark you will be able to get the following information about your video card:

- Features supported by your video card

- Pixel Fillrate and Texel Fillrate

- Pixel shader processing speed (all shader models)

- Vertex shader (geometry) processing speed (all shader models)

- Point sprites drawing speed

- HSR efficiency

The difference between the two tested cards is pretty substantial. RADEON X1600 is aimed at the middle-end market while the X1300 is pushed through the low-end channel. PowerColor X1600 XT performs better by around 35-41%. Let’s see how those cards deal with pixel shading tests.

F.E.A.R

On August 5th this year, VUGames released the F.E.A.R demo to the public. The game is being made over at Monolith Productions and should be out later this year. Since almost everyone has been waiting for this demo, I’ve decided to give it a shot and bench it with the X1600 XT and X1300 from PowerColor.

Texture caching in retail F.E.A.R has been improved a little. You won’t see a lot of chugging when abruptly turning around. Our benchmarking method was simple: I used the default F.E.A.R benchmarking utility, which nicely shows all the effects and technology used throughout the game.

Game Overview

An unidentified paramilitary force infiltrates a multi-billion dollar aerospace compound, taking hostages but issuing no demands. The government responds by sending in special forces, but loses contact as an eerie signal interrupts radio communications. When the interference subsides moments later, the team has been obliterated. As part of a classified strike team created to deal with threats no one else can handle, your mission is simple: Eliminate the intruders at any cost. Determine the origin of the signal. And contain the crisis before it spirals out of control.

As you probably know, F.E.A.R uses a very sophisticated game engine (FEAR).

- Rendering

- FEAR is powered by a new flexible, extensible, and data driven DirectX 9 renderer that uses materials for rendering all visual objects. Each material associates an HLSL shader with artist-editable parameters used for rendering, including texture maps (normal, specular, emissive, etc.), colors, and numeric constants.

- Lighting Model

- FEAR features a unified Blinn-Phong per-pixel lighting model, allowing each light to generate both diffuse and specular lighting consistently across all solid objects in the environment. The lighting pipeline uses the following passes:

- Emissive: The emissive pass allows objects to display a glow effect and establishes the depth buffer to improve performance.

- Lighting: The lighting pass renders each light, first by generating shadows and then by applying the lighting onto any pixels that are visible and not shadowed.

- Translucency: The translucent pass blends all translucent objects into the scene using back to front sorting.

- Visual Effects

- FEAR features a new optimized, data driven effects system that allows for the creation of key-framed effects that can be comprised of dynamic lights, particle systems, models, and sounds. Examples of the effects that can be created using this system include weapon muzzle flashes, explosions, footsteps, fire, snow, steam, smoke, dust, and debris.

- Sample Lights

- FEAR’s lighting model is very flexible and allows developers to easily add new lights. Existing lights include:

- Point Light: The point light is a single point that emits light equally in all directions.

- Spotlight: Similar to a flashlight, the spotlight projects light within a specified field of view. The spotlight can also use a texture to tint the color of the lighting on a per pixel basis.

- Cube Projector: Similar to the point light, the cube projector uses a cubic texture to tint each lit pixel.

- Directional Light: This lighting is emitted from a rectangular plane and is used to simulate directional lights like sunlight.

- Point Fill: Although similar to the point light, the point fill is an efficient option because it does not utilize specular lighting or cast shadows.

A more detailed overview of other F.E.A.R technologies can be found over at Touchdown Entertainment. These include: Havok Physics Engine and Modeling / Animations System.
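For readers who have never seen the Blinn-Phong model mentioned in the feature list above, here is a minimal textbook sketch of what "diffuse and specular lighting per pixel" boils down to for a single light. This is not Monolith's HLSL shader. The function names and the shininess value of 32 are my own assumptions, and real engines add light colors, attenuation and shadowing on top.

```python
import math


def normalize(v):
    """Return v scaled to unit length (a zero vector stays zero)."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def blinn_phong(normal, to_light, to_viewer, shininess=32.0):
    """Return (diffuse, specular) intensities for one light at one pixel."""
    n = normalize(normal)
    l = normalize(to_light)
    v = normalize(to_viewer)
    diffuse = max(dot(n, l), 0.0)
    if diffuse == 0.0:
        return 0.0, 0.0  # surface faces away from the light
    # Half-vector between the light and viewer directions.
    h = normalize(tuple(a + b for a, b in zip(l, v)))
    specular = max(dot(n, h), 0.0) ** shininess
    return diffuse, specular


# Light and viewer both straight above a surface that faces up.
print(blinn_phong((0, 0, 1), (0, 0, 1), (0, 0, 1)))
```

The half-vector is what separates Blinn-Phong from classic Phong shading: the highlight is computed from the angle between the surface normal and the direction halfway between light and viewer.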

Concentrating on scores, the X1600 XT does 66 FPS at 800×600 (the X1300 gets 47 FPS). I haven’t included scores at 1600×1200 for the X1300 because they were, well, miserable. After all, it’s a low-end card, so you shouldn’t expect flawless performance, especially with F.E.A.R.

Half-Life 2

We all love Half-Life 2 and we all want the best performance out of our hardware. This has to be one of the most graphically demanding games currently on the market. Half-Life 2 is built around the Source engine, which utilizes a very wide range of DirectX 8 / 9 special effects. Those include:

- Diffuse / specular bump mapping

- Dynamic soft shadows

- Localized / global volumetric fog

- Dynamic refraction

- High Level-of-Detail (LOD)

Note that users with DirectX 7 and older hardware (NVIDIA MX series for example) will not be able to enjoy the above effects. Let’s see how middle-end / low-end X1K graphic cards perform.

Doom 3

Now that we are past Doom 3’s release, some gamers have been left a bit disappointed. The main reason is Half-Life 2 and its Source engine, which really showed a vast amount of potential and scalability.

Although this game needs no introduction, I will go over some of the game features and technology behind Doom 3. It took the guys at id Software over four years to complete this project. Lead programmer John Carmack spent an awful lot of time designing the game engine, and his hard work paid off, at least to some extent, since this is the first title built on the Doom 3 engine.

Let’s look at some of the engine tech features which are present in Doom 3:

- Unified lighting and shadowing engine

- Dynamic per-pixel lighting

- Stencil shadowing

- Specular lighting

- Realistic bumpmapping

- Dynamic and ambient six-channel audio

However you look at it, Carmack’s lighting engine is the essence of Doom 3. With OpenGL being the primary API, shaders have been put to heavy use in order to create a realistic-looking environment. Instead of using lightmaps, the game engine now processes all shadows in real time. This technique is called stencil shadowing, and it can accurately cast shadows onto other objects in the scene. There are disadvantages to this method, however:

- Requires a lot of fillrate

- Fast CPU is needed for shadow calculations

- Inability to render soft shadows

Talking about playable framerates, both GPUs deliver fruitful results, with the RADEON X1600 XT performing better than the X1300 by as much as 39%. The last resolution was overkill for the poor low-ender.

Need For Speed: Most Wanted

We have quite a few sequels in this review and this is another one, this time from Electronic Arts. If you’ve played NFS Hot Pursuit you know what I’m talking about. A lot of ideas have been taken from the older NFS titles. The main difference between Most Wanted and Underground (in terms of graphics) is the addition of HDR-type effects. It’s pseudo-HDR (more like bloom), but looks lovely nonetheless. Additionally, the game sports flashy new reflections, better object geometry, an improved lighting system and, finally, a physics engine.

Here we have a fresh new title with bells and whistles waiting to get benched.

Overclocking and Conclusions

Our comparison is coming to an end. The last thing we need to do is check how both cards overclock. As I mentioned on the previous pages, both boards run their memory at the maximum supported frequency: 700 MHz for the X1600 XT and 400 MHz for the X1300. As for the core, the X1600 XT runs at 590 MHz, while the little X1300 has been given a 600 MHz core at factory settings.

X1600 XT default: 590 MHz core / 700 MHz memory

X1600 XT OC: 640 MHz core / 770 MHz memory

X1300 PRO (modded) default: 600 MHz core / 400 MHz memory

X1300 PRO (modded) OC: 680 MHz core / 475 MHz memory
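For a quick feel of how much headroom that is, the sketch below turns the four clock pairs above into percentage gains. The clocks are the ones listed above. The function and variable names are my own.

```python
CLOCKS = {
    # card: ((default core, default memory), (OC core, OC memory)) in MHz
    "X1600 XT": ((590, 700), (640, 770)),
    "X1300 PRO (modded)": ((600, 400), (680, 475)),
}


def gain_percent(default, overclocked):
    """Percentage increase of the overclocked value over the default."""
    return (overclocked - default) / default * 100


for card, ((core, mem), (oc_core, oc_mem)) in CLOCKS.items():
    print(
        f"{card}: core +{gain_percent(core, oc_core):.1f}%, "
        f"memory +{gain_percent(mem, oc_mem):.1f}%"
    )
```

That works out to roughly 8.5% on the core and 10% on the memory for the X1600 XT, against about 13.3% and 18.75% for the modded X1300.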

To give you an idea of how those results stack up I’ve prepared a little list of cards with default and OC values for your viewing pleasure. Best overclock so far is the X1800 XT.

Santa dropped us two cards under the Christmas tree

Pros:

+ X1600 XT: Solid performance

+ X1600 XT: Good memory overclock

+ X1600 XT: Avivo support

+ X1300: On par performance in its class

+ X1300: Can be modded to match PRO speeds

+ X1300: Avivo support

+ X1300: Dual DVI

+ X1300: Good core overclock

+ X1300: Cheap

Cons:

– X1600 XT: Mediocre core overclock

– X1600 XT: Poor game bundle

– X1600 XT: A bit on the loud side

– X1300: Poor bundle

– X1600 XT & X1300: Late into the market

For solid performance, PowerColor X1600 XT and PowerColor X1300 get the rating of 8.5 (Very Good) out of 10 and Bjorn3D.com Seal of Approval Award.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
