
INTRODUCTION

As a general rule, most computer product review sites aim to publish their reviews of a new product on or even before the manufacturer’s intended release date. We at Bjorn3D agree that in most cases that is the best strategy, both from a business standpoint and, more importantly, for our readers’ enlightenment. We’ve been reviewing computer-related products at Bjorn3D for well over a decade now, and in that time we’ve learned some valuable lessons. One of those lessons is that there are times when it is better to let the dust settle a little with a new product before presenting our take on it. This approach gives us time to thoroughly investigate the issues that are certain to surface with any new product and to present a much more comprehensive review to our readers.

Now the question for all of you computer enthusiasts is: Can You Say 9? We’ve heard for a while that the new 9000 series of NVIDIA® graphics solutions was just around the corner, and now that corner has been turned. With the 9000 series, NVIDIA® has chosen a much different marketing approach than it did with the 8000 series. Instead of releasing its high-end components first, as was the case with the 8800GTX, and then filling in the blanks beneath them over time, NVIDIA® has opted to release the 9600GT first. We can only speculate that the 9600GT is the 9000 series equivalent of the 8600GT, although its clock speeds are closer to those of the NVIDIA® reference 8800GT. Where this card fits in the 9000 series product chain is also a bit of a mystery. Ah, so many unanswered questions!

Today we have the distinct pleasure of introducing XFX’s rendition of NVIDIA’s 9600GT 512MB graphics solution. The model we received for testing from XFX, the PV-T94P-YDF4, has the same specifications as NVIDIA’s reference card. XFX has assured us that two other factory-overclocked models will be available in the very near future. In our experience, a base model card such as this one can often achieve an excellent overclock, in many cases even better than the factory-overclocked models. We’ll soon see!

XFX: The Company

XFX is the graphics card brand of PINE Technologies, which has been around since 1989, and it has made a name for itself with its Double-Lifetime enthusiast-grade warranty on its NVIDIA graphics adapters, matched by excellent end-user support.

XFX dares to go where the competition would like to, but can’t. That’s because, at XFX, we don’t just create great digital video components: we build all-out, mind-blowing, performance-crushing, competition-obliterating video cards and motherboards. Oh, and not only are they amazing, you don’t have to live on dry noodles and peanut butter to afford them. XFX is a division of PINE Technologies, a leading manufacturer of state-of-the-art processing components.

FEATURES & SPECIFICATIONS

XFX™ 9600GT Graphics Card Series Comparative Specifications

| Specification | XFX 9600GT* | NVIDIA 9600GT Reference | XFX 9600GT XT | XFX 9600GT XXX** |
|---|---|---|---|---|
| RAMDACs | Dual 400 MHz | Dual 400 MHz | Dual 400 MHz | Dual 400 MHz |
| Memory Bus | 256-bit | 256-bit | 256-bit | 256-bit |
| Memory | 512 MB | 512 MB | 512 MB | 512 MB |
| Memory Type | DDR3 | DDR3 | DDR3 | DDR3 |
| Memory Clock | 900 MHz (1800 MHz effective) | 900 MHz (1800 MHz effective) | 950 MHz (1900 MHz effective) | 1000 MHz (2000 MHz effective) |
| Stream Processors | 64 | 64 | 64 | 64 |
| Shader Clock | 1625 MHz | 1625 MHz | 1700 MHz | 1750 MHz |
| Clock Rate | 650 MHz | 650 MHz | 680 MHz | 700 MHz |
| Chipset | 9600 GT (G94) | 9600 GT (G94) | 9600 GT (G94) | 9600 GT (G94) |
| Bus Type | PCI-E 2.0 | PCI-E 2.0 | PCI-E 2.0 | PCI-E 2.0 |
| Fabrication Process | 65nm | 65nm | 65nm | 65nm |
| Highlighted Features | HDCP ready, Dual DVI out, RoHS, HDTV ready, SLI ready, TV out | HDCP ready, Dual DVI out, RoHS, HDTV ready, SLI ready, TV out | HDCP ready, Dual DVI out, RoHS, HDTV ready, SLI ready, TV out | HDCP ready, Dual DVI out, RoHS, HDTV ready, SLI ready, TV out |
| Output | HDMI capable with added accessories | N/A | HDMI capable with added accessories | HDMI, HDMI upgrade kit included |
| MSRP | $179.99 | N/A | $199.99 | $214.99 |

\* Today’s review product
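The memory rows in the table above can be turned into a theoretical peak bandwidth figure, which is one way to see what the XT and XXX memory overclocks buy. The sketch below assumes the usual calculation for double-data-rate memory: bytes per transfer (bus width divided by 8) multiplied by the effective clock. It gives a theoretical ceiling, not a measured result, and the function name is our own.

```python
# Theoretical peak memory bandwidth from the specification table.
# Assumption: peak bandwidth = (bus width in bits / 8) bytes per transfer
#             x effective memory clock (millions of transfers per second).

def peak_bandwidth_gbs(bus_width_bits: int, effective_clock_mhz: float) -> float:
    """Return theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = effective_clock_mhz * 1e6
    return bytes_per_transfer * transfers_per_second / 1e9


# Effective memory clocks from the table; every model uses a 256-bit bus.
models = {
    "XFX 9600GT / NVIDIA reference": 1800,
    "XFX 9600GT XT": 1900,
    "XFX 9600GT XXX": 2000,
}

for name, effective_mhz in models.items():
    print(f"{name}: {peak_bandwidth_gbs(256, effective_mhz):.1f} GB/s")
```

With a 256-bit bus this works out to 57.6 GB/s for the stock card, 60.8 GB/s for the XT, and 64.0 GB/s for the XXX.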

Features

- Unified Architecture

- Lumenex Engine

- 128-bit FP HDR (High Dynamic Range)

- GIGA Thread: Batch processing / Load Balancing

- Quantum Engine: Embedded Physics features

- DirectX 10 support

- SLI Support

- HDCP Capable

- Dual-Link DVI

- HDMI Capable with the use of HDMI Certified components

- HDMI Certified (XXX Edition)

- Double Lifetime Warranty

PACKAGING

XFX has settled on an excellent packaging system, which it has used for the last several graphics cards we have received from the company. The box is large enough to hold all of the valuable contents with some room to spare. Many graphics card distributors still use packaging that is far too large and, in our judgment, simply wastes material that helps overcrowd our landfills.


The interior package offers excellent protection for the graphics card it contains. The card is surrounded by approximately 2 inches of cellular foam on three sides and by 0.25 inches of the same foam on top. The foam is cut to the exact dimensions of the card and holds it firmly in place during transit.


CONTENTS

So many graphics card manufacturers have decreased the bundled accessories they offer with their graphics solutions. Back in the day it was not unusual to get everything supplied today plus as many as two full-version games. We fully understand that manufacturing costs have risen, and we realize that this is the base model card, but we feel at least one game could have been included. It is our understanding that Company of Heroes is included with the XXX version of this card.

- 1 – XFX Model PV-T94P-YDF4 9600GT

- 1 – DVI-VGA Connector

- 1 – S-Video Adapter Cable

- 1 – HDTV Dongle

- 1 – Molex to PCI-e power adapter

- 1 – Quick Start Manual

- 1 – Driver CD

Bundled Accessories

THE CARD & IMPRESSIONS

After removing the XFX 9600GT from its protective packaging and taking a few minutes to review its aesthetics, my first impression was that it is identical in virtually all respects to the 8800GT. The only subtle difference we noticed between the two was the logo on top of the cooler: the 9600GT uses what we’ll call, for lack of a better term, the “9” logo, whereas the 8800GT uses XFX’s now-famous “Alpha Dog” logo. Both even use the same green PCB.


As we’ve learned after many trials with graphics cards, it’s not what’s on the outside that counts. We probably dwell less on the aesthetics of the products we review than any other computer product review site on the Web, because we strongly feel that you’re not paying for looks; you’re paying for performance.


At this juncture in most of our reviews we try to expound on the technical workings of the graphics card under review. To put it plainly, the release of the 9600GT (G94) has been one of the least ostentatious we can remember, and the technical documentation is sparse at best. There are no diagrams of the 9600GT GPU or other technical data readily available to the press. For that reason, the specifications and features published earlier in this review are literally all we have to comment on.

TESTING REGIME

We will run the benchmarks listed below with each graphics card set at its default speed. All of our synthetic and gaming tests will be run at resolutions of 1280 x 1024, 1600 x 1200, and 1920 x 1200, some without anti-aliasing and anisotropic filtering and others with various levels of this eye candy. For games that offer both DX9 and DX10 rendering, we’ll test both. Each test will be run individually three times in succession, and the average of the three results will be calculated and reported. F.E.A.R. benchmarks were also run with soft shadows disabled.
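The three-run averaging described above is simple to reproduce when tabulating your own results. The sketch below is only an illustration, not our actual tooling: the `raw_results` values are placeholders, and the setting labels are hypothetical.

```python
from statistics import mean

# Placeholder numbers for illustration only; these are NOT Bjorn3D results.
# Key: (benchmark, resolution, quality settings) -> FPS from each of three runs.
raw_results = {
    ("F.E.A.R.", "1280x1024", "No AA/AF"): [80.0, 82.0, 81.0],
    ("F.E.A.R.", "1920x1200", "4xAA/16xAF"): [40.0, 41.0, 42.0],
}


def averaged(results, runs_expected=3):
    """Average the repeated runs for each test, rejecting incomplete sets."""
    summary = {}
    for key, runs in results.items():
        if len(runs) != runs_expected:
            raise ValueError(f"{key}: expected {runs_expected} runs, got {len(runs)}")
        summary[key] = round(mean(runs), 1)
    return summary


for (test, resolution, settings), fps in averaged(raw_results).items():
    print(f"{test} @ {resolution}, {settings}: {fps} fps")
```

Rejecting a test with fewer than three runs keeps a partial set from quietly skewing a reported average.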

As the chart below shows, the XFX 9600GT’s stock specifications don’t quite match up to those of the other four cards; nonetheless, the comparison should give us a good barometer of the card’s performance.

Comparative Specifications

| Specification | XFX 9600GT | GIGABYTE 8800GT TurboForce | ASUS EN8800GTS TOP | XFX 8800GT XXX | XFX 8800GTX XXX |
|---|---|---|---|---|---|
| Memory | 512 MB | 512 MB | 512 MB | 512 MB | 768 MB |
| Memory Clock | 1.80 GHz | 1.84 GHz | 2.07 GHz | 1.95 GHz | 2.00 GHz |
| Stream Processors | 64 | 112 | 128 | 112 | 128 |
| Shader Clock | 1625 MHz | 1700 MHz | 1800 MHz | 1700 MHz | 1500 MHz |
| Clock Rate | 650 MHz | 700 MHz | 740 MHz | 670 MHz | 630 MHz |

| Test Platform | |
|---|---|
| Processor | Intel Q6600 Core 2 Quad @ 2.4GHz |
| Motherboard | ASUS Maximus Formula (Non-SE) X38, BIOS 0907 |
| Memory | 4GB of Mushkin XP-2 6400 DDR-2 4-3-3-10 |
| Drive(s) | 2 – Seagate 1TB Barracuda ES SATA Drives |
| Graphics | Video Card #1: XFX® GeForce® 9600GT running ForceWare 174.12 64-bit |
| | Video Card #2: GIGABYTE™ GeForce® 8800GT TurboForce running ForceWare 169.25 64-bit WHQL |
| | Video Card #3: ASUS® GeForce® EN8800GTS TOP running ForceWare 169.25 64-bit WHQL |
| | Video Card #4: XFX GeForce® 8800 GT XXX running ForceWare 169.25 64-bit WHQL |
| | Video Card #5: XFX GeForce® 8800 GTX XXX running ForceWare 169.25 64-bit WHQL |
| Cooling | Enzotech Ultra w/ 120mm Delta Fan |
| Power Supply | Antec 650 Watt Neo Power & Antec 550 Watt Neo Power |
| Display | Dell 2407 FPW |
| Case | Antec P190 |
| Operating System | Windows Vista Ultimate 64-bit SP1 RC1 |

| Synthetic Benchmarks & Games |
|---|
| 3DMark06 v. 1.10 |
| Company of Heroes v. 1.71 DX 9 & 10 |
| Crysis v. 1.1 DX 9 & 10 |
| World in Conflict Demo DX 9 & 10 |
| F.E.A.R. v 1.08 |
| Serious Sam 2 v. 2.068 |

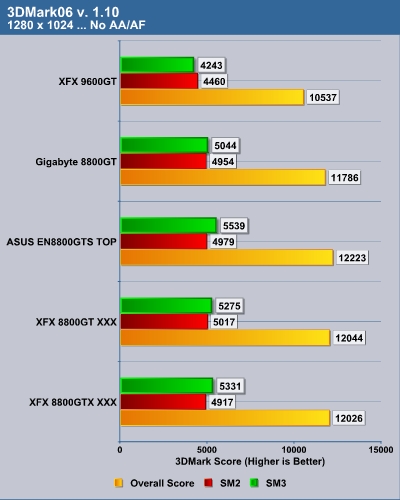

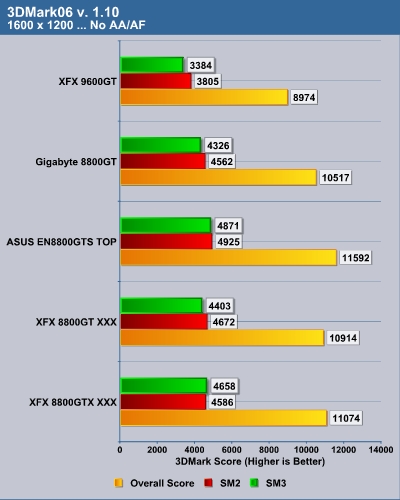

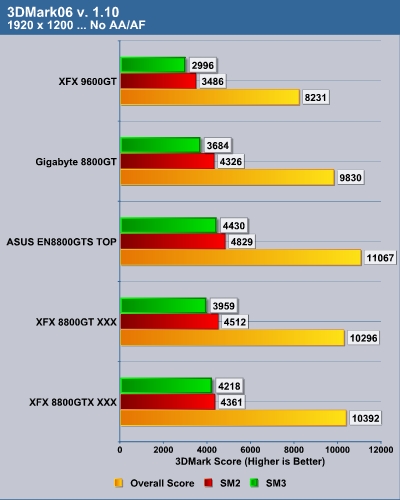

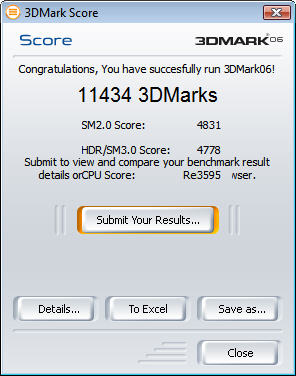

3DMARK06 v. 1.1.0

3DMark06, developed by Futuremark, is a synthetic benchmark used for universal testing of all graphics solutions. 3DMark06 features HDR rendering, complex HDR post-processing, dynamic soft shadows for all objects, a water shader with HDR refraction, HDR reflection, depth fog and Gerstner wave functions, a realistic sky model with cloud blending, and approximately 5.4 million triangles and 8.8 million vertices, to name just a few. The “3DMark” score is intended to provide a normalized means of comparing different GPUs/VPUs. It has been accepted as both a standard and a mandatory benchmark throughout the gaming world for measuring performance.

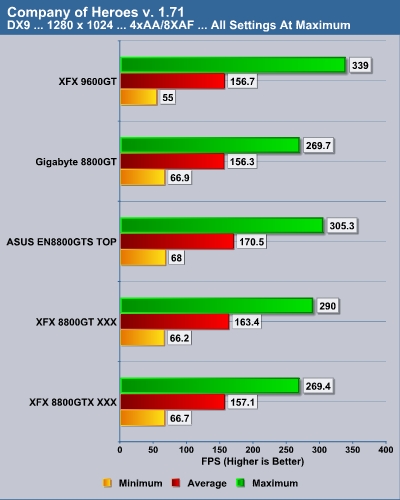

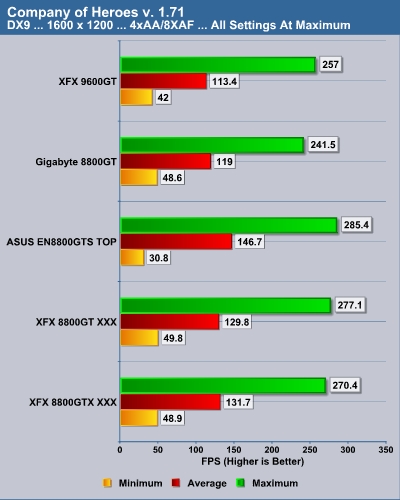

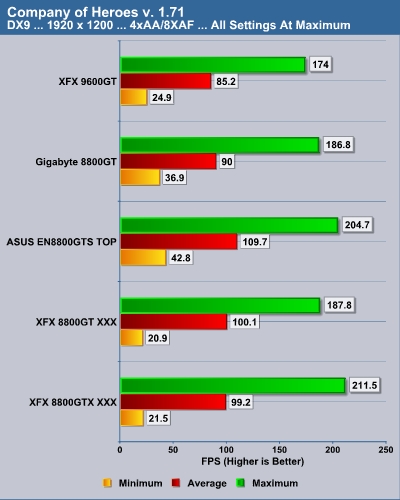

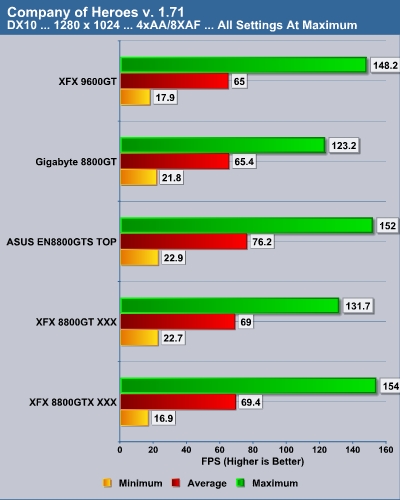

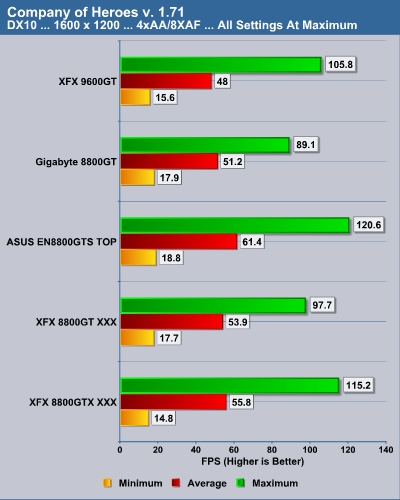

COMPANY OF HEROES v. 1.71

Company of Heroes (COH) is a real-time strategy (RTS) game for the PC, announced in April 2005. It was developed by the Canadian company Relic Entertainment and published by THQ. We gladly switch from the first-person shooters that make up the rest of our gaming benchmarks to this RTS. Why? COH is an excellent game that is incredibly demanding on system resources, which makes it an excellent benchmark. Like F.E.A.R., the game contains an integrated performance test that can be run to determine your system’s performance based on the graphical options you have chosen, and it uses the same multi-staged performance ratings as the F.E.A.R. test. We salute you, Relic Entertainment!

Note: After discussing it numerous times with my fellow reviewers, we decided to run this benchmark with Vsync disabled so that the results would not be capped at around 60fps. Easy, huh? Generally, all you have to do is go into the NVIDIA control panel and globally force Vsync off. Notice I said generally, as that didn’t work for us in this test. After uninstalling and reinstalling drivers and trying everything in our bag of tricks, we turned to the Internet, where we read posts on various forums from people having the same or similar issues with Vista. We’re not just using Vista 64; we’re also using SP1 RC1, which heretofore hadn’t shown any problems. To cut to the chase, we fell back on a solution we used back in days gone by: we added the “-novsync” switch to the end of the Target field in the program’s shortcut, and voilà, it worked. We’re passing this along as a tip in case you run into a similar dilemma.
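For readers who want to try the same workaround, the Target field of a Windows shortcut is just the quoted path to the executable followed by any switches. The helper below builds that string. The install path and the `RelicCOH.exe` file name are assumptions for illustration, so point it at wherever the game actually lives on your system; the launcher function is likewise a hypothetical convenience, not something we used in testing.

```python
import subprocess


def shortcut_target(exe_path: str, switches: list[str]) -> str:
    """Return a shortcut Target string: the quoted executable path plus switches."""
    # Quoting keeps paths containing spaces (e.g. "Program Files") intact.
    return " ".join([f'"{exe_path}"', *switches])


def launch_without_vsync(exe_path: str) -> subprocess.Popen:
    """Start the game with -novsync appended, like the edited shortcut would."""
    return subprocess.Popen([exe_path, "-novsync"])


# Hypothetical install location; adjust for your own system.
COH_EXE = r"C:\Program Files\THQ\Company of Heroes\RelicCOH.exe"
print(shortcut_target(COH_EXE, ["-novsync"]))
```

Pasting the printed line into the shortcut’s Target field has the same effect as typing the switch by hand.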

DX9

DX10

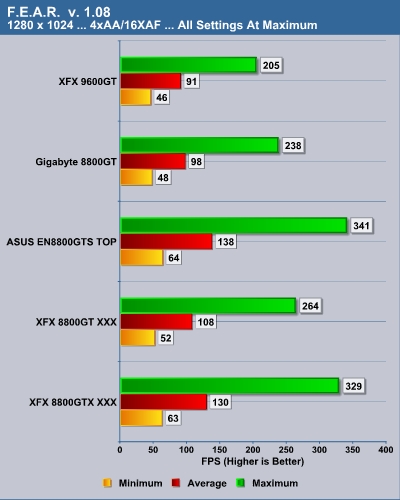

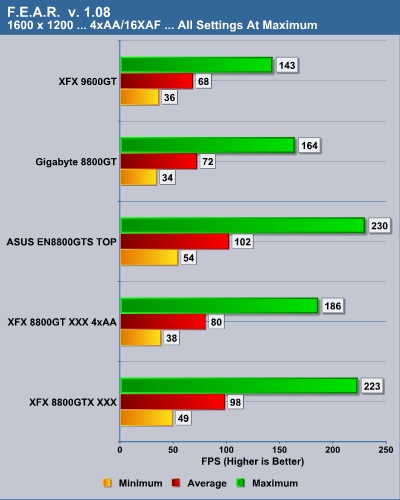

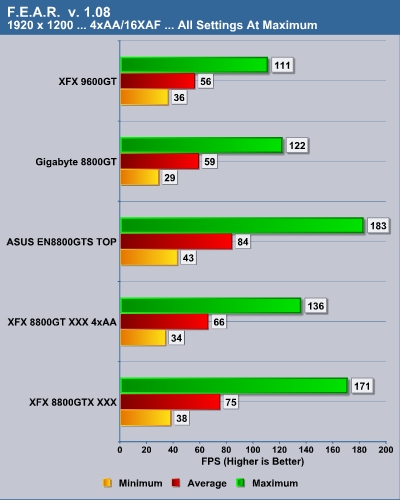

F.E.A.R v. 1.08

F.E.A.R. (First Encounter Assault Recon) is a first-person shooter developed by Monolith Productions and released for Windows in October 2005. It is one of the most resource-intensive games ever released in the FPS genre. The game contains an integrated performance test that can be run to determine your system’s performance based on the graphical options you have chosen. The beauty of the performance test is that it reports maximum, average, and minimum frames per second, as well as the percentage of the test your system spent in each frame-rate category. F.E.A.R. rocks both as a game and as a benchmark!

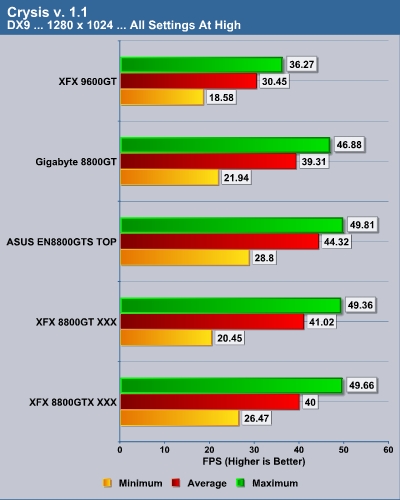

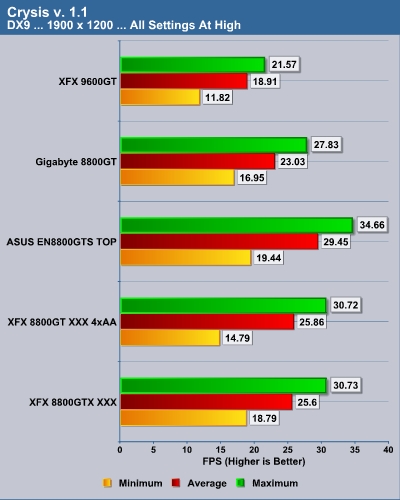

CRYSIS v. 1.1

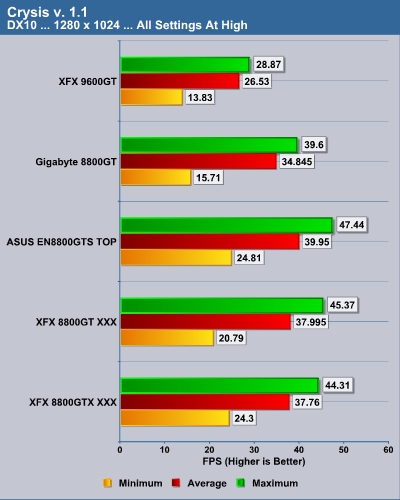

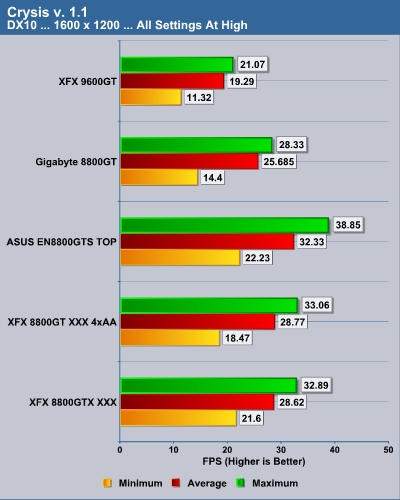

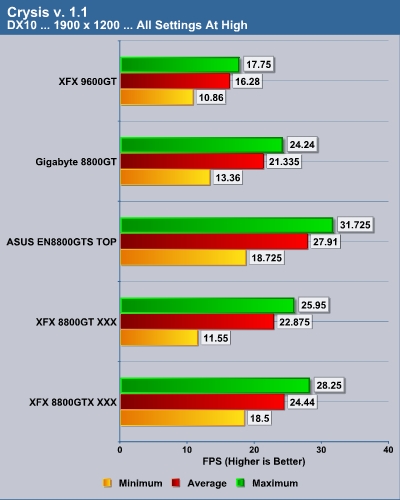

Crysis is the most highly anticipated game to hit the market in the last several years. It is based on CryENGINE 2, developed by Crytek. CryENGINE 2 offers real-time editing, bump mapping, dynamic lights, a network system, an integrated physics system, shaders, shadows, and a dynamic music system, to name just a few of the state-of-the-art features incorporated into Crysis. As one might expect with this many features, the game is extremely demanding of system resources, especially the GPU. We expect Crysis to be a primary gaming benchmark for many years to come.

DX9

DX10

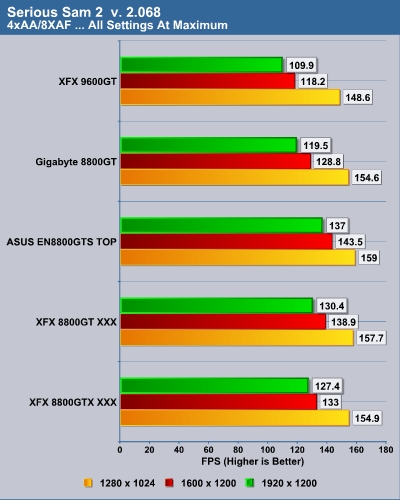

SERIOUS SAM 2 v. 2.068

Serious Sam 2 is a first-person shooter released in 2005 and the sequel to the 2002 computer game Serious Sam. It was developed by Croteam using an updated version of its Serious Engine known as “Serious Engine 2”. We feel this game is an excellent benchmark that provides a variety of challenges for the GPU/VPU being tested. Once again we used benchmarking software from HardwareOC to automate and refine the benchmarking process.

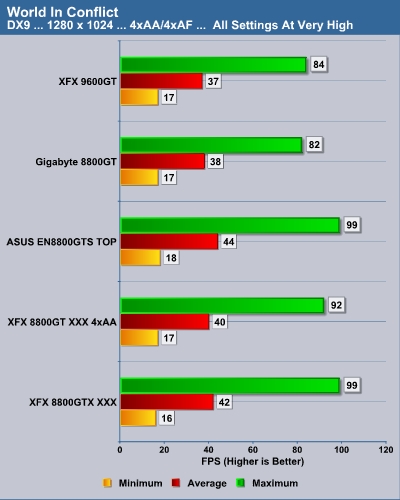

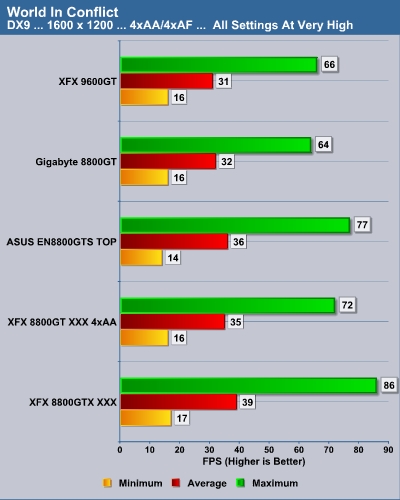

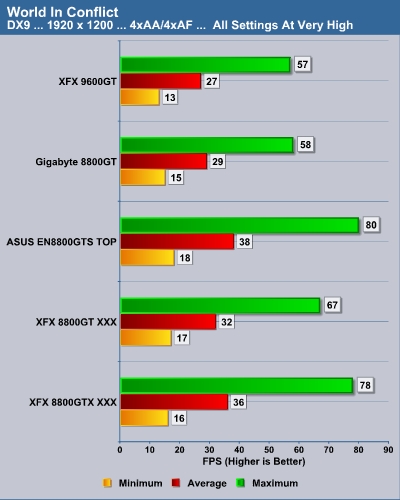

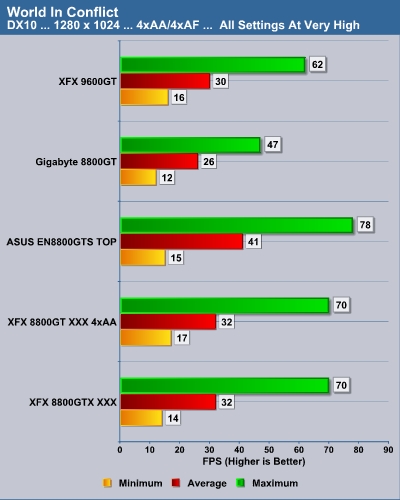

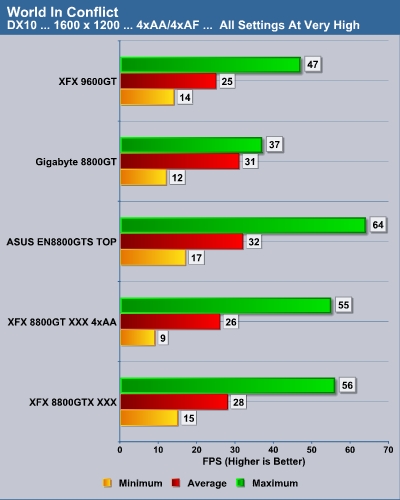

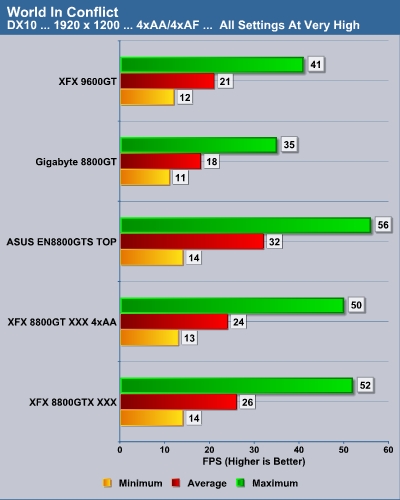

WORLD IN CONFLICT DEMO

World in Conflict has superb graphics, is extremely GPU intensive, can be rendered in both DX9 and DX10, and has built-in benchmarks. Sounds like a match made in heaven for benchmarking!

DX9

DX10

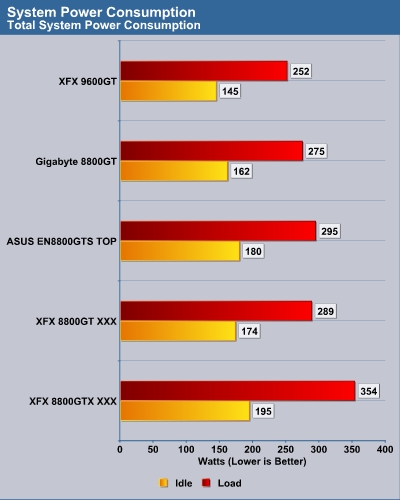

POWER CONSUMPTION

To measure power we used our Seasonic Power Angel, a nifty little tool that measures a variety of electrical values. We used a high-end UPS as our power source to eliminate any power spikes and to condition the current supplied to the test systems. The Seasonic Power Angel was placed in line between the UPS and the test system to measure power draw in watts. Idle power was measured after 15 minutes on an idle desktop with no processes running that would demand additional power. Load power was measured after looping 3DMark06 for 15 minutes at maximum settings with all the eye candy turned on.

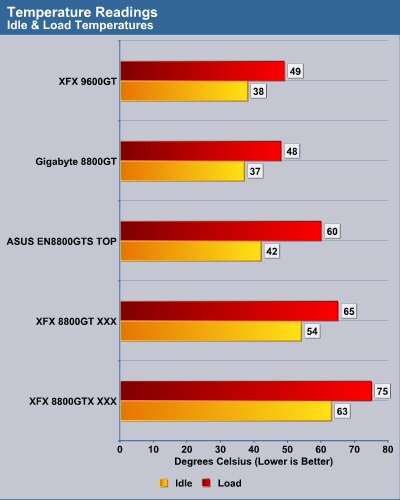

TEMPERATURE

The temperatures of the cards tested were measured using Everest Ultimate Edition v. 4.20.1170 to ensure consistency and remove any bias that might be introduced by each card’s own utilities. We used the same “idle” and “load” procedure for the temperature measurements as for the power consumption measurements.

All we can say is that this must be one extremely cool-running GPU, as the NVIDIA reference cooler is certainly not noted for keeping cards this cool. We only broke the 50 degree Celsius mark during some extreme overclocking of this card, and even then 52 degrees was the highest reading we saw. We should also note that RivaTuner showed the fan on this card set to run at only 35% of its maximum speed in 2D, low power 3D, and performance 3D modes, and we never noticed the fan ramping up its RPM during our testing. To be fair, we tested the XFX 9600GT in the Antec P190 case, which has a 200mm fan blowing almost directly on the GPU; that fan was set to low throughout our testing. We could not even hear this card’s fan over the existing case fans and the fan on our HSF.

OVERCLOCKING

As our testing showed, the XFX 9600GT is on average about 10–15% slower than its closest rival in our comparison, the GIGABYTE 8800GT TurboForce. Again, this is not an apples-to-apples comparison, as the two cards differ in GPU, core clock, shader clock, and memory clock. As in any review, our goal was to push this product to its limit and then back off just a bit to ensure stability. We found the XFX 9600GT to be an excellent overclocking graphics card, with some “Easter Eggs” we had not originally bargained for.
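Percentage gaps like the 10–15% above depend on which card is used as the baseline. A minimal sketch with made-up frame rates (not taken from our charts) shows how the same gap reads from each side.

```python
def percent_difference(fps: float, baseline_fps: float) -> float:
    """Percentage by which fps differs from baseline_fps (negative means slower)."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (fps - baseline_fps) / baseline_fps * 100


# Hypothetical frame rates for illustration only.
card_fps, rival_fps = 52.0, 60.0
print(f"9600GT vs. rival: {percent_difference(card_fps, rival_fps):+.1f}%")
print(f"rival vs. 9600GT: {percent_difference(rival_fps, card_fps):+.1f}%")
```

The same 8 fps gap reads as 13.3% slower from one side and 15.4% faster from the other, so it is worth checking which card a quoted percentage is measured against.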

Before beginning our overclock testing of the XFX 9600GT, we had the pleasure of reading the article “NVIDIA’s shady trick to boost the GeForce 9600GT” over at TechPowerUp.com. We had noticed a similar anomaly when we first checked our card with RivaTuner v. 2.06. The image below shows a core clock reading of 702MHz in RivaTuner with the card at its stock core clock setting of 650MHz.

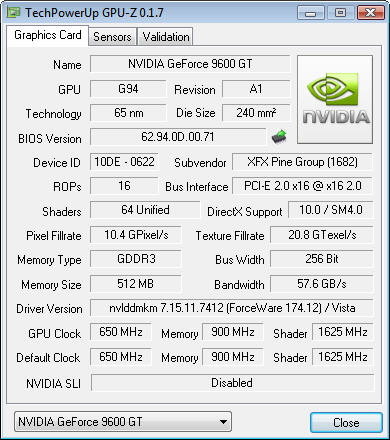

Stock Settings Shown in GPU-Z

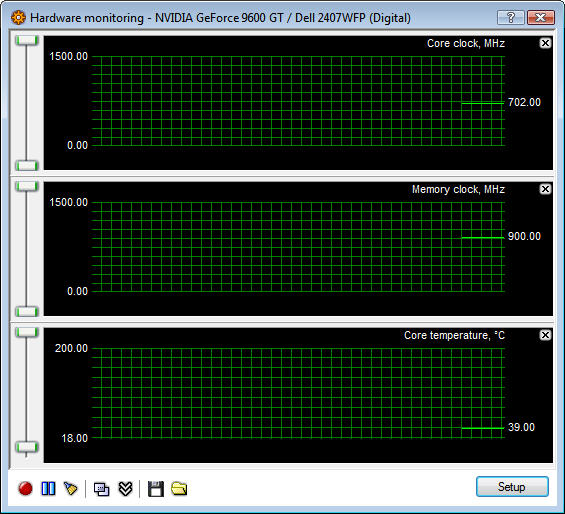

Stock Core Clock Reading in RivaTuner

We pursued overclocking this card as we would any other and achieved what we consider phenomenal results: a core clock of 740MHz, a shader clock of 1850MHz, and a memory clock of 2100MHz. When we checked the core clock in RivaTuner again, we found, much to our surprise, that we were pushing close to 800MHz with this card.
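The two RivaTuner readings we recorded are consistent with a constant ratio between the reported and the set core clock: 702 / 650 = 1.08 at stock. The sketch below simply assumes that ratio holds unchanged while overclocking (in particular, that the PCI-E bus frequency, which as we understand it the TechPowerUp article ties to the 9600GT’s core clock, stays the same) and predicts the overclocked reading. It models only the numbers we observed, not the mechanism behind them.

```python
def predicted_reading(set_clock_mhz: float,
                      ref_set_mhz: float = 650.0,
                      ref_reading_mhz: float = 702.0) -> float:
    """Scale a set core clock by the ratio observed at stock (702 MHz read / 650 MHz set).

    Assumption: the RivaTuner readout stays proportional to the set clock,
    i.e. nothing else (such as the PCI-E bus frequency) changes between runs.
    """
    return set_clock_mhz * ref_reading_mhz / ref_set_mhz


ratio = 702.0 / 650.0
print(f"observed ratio: {ratio:.2f}")
print(f"predicted reading at 740 MHz: {predicted_reading(740.0):.1f} MHz")
```

At our 740MHz setting this predicts 799.2MHz, in line with the “close to 800MHz” RivaTuner showed.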

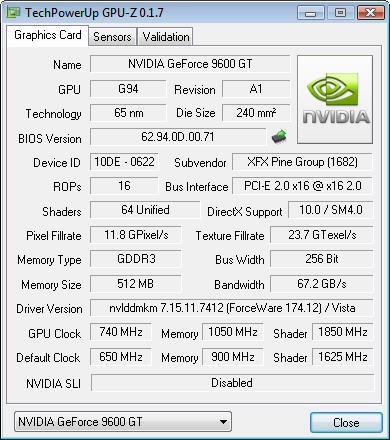

Overclocked Settings Shown in GPU-Z

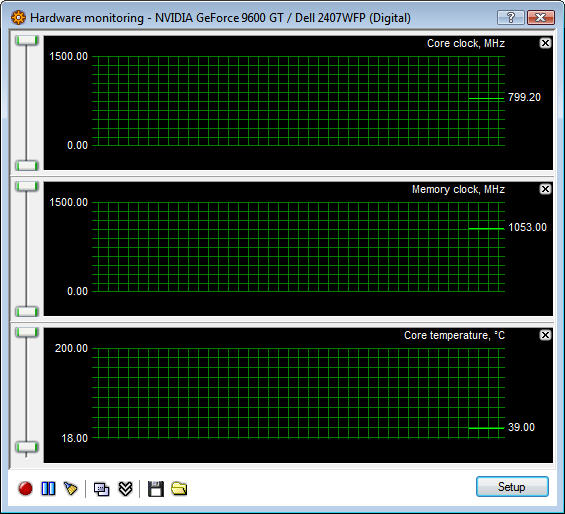

Overclocked Core Clock Reading in RivaTuner

3DMark06 Results at Overclocked Settings

We must say that after this experience we agree entirely with the assertions made in the TechPowerUp article, with one small exception: the conclusion. We prefer to view this revelation optimistically; in other words, we are getting upper-midrange performance we didn’t initially expect from a graphics card priced in what is considered the budget category. It’s like the glass of water being half full or half empty; it all depends on your perspective.

CONCLUSION

As we alluded to in the introduction, there are times with new products when it’s better to let the dust settle a bit before releasing a review for all to see. With the NVIDIA 9600GT in general and the XFX 9600GT in particular, this was certainly one of those times, especially given the scarcity of solid technical information and diagrams for this entire line of graphics solutions. We say this only because we want to give our readers the best possible information in the most timely manner possible.

Given its $179.99 retail price, the XFX 9600GT is considered by most to be an entry-level card in NVIDIA’s newest 9000 series. That being the case, this card offers stellar performance, averaging frame rates within 10% of the 8800GT and in some benchmarks even slightly better. Some computer enthusiasts might initially turn up their noses because this card is certainly not at the top of the heap. To them I say: read some of the reviews out there where this card was run in SLI mode, and you might change your mind. The research we did before writing this article included reading several excellent SLI reviews of the 9600GT, and their results showed some of the best SLI scaling of any card we’ve ever seen. In many cases it was as much as an 80% improvement over single-card performance, which heretofore was almost unheard of. Unfortunately, we had only one review sample and cannot include our own SLI results in this review.
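An “80% improvement” is easiest to judge against the ideal case, in which a second card would double the frame rate. The sketch below converts an SLI result into both figures; the 40 and 72 fps inputs are hypothetical and were chosen only to reproduce an 80% gain.

```python
def sli_scaling(single_fps: float, sli_fps: float) -> tuple[float, float]:
    """Return (improvement over one card in %, share of ideal 2x scaling in %)."""
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    improvement = (sli_fps / single_fps - 1) * 100
    efficiency = sli_fps / (2 * single_fps) * 100
    return improvement, efficiency


# Hypothetical two-card example: 40 fps alone, 72 fps in SLI.
gain, efficiency = sli_scaling(40.0, 72.0)
print(f"{gain:.0f}% improvement, {efficiency:.0f}% of ideal scaling")
```

An 80% gain therefore corresponds to 90% of perfect two-card scaling.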

Another very positive point is that the XFX 9600GT is the coolest card we’ve tested to date that uses the standard NVIDIA reference cooler. At standard factory settings we found the fan on the reference cooler almost inaudible. Power consumption was also 10–15% lower than that of the 8800GTs in our test array. And let us not forget overclocking: this card ranks in the top two of all graphics cards we’ve tested.

The XFX 9600GT is an all-around excellent card that will give any consumer excellent results for a minimal investment. Unless the factory-overclocked cards offer some incredible and unforeseen ability that we can’t imagine at this time, we see no need to spend the extra money on one given this card’s inherent overclocking ability. Given the minimalist approach to the included accessories, we would definitely have liked to see the HDMI upgrade kit included (the kit comes only with the XXX version of the card). We would also have liked to see support for the forthcoming DirectX 10.1 and Shader Model 4.1.

In closing, we can heartily recommend this card to almost any consumer looking for excellent performance for a minimal investment. The XFX 9600GT should be more than up to the task of meeting the vast majority of users’ needs. Enthusiasts who need the fastest card on the block should Google some of the 9600GT SLI reviews I referred to earlier; I think you’ll be quite impressed as well.

Pros:

+ Extremely quiet

+ Excellent performance compared to the cost

+ SLI™ certified

+ Excellent overclocking capabilities

+ Extremely cool even when overclocking

+ Low power consumption

+ Double lifetime warranty

Cons:

– Doesn’t support the forthcoming DirectX 10.1 and Shader Model 4.1

– Accessories to fully support HDMI cost extra

Final Score: 8.5 out of 10 and the Bjorn3D Seal of Approval.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
