
INTRODUCTION

Today NVIDIA and its board partners are introducing NVIDIA’s new high-end graphics card, the 9800GX2. We say card rather than GPU because, like AMD/ATI’s 3870X2, it uses two GPUs. NVIDIA has been down this road before with the 7950GX2 two years ago.

NVIDIA is bringing out the 9800GX2 to replace its flagship product, the 8800 Ultra, which until today was still the fastest card available. We also want to point out that the 8800 Ultra XXX from XFX, which we test against today, sold for $889.00 when it launched about a year ago. The XFX 9800GX2 should sell for $599.00 to $649.00 at e-tail.

XFX the Company

XFX, otherwise known as PINE Technologies, is a graphics card brand that has been around since 1989. It has since made a name for itself with its Double-Lifetime enthusiast-grade warranty on NVIDIA graphics adapters and matching excellent end-user support.

XFX dares to go where the competition would like to, but can’t. That’s because, at XFX, we don’t just create great digital video components–we build all-out, mind-blowing, performance crushing, competition-obliterating video cards and motherboards. Oh, and not only are they amazing, you don’t have to live on dry noodles and peanut butter to afford them.

XFX is a division of PINE Technologies, a leading manufacturer of state-of-the-art processing components.

FEATURES & SPECIFICATIONS

| XFX™ 9800GX2 Technical Specifications | |
| --- | --- |
| Number of Transistors | 1508 Million |
| Memory Bus | 256 bit |
| Memory | 1024 MB |
| Memory Type | DDR3 |
| Memory Clock | 1000 MHz (2000 MHz effective) |
| Stream Processors | 256 |
| Shader Clock | 1500 MHz |
| Clock Rate | 600 MHz |
| Total Memory Bandwidth | 128 GB/s (G94) |
| Bus Type | PCI-E 2.0 |
| Fabrication Process | 65nm |
| ROPs | 32 |
| Texture Filtering Rate | 76.8 Giga Texels/sec |
| HDCP Support | Yes |
| HDMI Support | Yes |
| Connectors | 2 – Dual-Link DVI, 1 – HDMI |
| Power Connectors | 1 – 6 pin, 1 – 8 pin |
| Max Board Power | 197 Watts |
| GPU Thermal Threshold | 105° Celsius |
| Form Factor | Dual Slot |
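
As a rough sanity check on the Total Memory Bandwidth figure in the table, the number can be reproduced from the bus width and the effective memory clock. The sketch below assumes the 256-bit bus and the 2000 MHz effective clock apply to each of the two GPUs separately, which the table does not state outright. The function name is made up for illustration.

```python
# Sketch: peak memory bandwidth from the spec table values.
# Assumption: each of the two GPUs has its own 256-bit bus
# running at 2000 MHz effective.

def memory_bandwidth_gb_s(bus_width_bits, effective_clock_mhz, gpu_count=1):
    """Return peak memory bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8          # 256 bits -> 32 bytes
    transfers_per_second = effective_clock_mhz * 1e6
    return bytes_per_transfer * transfers_per_second * gpu_count / 1e9

per_gpu = memory_bandwidth_gb_s(256, 2000)
total = memory_bandwidth_gb_s(256, 2000, gpu_count=2)
print(f"Per GPU: {per_gpu:.0f} GB/s, total: {total:.0f} GB/s")
```

Under that assumption the card works out to 64 GB/s per GPU, and the two together match the total listed in the table.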

Features

- Unified Architecture

- Lumenex Engine

- 128 Bit FP HDR (High Dynamic Range Rendering)

- GIGA Thread: Batch processing / Load Balancing

- Quantum Engine: Embedded Physics features

- DirectX 10 support

- SLI Support

- HDCP Capable

- Dual-Link DVI

- HDMI Capable with the use of HDMI Certified components

- HDMI Certified

- Double Lifetime Warranty

PACKAGING

XFX has improved its packaging since the old days, when it shipped items in an X-shaped box. Today the card ships in a rectangular box with foam cut to fit the card perfectly. This gives us two inches of foam protection around the card and ½ inch of foam on top of it.

CONTENTS

- XFX 9800GX2

- 2x DVI Connectors

- 1 Molex to 6 pin power connector

- 1 Company of Heroes with DX 10 Update Disk

- Installation Manual

- Quick Install Guide

- Driver Disc

- 1 SPDIF Cable

THE CARD

After opening the box and pulling out the card, I was very impressed by its looks. This is one very classy-looking card. The entire card sits in a casing that covers and protects the important components. I for one am glad to see this happening; how many horror stories have we read of an end user breaking a component on a card? The card is finished in a nice glossy black with XFX artwork on top.

We can see the SLI connector is even enclosed until you are ready to try Quad SLI. The power connectors, one 8-pin and one 6-pin, sit lower in the card’s enclosure. They light up when plugged in correctly and the machine is powered up. There are also lit LEDs on the top backside of the card when it is powered up.

TEST SETUP

The system I am using for testing is what I call a real-world system. That means it’s in a case and runs a security suite, an instant messenger, and various other software that a normal user would have on their machine. Reviewing on a real-world machine is very important to me. Just ask yourself: when was the last time you played a game on, or made daily use of, a machine that was spread all over a bench and not running any security software at all? I don’t think very many of you do.

| Test Platform | |
| --- | --- |
| Processor | Intel QX9650 @ 4GHz |
| Motherboard | ASUS P5E3 Deluxe, BIOS 1001 |
| Memory | 4GB of Corsair DDR3 12800 @ 1600MHz |
| Drive(s) | 2 – Seagate 7200.11 1TB Barracuda; 1 – Seagate 7200.10 750GB |
| Graphics | Video Card #1: XFX® GeForce® 9800GX2 running ForceWare 174.53 64-bit; Video Card #2: XFX® GeForce® 8800 Ultra XXX running ForceWare 169.21 64-bit WHQL |
| Cooling | CoolIT Freezone |
| Power Supply | PC Power and Cooling 1200 |
| Display | Dell 2707 FPW |
| Case | Lian-Li 2000B Plus |
| Operating System | Windows Vista Ultimate 64-bit |

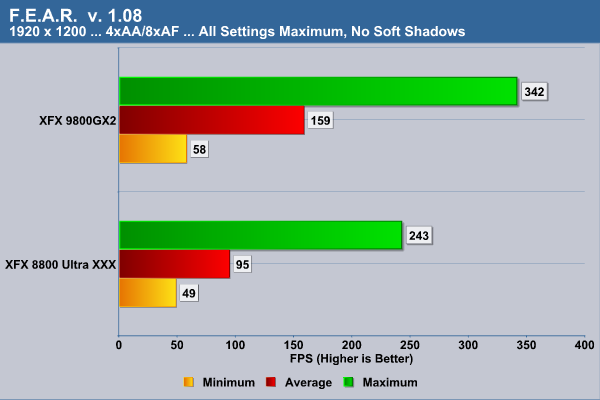

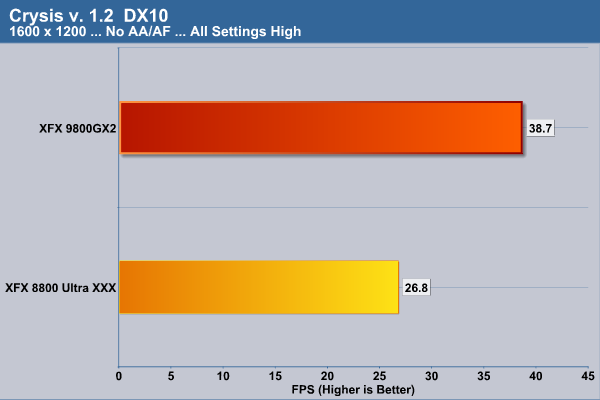

At this stage of the game, high-end graphics cards are meant to be run at 1920×1200 or higher. Running them at a lower resolution is rarely needed, and I have found only one game where it is: Crysis, which I run daily at 1600×1200 instead of 1920×1200, so I will bench it at 1600×1200. The card we are going to test against is an XFX 8800 Ultra XXX. I had hoped to have an ATI X2 card to compare to but could not get one in time. From what we have seen on other sites, the 8800 Ultra XXX is still faster than the 3870X2, so this should be a good battle to see whether the 9800GX2 is the new king of the hill.

| Comparative Specifications | XFX 9800GX2 | XFX 8800 Ultra XXX |
| --- | --- | --- |
| Memory | 1024 MB | 768 MB |
| Memory Clock | 2.0 GHz | 2.0 GHz |
| Stream Processors | 256 | 128 |
| Shader Clock | 1500 MHz | 1667 MHz |
| Clock Rate | 600 MHz | 675 MHz |

| Synthetic Benchmarks & Games |
| --- |
| 3DMark06 v. 1.10 |
| Company of Heroes v. 1.71 DX 10 |
| Crysis v. 1.2 DX 10 |
| World in Conflict DX 10 |
| F.E.A.R. v. 1.08 |
| Half-Life 2: Lost Coast |

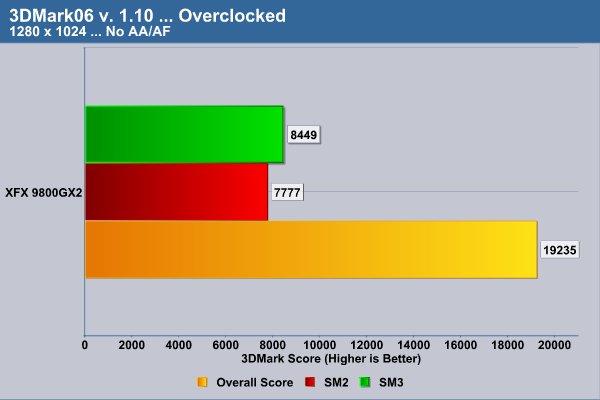

Overclocking

| Overclocked Specifications | XFX 9800GX2 |
| --- | --- |
| Memory Clock | 2.106 GHz |
| Shader Clock | 1652 MHz |
| Clock Rate | 661 MHz |
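
To put the overclock in perspective, the sketch below works out the percentage gain of each clock over stock. It takes the stock values from the comparative specifications table (2.0 GHz memory, 1500 MHz shader, 600 MHz core). The dictionary and function names are only illustrative.

```python
# Sketch: percentage gain of the overclocked 9800GX2 over its stock clocks.
# All values in MHz; stock figures come from the comparative table,
# overclocked figures from the overclocked specifications table.

STOCK_MHZ = {"Memory Clock": 2000, "Shader Clock": 1500, "Clock Rate": 600}
OVERCLOCKED_MHZ = {"Memory Clock": 2106, "Shader Clock": 1652, "Clock Rate": 661}

def percent_gain(stock, overclocked):
    """Return the increase over stock as a percentage."""
    return (overclocked - stock) / stock * 100

gains = {name: percent_gain(STOCK_MHZ[name], OVERCLOCKED_MHZ[name])
         for name in STOCK_MHZ}

for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")
```

By this arithmetic the core and shader clocks each gained roughly 10%, while the memory clock gained a little over 5%.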

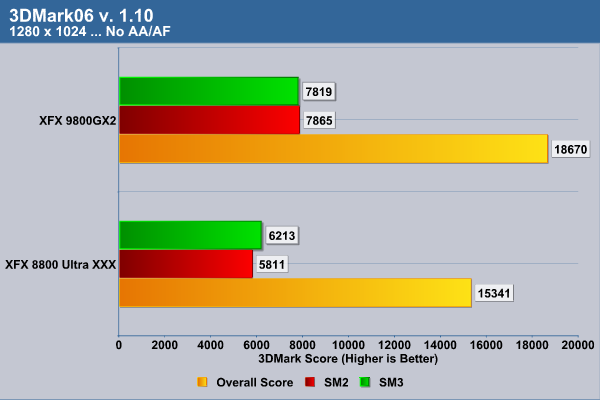

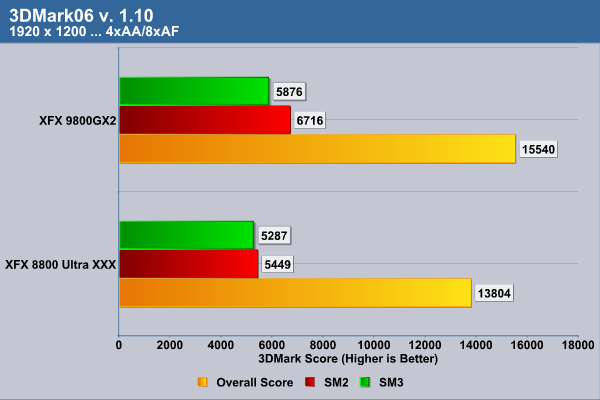

3DMark 2006

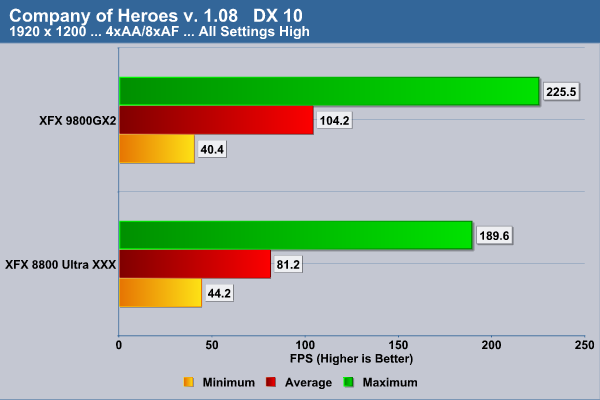

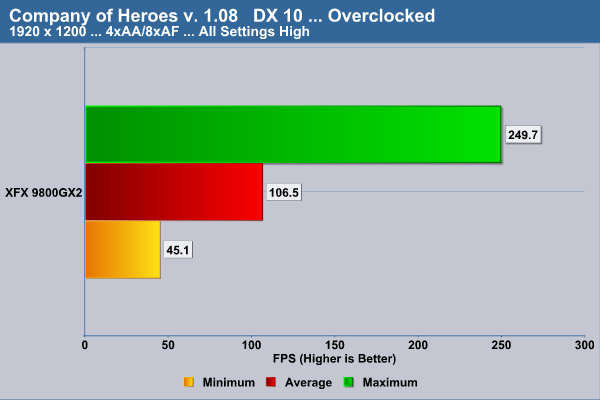

Company of Heroes

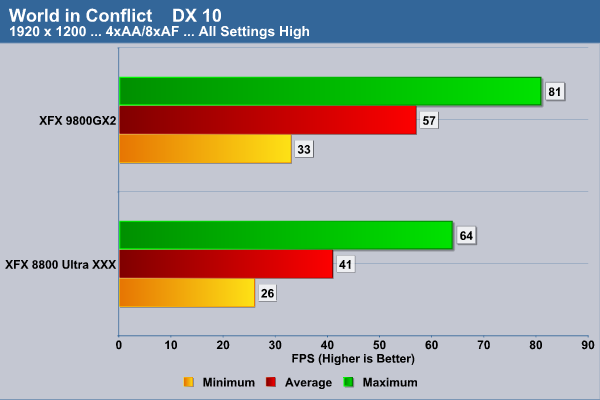

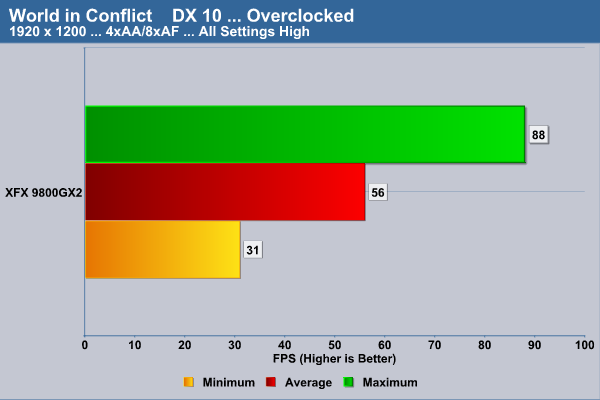

World in Conflict

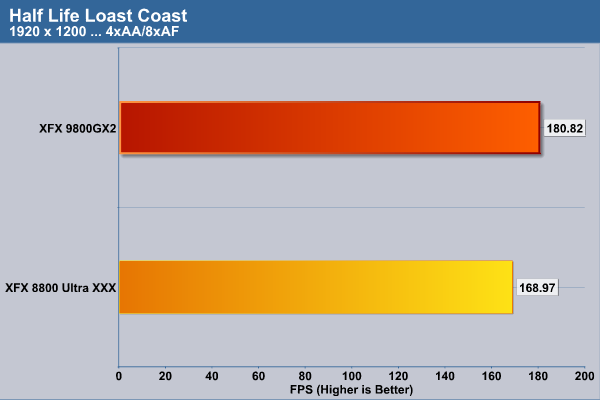

HALF-LIFE 2: LOST COAST

F.E.A.R.

Crysis

POWER

To measure the power draw, I left the machine at idle with the monitor off. Then I turned on the monitor, started the Crysis benchmark, and shut off the monitor to measure load levels. All readings were taken from the wattage draw reading on my battery backup.

| Power Draw | Idle (W) | Load (W) |
| --- | --- | --- |
| XFX 9800GX2 | 368 | 501 |
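
For a quick read on these numbers, the sketch below computes how much the draw rises from idle to load. Keep in mind the readings are for the whole system as seen by the battery backup, not the card alone. The unit is assumed to be watts, per the wattage reading described above, and the variable names are only illustrative.

```python
# Sketch: idle-to-load rise in whole-system power draw.
# Assumption: both readings are in watts, as reported by the battery backup.

idle_w = 368   # system at idle, monitor off
load_w = 501   # system running the Crysis benchmark, monitor off

delta_w = load_w - idle_w                 # extra draw under load
increase_pct = delta_w / idle_w * 100     # rise relative to idle

print(f"Load adds {delta_w} W over idle (+{increase_pct:.1f}%)")
```

The difference reflects everything that works harder during the benchmark, including the overclocked CPU, so it should not be read as the card's draw on its own.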

TEMPS

The temperature readings were taken with RivaTuner 2.08, first at idle and then through the Crysis benchmark.

| Temperature | Idle | Load |
| --- | --- | --- |
| Core 1 | 65°C | 74°C |
| Core 2 | 67°C | 75°C |

VIDEO PLAYBACK

To look at how well the graphics card’s video engine handled 1080p video, I put it up against a 7800 GTX 512MB and watched Hi-Def videos while keeping track of overall CPU usage on my machine. I looked at over 10 different videos on both cards, recorded the scores, and came up with the following average CPU usage.

| Video Playback | Avg. CPU Usage |
| --- | --- |
| XFX 9800GX2 | 13% |
| 7800 GTX 512MB | 37% |

CONCLUSION

The XFX 9800GX2 has so far left a good impression on me. Of course, having it for only a couple of days before releasing a review did not let me play with it as much as I would have liked. I did run some other games on it, including Microsoft’s demanding Flight Simulator X: Acceleration at 1920×1200, and the game was much more fluid than on the 8800 Ultra XXX. The biggest benefit of the card for me right now is that I have SLI power on a non-NVIDIA chipset. We at Bjorn3D hope to get hold of a 790i motherboard from NVIDIA soon and try Quad SLI with the product.

Many people have been dinging this card’s performance in leaked reviews. Bjorn3D does not have that complaint. If you consider that the card it is beating today sold for $889.00 USD when it was new and that this card is going for $599.00-$649.00 USD, we can’t complain. Bjorn3D hopes to soon have an Asus 3870X2 and an Asus 9800GX2 to compare against each other, to give you an interesting head-to-head between NVIDIA’s card and its competition.

Pros:

+ SLI in a single-card solution

+ Good performance

+ Low power consumption for a dual-GPU card

+ Double Lifetime warranty

+ Ready for Quad SLI

Cons:

– Price may turn some off

– Power connectors could be hard for some to disconnect

– Doesn’t support the forthcoming release of DirectX 10.1 and Shader Model 4.1

Final score: The XFX 9800GX2 scores an 8.5 (Very good) out of 10.

Bjorn3D.com – Satisfying Your Daily Tech Cravings Since 1996
