As technology marches on, new products are introduced all the time. Some arrive as soon as they’re developed, while others are introduced at strategic intervals. The 9800 GTX+ is just such a product. Built on a 55nm process and offered at an MSRP that will turn heads, NVIDIA’s new card has ATI dead in its sights.

INTRODUCTION

A funny thing happened the other day. We at Bjorn3D had just finished covering the launch of the GTX 200 GPUs and were turning our attention to AMD’s launch of its new GPUs when NVIDIA called us. They told us they had upgraded the 9800 GTX with faster clocks and a smaller core. They were calling it the 9800 GTX+ and it was poised to take on the competition’s newest GPU, the ATI 4850. They were going to send us a pair of these new cards and wanted to have the article go live on the 25th, the original launch day of the 4850. That’s when it dawned on us: ATI is back and they have NVIDIA shaking in their GPUs! Just a couple of short days later we got news that AMD had found out what NVIDIA was up to and pushed the launch of the 4850 up six days ahead of schedule. When NVIDIA got wind of this, they lifted their embargo on the 9800 GTX+. Ahhhh, isn’t competition great!

Exciting plot lines and ethically dubious marketing aside, we at Bjorn3D can’t remember a time when the mid-range market was this exciting! Oh wait, it was eight months ago when the 8800 GT showed up, though without any real competition. Regardless of which side of the fence you are on, it is a great day for PC gaming everywhere, not to mention for the expansion of GPU computing into other areas like video transcoding and super-computing. Without further ado, I present to you the newest card in NVIDIA’s arsenal in the ongoing battle for the mid-range market, the 9800 GTX+. The plus means better!

SPECIFICATIONS

To help highlight some of the physical changes that took place with this new card we compiled this short list of specs.

NVIDIA 9800 GTX+ GPU

| | NVIDIA 9800 GTX+ | NVIDIA 9800 GTX | ATI 4850 |
|---|---|---|---|
| Fabrication Process | 55nm | 65nm | 55nm |
| Transistor Count | 754m | 754m | 965m |
| Core Clock Rate | 740 MHz | 675 MHz | 625 MHz |
| SP Clock Rate | 1,836 MHz | 1,675 MHz | – |
| Streaming Processors | 128 | 128 | 800 |
| Memory Type | GDDR3 | GDDR3 | GDDR3 |
| Memory Clock | 1,100 MHz (2,200 MHz) | 1,100 MHz (2,200 MHz) | 1,000 MHz (2,000 MHz) |
| Memory Interface | 256-bit | 256-bit | 256-bit |
| Memory Bandwidth | 70.4 GB/s | 70.4 GB/s | 64.0 GB/s |
| Memory Size | 512 MB | 512 MB | 512 MB |
| ROPs | 16 | 16 | 16 |
| Texture Filtering Units | 64 | 64 | 40 |
| Texture Filtering Rate | 47.4 GigaTexels/sec | 43.2 GigaTexels/sec | 25.0 GigaTexels/sec |
| RAMDACs | 400 MHz | 400 MHz | 400 MHz |
| Bus Type | PCI-E 2.0 | PCI-E 2.0 | PCI-E 2.0 |
| Power Connectors | 2 x 6-pin | 2 x 6-pin | 1 x 6-pin |

The 9800 GTX+ is basically the same card as the 9800 GTX but with a smaller core and higher core and shader clocks. The memory runs at the same speed as before, and the rest of the card is unchanged. It will be interesting to see how much power this card uses with its smaller manufacturing process.
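For readers who want to see where the bandwidth and texture rate figures in the spec list come from, here is a minimal sketch. It assumes the usual back-of-the-envelope formulas (bus width in bytes times effective memory clock, and texture units times core clock) with 1 GB taken as 10^9 bytes. The function names are our own for illustration and are not part of any vendor tool.

```python
def memory_bandwidth_gbs(bus_width_bits, effective_clock_mhz):
    """Peak memory bandwidth in GB/s, with 1 GB = 10**9 bytes."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_clock_mhz * 1e6 / 1e9


def texture_rate_gtexels(texture_units, core_clock_mhz):
    """Peak texture filtering rate in GigaTexels/sec."""
    return texture_units * core_clock_mhz * 1e6 / 1e9


# Figures copied from the spec list above (effective memory clock in MHz).
cards = {
    "9800 GTX+": {"bus": 256, "mem": 2200, "tmus": 64, "core": 740},
    "9800 GTX": {"bus": 256, "mem": 2200, "tmus": 64, "core": 675},
    "ATI 4850": {"bus": 256, "mem": 2000, "tmus": 40, "core": 625},
}

for name, c in cards.items():
    bandwidth = memory_bandwidth_gbs(c["bus"], c["mem"])
    texels = texture_rate_gtexels(c["tmus"], c["core"])
    print(f"{name}: {bandwidth:.1f} GB/s, {texels:.1f} GigaTexels/sec")
```

These are theoretical peaks only. They say nothing about how efficiently each architecture uses its bandwidth in a real game.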

TEST SETUP

All tests were conducted on the following platform. The hard drives were formatted and a fresh install of the OS was performed. The latest drivers were then installed and all non-essential applications were halted.

| Test Platform | |
|---|---|
| Processor | Intel QX9650 @ 3.5GHz |
| Motherboard | XFX 790i Ultra |
| Memory | 2 GB (2 x 1 GB) Mushkin DDR3-2000 |
| Drive(s) | 1 – Seagate 80GB Barracuda SATA; 1 – Samsung HD501LJ SATA |
| Graphics | Card 1 – NVIDIA 9800 GTX+ (177.39); Card 2 – NVIDIA 9800 GTX+ SLI (177.39); Card 3 – ATI 4850 (Catalyst 8.6) |
| Sound | On board |
| Cooling | Big Typhoon VX |
| Power Supply | OCZ GameXStream 850 watts |
| Display | Westinghouse 37″ LVM-37W3 |
| Case | No case |
| OS | Windows Vista Ultimate 32-bit |

Test Methods

All cards will be tested using a variety of games. For Crysis, we will be testing the game both without any image enhancements and then again with 2x Anti-Aliasing and 8x Anisotropic Filtering. All other games will be tested with 4x AA and 16x AF. The resolutions tested are 1280×1024, 1680×1050 and finally 1920×1080.

FRAPS is used to test both Call of Duty 4 and Oblivion. A manual run-through of each game is performed three times and the three results are averaged together. This gives us real-world scores.
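The three-run averaging described above can be sketched in a few lines. The frame rates below are placeholder values, not measurements from this review, and the helper names are our own. The spread check is an extra we find handy for spotting a bad run. It is not something FRAPS reports.

```python
from statistics import mean


def average_fps(runs):
    """Average the per-run FPS figures from repeated manual run-throughs."""
    if not runs:
        raise ValueError("need at least one run")
    return mean(runs)


def spread_percent(runs):
    """Gap between the best and worst run, as a percentage of the average."""
    return (max(runs) - min(runs)) / average_fps(runs) * 100


# Placeholder numbers for three manual run-throughs (hypothetical).
runs = [61.8, 62.4, 62.1]

print(f"Average: {average_fps(runs):.1f} FPS")
print(f"Run-to-run spread: {spread_percent(runs):.2f}%")
```

If the spread comes out much larger than a percent or two, it is usually worth repeating the run rather than averaging it in.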

For comparison today we have the newly released ATI 4850. The 9800 GTX+ will be occupying the $229 price point which is a hair above what the 4850 will cost ($199). In addition, the previous 9800 GTX will now be reduced to $199 which is great news for everyone who didn’t buy a 9800 GTX within the last few weeks. Check back in a few days for our full evaluation of the ATI 4850 and a review on a retail sample of this mid-range powerhouse.

OVERCLOCKING

It is reasonable to assume that since the 9800 GTX+ is built on a smaller manufacturing process, it would allow for more overclocking headroom. Then again, since the card is already clocked higher, that headroom may already have been used up. Enough theorizing; here are our results.

Stock & Overclocked

We see that this new card even caught the makers of GPU-Z off guard, as it still recognizes the card as its 65nm cousin. Regardless of the error in the info, take a look at those clock speeds. Those are simply awesome memory bandwidth gains, thanks to the new Hynix memory chips employed by the 9800 GTX+. The Hynix chips are 0.8 ns chips rated for 1200 MHz (2400 MHz) operation. We also see a nice bump in core speed over its already increased frequency thanks to the 55nm process. The best part of all of this is that it was done with the stock cooler. No exotic dry ice cooling here. Time to see what all this adds up to.
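To put the memory rating in perspective, here is a rough sketch of how much headroom sits between the stock 1,100 MHz memory clock and the 1,200 MHz the chips are rated for. It assumes the 256-bit bus from the spec list and treats the rated speed as a hypothetical target. It is not the overclock we actually reached.

```python
def percent_gain(stock_mhz, target_mhz):
    """Clock increase from stock to target, in percent."""
    return (target_mhz - stock_mhz) / stock_mhz * 100.0


def bandwidth_gbs(bus_width_bits, effective_mhz):
    """Peak bandwidth in GB/s for a given bus width and effective clock."""
    return bus_width_bits / 8 * effective_mhz / 1000.0


STOCK_MHZ = 1100   # stock memory clock from the spec list
RATED_MHZ = 1200   # rating of the Hynix chips quoted above
BUS_BITS = 256     # memory interface width from the spec list

gain = percent_gain(STOCK_MHZ, RATED_MHZ)
stock_bw = bandwidth_gbs(BUS_BITS, STOCK_MHZ * 2)  # GDDR3 transfers twice per clock
rated_bw = bandwidth_gbs(BUS_BITS, RATED_MHZ * 2)

print(f"Headroom to rated speed: {gain:.1f}%")
print(f"Bandwidth: {stock_bw:.1f} GB/s stock, {rated_bw:.1f} GB/s at rated speed")
```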

TEST RESULTS

We begin our testing today with the synthetic benchmarks. Remember, while these scores are fun to compare, they rarely translate into real-world gaming performance. With that said, here are our results.

3DMark2006

3DMark Vantage

For 3DMark Vantage we added an extra score into the mix here. With NVIDIA’s acquisition of Ageia and their PhysX technology, we will begin to see NVIDIA GPUs handling double duty. NVIDIA released a PhysX enabled Forceware driver with the 9800 GTX+ and coupled with the PhysX application driver we will see new levels of realism. 3DMark Vantage has an included test for PhysX enabled machines so it is important to mention all of this since it will result in an increased score as shown below.

In case you were wondering what happened with the SLI scores for the CPU, you’re not alone. I tested and retested this configuration, then checked my setup and drivers. At this time it appears that the PhysX application driver does not work with SLI. Even so, the gain in CPU performance for single card setups is nothing short of astonishing and translates into a 1,050-point increase in the total score in Performance mode.

Call of Duty 4

We kick off our real-world testing with Call of Duty 4, which has been shown to scale excellently with dual card configurations.

Excellent results across the board, with near perfect SLI scaling at the higher resolutions, where the CPU bottleneck seen at lower resolutions no longer gets in the way. In single card performance we see the new 4850 stay in step with the 9800 GTX+ at every resolution tested.
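For anyone wanting to put a number on "near perfect scaling", one common way is to compare the dual card frame rate with the single card frame rate. The sketch below uses made-up frame rates purely for illustration, and the helper is our own, not part of any benchmark tool. A 100% gain means the second card doubled performance.

```python
def sli_scaling(single_fps, dual_fps):
    """Return (speedup, gain_percent) of a dual card setup over a single card."""
    if single_fps <= 0:
        raise ValueError("single card FPS must be positive")
    speedup = dual_fps / single_fps
    gain_percent = (speedup - 1.0) * 100.0
    return speedup, gain_percent


# Hypothetical results: (single card FPS, SLI FPS) per resolution.
results = {
    "1280x1024": (90.0, 120.0),   # CPU-limited, so SLI helps less
    "1920x1080": (50.0, 95.0),    # GPU-limited, so SLI helps more
}

for resolution, (single, dual) in results.items():
    speedup, gain = sli_scaling(single, dual)
    print(f"{resolution}: {speedup:.2f}x ({gain:.0f}% gain from the second card)")
```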

Company of Heroes v2.301

For Company of Heroes we have one graph showing the DirectX 9 performance followed by the DirectX 10 performance at the same resolution. This hopefully makes it easy to not only compare performance of the cards but to also show the hit to performance each card takes when moving to DirectX 10. It should also be noted that even though DirectX 10 unlocks a couple of additional settings we did not enable them. This way the only change being performed is the different rendering path used.

The NVIDIA 9800 GTX+ performs very well here despite the existence of a bug when running in DirectX 10 mode. The minimum FPS drops pretty severely and we see a 50% reduction in frame rate across the board when moving to DX10.

The Elder Scrolls IV – Oblivion v1.2.0416

We test Oblivion by using a manual run-through and record our performance using FRAPS. I start outside the town of Cheydinhal on horseback and, taking the same route each time, travel to the Imperial City. The trip takes a full six minutes and encompasses long view distances, water, forests, Oblivion gates and NPCs. Being such a long trip, any changes from one run to the next (e.g. a wandering NPC) would have an effect of less than 1% on frame rate. This method gives me great insight into the real-world performance of each card tested. All game settings were enabled and set to the maximum level.

Oblivion is not kind to SLI, as we see very small gains to be had by using it. We include scores from Oblivion because even though it is an older game, it continues to stress even the newest cards.

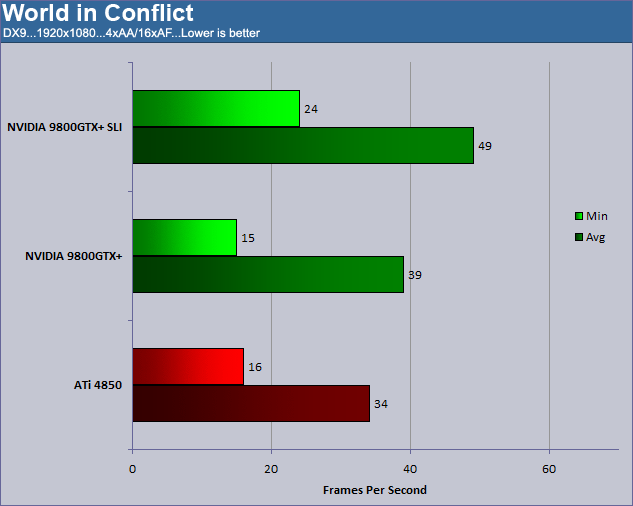

World in Conflict

World in Conflict is the only game where we enable the additional features that DirectX 10 allows us. We do this to show how stressful the new DX10 features can be to video cards. For each resolution tested every game setting was set to its maximum level.

We see the 9800 GTX+ and the ATI 4850 trade places with each successive test. SLI yields pretty nice gains as well.

Crysis v1.21

Crysis is the other game besides Company of Heroes that allows for additional features under DX10 that we do not enable. Again, this is to show the impact of using only DX10 as opposed to DX9 under Windows Vista. The game was set to HIGH for both DX9 and DX10 testing, and the scores shown are from tests conducted both with and without AA and AF. They are labeled as such.

The one thing that sticks out right away is the performance SLI gains from moving to DX10 when you do not use AA/AF. Everyone has their individual tastes and, speaking only for myself, I prefer to have a better looking game, so AA/AF is a must. Having jaggies run across the edges of everything on the screen is a great way to kill the immersion factor faster than bad voice acting.

Once again we see the might of the redesigned 9800 GTX+ and the G92 core. The little core that could continues to show its value even as ATI introduces its new lineup of cards. I would be cautious, though, as the ATI card is so new that it is sure to have some additional performance squeezed from it through future driver updates.

TEST RESULTS

Power

Here is where we separate the men from the boys. Sure, you’ve seen all the great performance this card has to offer, but at what cost does it come? Will you need to refinance that home of yours to keep gaming for a few more months, or will solar power be enough to keep you in the game? Power levels shown below are for the entire system sans monitor.

The 9800 GTX+ performs pretty well here thanks to its smaller core, though ATI continues its dominance in this field. The 9800 GTX+ does not have the ability to underclock itself like the GTX 200 GPUs do, which is why we see idle levels higher than the 4850’s. As usual, SLI shows a big power draw. At least in a single card setup the amount of power being consumed is reasonable, which translates into less heat and usually a quieter PC.

Temp

Now that we have an idea about what kind of power the NVIDIA 9800 GTX+ draws we can see just how effective its rather beefy heat sink and fans are.

Idle temps were taken after a 15 minute period of sitting at the desktop with no open windows. Load temps were recorded by using ATiTool’s 3D view to place a constant, heavy load on the card.

| Idle Temperature (°C) | Load Temperature (°C) |
|---|---|
| 60 | 70 |

Not bad. The temps are pretty tolerable, and at a load temp of 70 °C you can be sure your card won’t melt halfway through your favorite game. The idle temps are a bit high, but the interesting part of the whole deal is how quiet the fan stayed during testing. It never made enough noise to disrupt the quiet room I test in. Even manually setting the fan all the way up to 60% kept it effectively silent and yielded much better temps.

All in all, the 9800 GTX+ takes what the 9800 GTX is, and the 8800 GTX before that, and improves upon it in every way. Let’s wrap it all up, shall we?

CONCLUSION

As of today the 9800 GTX+ is not available for purchase, so this was just a not-so-brief preview of its performance. The G92 core continues to live a healthy life long after most other cores would have been retired. It seems NVIDIA keeps finding new ways to implement it at different price points. Speaking of price points, NVIDIA plans to sell this card at $229 and has it positioned directly against ATI’s 4850. It is quite obvious that the 9800 GTX+ only exists due to the revitalized competition ATI has brought forth. Anyone who thinks NVIDIA, or any other company for that matter, would continue to innovate in the face of market dominance need only look to the GTX 280: a power-hungry, expensive card that is only marginally faster than the much less expensive 9800 GX2. Regardless of anyone’s feelings on the matter, it is great to have this level of competition for the mid-range enthusiast. Why? Because when there is this kind of product positioning and pricing going on, the winner is the consumer.

The level of performance seen here today would easily have been out of reach for a mid-range gamer just 12 months ago. What would have required an 8800 GTX card costing over $400 can now be had for less than $250, and we just love that. The 9800 GTX+ is a smaller, more power efficient version of the 9800 GTX with higher clock speeds and solid overclocking abilities. It is a well-priced, mid-range card which NVIDIA informs us should be available on or about the 16th of July. With cards like the 9800 GTX+, 2008 is shaping up to be the year of the mid-range gamer!

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
