Nvidia finally replaces the lower-end 8 series cards with the GeForce 210. Today we see how the Gigabyte version of the card performs.

Introduction

Over two years have passed since Nvidia dived into the low-end graphics card market. Today we have Nvidia’s latest entry, the G210. This card features a 64-bit memory bus, 512 MB of GDDR2 memory, and a GPU built on a 40nm process. The smaller process should mean lower power draw and lower temperatures, which is great for poorly ventilated cases, and that is probably where the card will end up since it is a low-profile card. So far this card is shaping up to be a great fit for a low-power computer.

Today we have the Gigabyte version of the G210. It of course has all of the features of the reference G210, but Gigabyte has decided to make it their own to differentiate it from the crowd. This includes Gigabyte’s great software, and a gold-plated HDMI port for a better signal. Let’s jump right in and explore more of this card.

Features

| Feature | Description |
|---|---|
| Microsoft Windows 7 | Microsoft Windows 7 is the next generation operating system that will mark a dramatic improvement in the way the OS takes advantage of the graphics processing unit (GPU) to provide a more compelling user experience. By taking advantage of the GPU for both graphics and computing, Windows 7 will not only make today’s PCs more visual and more interactive but also ensure that they have the speed and responsiveness customers want. |
| Windows Vista® | Enjoy powerful graphics performance, improved stability, and an immersive HD gaming experience for Windows Vista. ATI Catalyst™ software is designed for quick setup of graphics, video, and multiple displays, and automatically configures optimal system settings for lifelike DirectX 10 gaming and the visually stunning Windows Aero™ user interface. |
| RoHS Compliant | As a citizen of the global village, GIGABYTE exert ourselves to be a pioneer in environment care. Give the whole of Earth a promise that our products do not contain any of the restricted substances in concentrations and applications banned by the RoHS Directive, and are capable of being worked on at the higher temperatures required for lead free solder. One Earth and GIGABYTE Cares! |
| OpenGL 3.1® Optimizations | Ensure top-notch compatibility and performance for all OpenGL 3.1 applications. |
| Microsoft® DirectX® 10.1 | World’s first DirectX 10.1 GPU with full Shader Model 4.0 support delivers unparalleled levels of graphics realism and film-quality effects. |
| Shader Model 4.1 | Shader Model 4.1 adds support for indexed temporaries which can be quite useful for certain tasks. Regular direct temporary access is preferable in most cases. One reason is that indexed temporaries are hard to optimize. The shader optimizer may not be able to identify optimizations across indexed accesses that could otherwise have been detected. Furthermore, indexed temporaries tend to increase register pressure a lot. An ordinary shader that contains for instance a few dozen variables will seldom consume a few dozen temporaries in the end but is likely to be optimized down to a handful depending on what the shader does. This is because the shader optimizer can easily track all variables and reuse registers. This is typically not possible for indexed temporaries, thus the register pressure of the shader may increase dramatically. This could be detrimental to performance as it reduces the hardware’s ability to hide latencies. |
| PCI-E 2.0 | PCI Express® 2.0: now you are ready for the most demanding graphics applications thanks to PCI Express® 2.0 support, which allows up to twice the throughput of current PCI Express® cards. Doubles the bus standard’s bandwidth from 2.5 Gbit/s (PCIe 1.1) to 5 Gbit/s per lane. |
| GigaThread™ Technology | Massively multi-threaded architecture supports thousands of independent simultaneous threads, providing extreme processing efficiency in advanced, next generation shader programs. |
| HDCP Support | High-Bandwidth Digital Content Protection (HDCP) is a form of copy protection technology designed to prevent transmission of non-encrypted high-definition content as it travels across DVI or HDMI digital connections. |
| HDMI Ready | High Definition Multimedia Interface (HDMI) is a new interface standard for consumer electronics devices that combines HDCP-protected digital video and audio into a single, consumer-friendly connector. |
| CUDA Technology | NVIDIA® CUDA™ technology unlocks the power of the hundreds of cores in your NVIDIA® GeForce® graphics processor (GPU) to accelerate some of the most performance hungry computing applications. The CUDA™ technology has already been adopted by thousands of programmers to speed up those performance hungry computing applications. |

The features are pretty standard on Nvidia cards these days. It is nice to see Nvidia didn’t cut out any of them.
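To put the PCI-E 2.0 entry in the feature list into numbers, here is a rough sketch of what the doubled line rate means for usable bandwidth. It assumes an x16 link (the `lanes` default is our assumption, not something the feature list states) and uses the 8b/10b encoding that both PCIe 1.x and 2.0 rely on.

```python
# Raw per-lane signalling rates quoted in the feature list, in Gbit/s.
LINE_RATE_GBIT_S = {"PCIe 1.1": 2.5, "PCIe 2.0": 5.0}

# PCIe 1.x and 2.0 both use 8b/10b encoding:
# every 10 bits on the wire carry 8 bits of payload.
ENCODING_EFFICIENCY = 8 / 10


def usable_bandwidth_gb_s(generation: str, lanes: int = 16) -> float:
    """Usable bandwidth in GB/s, per direction, for a link of `lanes` lanes.

    The x16 default is an assumption made for this illustration.
    """
    payload_gbit_per_lane = LINE_RATE_GBIT_S[generation] * ENCODING_EFFICIENCY
    return payload_gbit_per_lane / 8 * lanes  # 8 bits per byte


for generation in LINE_RATE_GBIT_S:
    print(f"{generation} x16: {usable_bandwidth_gb_s(generation):.1f} GB/s per direction")
```

Whether a card this slow ever comes close to saturating either link is a separate question; the sketch only shows where the "twice the throughput" claim comes from.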

Specifications

| Specification | G210 | GT220 | 4670 |
|---|---|---|---|
| Core Clock (MHz) | 650 | 720 | 750 |
| Shader Clock (MHz) | 1547 | 1566 | 750 |
| Memory Size (MB) | 512 | 1024 | 512 |
| Memory Bus (bit) | 64 | 128 | 128 |
| Memory Clock (MHz) | 800 | 1600 | 2000 |
| Memory Type | GDDR2 | DDR3 | GDDR3 |

On paper the GT220 is pretty close to the 4670, but that is just paper. The G210 definitely looks to be heavily bottlenecked by its 64-bit bus and GDDR2.
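The bottleneck is easy to quantify from the specification table. The sketch below assumes the listed memory clocks are effective data rates (that reading is our assumption; the table does not say), and multiplies them by the bus width to get peak theoretical bandwidth.

```python
# (memory bus width in bits, memory clock in MHz) taken from the specification table.
# Assumption: the listed clocks are effective transfer rates, not base clocks.
SPECS = {
    "G210": (64, 800),
    "GT220": (128, 1600),
    "4670": (128, 2000),
}


def memory_bandwidth_gb_s(bus_bits: int, effective_mhz: float) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_bits / 8
    return bytes_per_transfer * effective_mhz / 1000


for card, (bus_bits, clock_mhz) in SPECS.items():
    print(f"{card}: {memory_bandwidth_gb_s(bus_bits, clock_mhz):.1f} GB/s")
```

Under that assumption the G210 ends up with a quarter of the GT220's theoretical bandwidth, since both the bus width and the memory clock are halved.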

Pictures & Impressions

We can see here that Gigabyte has included the octopus-like thing on the box. Gigabyte clearly points out some of the key features of the card. Most prominent is the gold-plated HDMI port. Gigabyte also makes sure that you know this card is 100% compatible with Windows 7 and Windows Vista. Most cards are, but this card also supports DirectX 10.1, so you will be able to get the whole experience.

Gigabyte has chosen to pack in only the bare essentials, most likely in an effort to keep the card cheap. Even so, the box is packed to the brim. Unfortunately the graphics card has no protection besides the anti-static bag. The card will most likely be fine, but we like to see a bit of padding.

The bundle is enough to get you started. If you choose to use the card in a low-profile case, then you will have to swap in the included low-profile back plate. Gigabyte has also included a manual to help out people new to computers, and a driver disc with their software.

The card itself looks pretty plain. It is nice to see a fan, which should help the card run cooler than a passively cooled one. If you choose to use a low-profile case, then the VGA connection is rendered useless. The card should have no issues fitting in a small case, with it being barely longer than the physical PCIe slot.

You can see here that Gigabyte has decided to include a variety of different connectors. You should easily be able to find one compatible with your TV or computer monitor. The HDMI is especially useful because this card could easily be used on an HTPC.

Methodology

To test this card, we did a fresh install of Vista 64-bit and applied all the patches and updates for the OS. We then updated all the motherboard drivers and made sure that we had the latest Catalyst 9.11 and ForceWare 190.62 drivers. We didn’t install any video drivers on the test rig at first; we just installed the basics and then cloned the hard drive using Acronis. That way, when we switch from the ATI GPU to the Nvidia GPU, we can start from a fresh load with no old drivers hanging around to bugger up our benchmark numbers.

We ran each test three times and report the average of those runs here. The one exception to the three-run rule is Stalker: that test is so long that we instead averaged all of its individual tests together. Below is a detailed list of the components used during testing.

| Test Rig | |
|---|---|
| Case | Cooler Master HAF 932 |
| CPU | Intel i7 920 @ 3.7 GHz |
| Motherboard | Intel DX58SO |
| RAM | (3x2GB) DDR3 @ 1482 8-8-8-20 |
| CPU Cooler | D-Tek Fuzion |
| Hard Drives | Corsair P64, Western Digital 750 GB |
| Optical | LiteOn DVDR |
| GPUs Tested | MSI HD 4670 |
| Testing PSU | Corsair HX1000 Watt |
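The three-run averaging described in the methodology can be sketched as follows. The function name and the frame rates in the example are placeholders invented for illustration; they are not results from this review.

```python
from statistics import mean
from typing import Sequence

RUNS_PER_TEST = 3  # each benchmark is run three times


def reported_score(runs: Sequence[float]) -> float:
    """Average the individual runs of one benchmark into the reported figure."""
    if len(runs) != RUNS_PER_TEST:
        raise ValueError(f"expected {RUNS_PER_TEST} runs, got {len(runs)}")
    return mean(runs)


# Placeholder numbers purely for illustration, not measured data.
example_runs = [20.0, 21.0, 22.0]
print(f"Reported average: {reported_score(example_runs):.1f} FPS")
```

Averaging several runs smooths out run-to-run noise, which matters most when the cards being compared are close to each other.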

Synthetic Benchmarks & Games

| Synthetic Benchmarks & Games | |

| 3DMark Vantage | |

| 3DMark 06 | |

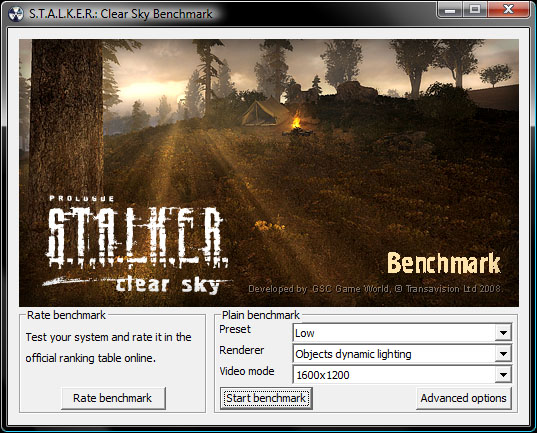

| Stalker Clear Sky Stand Alone Benchmark | |

| Crysis | |

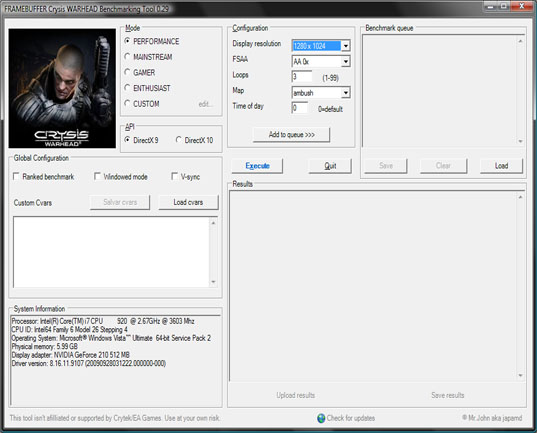

| Crysis Warhead | |

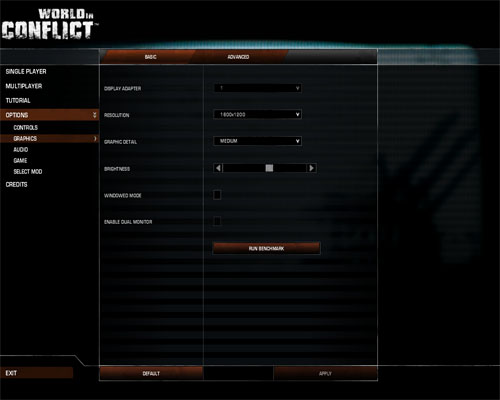

| World In Conflict | |

| H.A.W.K.S. | |

| Far Cry 2 | |

Overclocking

To overclock the Gigabyte G210 I used EVGA Precision. I slowly increased the clocks in 10 MHz steps until the system became unstable, then I backed down the clocks a bit and tested for stability. I kept lowering the clocks until the system was rock solid. Below are my results.

| Core Clock (MHz) | Shader Clock (MHz) | Memory Clock (MHz) |
|---|---|---|
| 700 | 1666 | 525 |

The card itself did not overclock all that well. After just a tiny bump in speed, the card began to artifact, and quickly froze up. Coming into the review, I did not expect a huge overclock from this card because of the target market. If you are looking for more performance then you are much better off grabbing a GT220 or higher.
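The step-up-then-back-off procedure described above can be written down as a small search. This is only a sketch of the idea: `quick_check` and `soak_test` are hypothetical callbacks standing in for "does it run without artifacts" and "does it survive a long stability test", and the thresholds in the usage example are invented so the code can run without hardware.

```python
from typing import Callable


def find_stable_clock(
    stock_mhz: int,
    quick_check: Callable[[int], bool],
    soak_test: Callable[[int], bool],
    step_mhz: int = 10,
    max_mhz: int = 2000,
) -> int:
    """Raise the clock in small steps, then back off until it is rock solid."""
    clock = stock_mhz
    # Phase 1: keep stepping up while the next step still passes the quick check.
    while clock + step_mhz <= max_mhz and quick_check(clock + step_mhz):
        clock += step_mhz
    # Phase 2: back off one step at a time until the long stability test passes.
    while clock > stock_mhz and not soak_test(clock):
        clock -= step_mhz
    return clock


# Hypothetical behaviour for illustration only: short runs survive up to 730 MHz,
# but long stability runs only hold up to 700 MHz.
result = find_stable_clock(
    stock_mhz=650,
    quick_check=lambda mhz: mhz <= 730,
    soak_test=lambda mhz: mhz <= 700,
)
print(result)
```

Separating the quick check from the long test keeps the search short: the expensive stability run only happens on the few candidate clocks near the limit.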

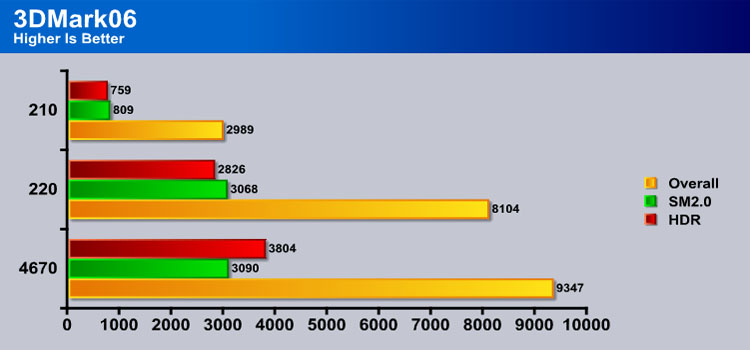

3DMARK06 V. 1.1.0

3DMark06 developed by Futuremark, is a synthetic benchmark used for universal testing of all graphics solutions. 3DMark06 features HDR rendering, complex HDR post processing, dynamic soft shadows for all objects, water shader with HDR refraction, HDR reflection, depth fog and Gerstner wave functions, realistic sky model with cloud blending, and approximately 5.4 million triangles and 8.8 million vertices; to name just a few. The measurement unit “3DMark” is intended to give a normalized mean for comparing different GPU/VPUs. It has been accepted as both a standard and a mandatory benchmark throughout the gaming world for measuring performance.

The G210 is certainly the loser here. The card’s HDR and SM2.0 scores were nowhere near the other cards. The only thing that saved it from a downright terrible score was the CPU.

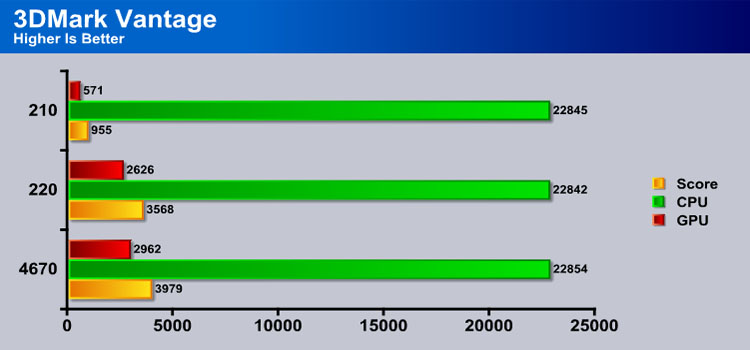

3DMark Vantage

For complete information on 3DMark Vantage, please follow this link:

www.futuremark.com/benchmarks/3dmarkvantage/features/

The newest video benchmark from the gang at Futuremark. This utility is still a synthetic benchmark, but one that more closely reflects real world gaming performance. While it is not a perfect replacement for actual game benchmarks, it has its uses. We tested our cards at the ‘Performance’ setting.

Currently, there is a lot of controversy surrounding NVIDIA’s use of a PhysX driver for its 9800 GTX and GTX 200 series cards, which puts the ATI brand at a disadvantage. With the PhysX driver installed, 3DMark Vantage uses the GPU to perform PhysX calculations during a CPU test, and this is where things get a bit gray. If you look at the Driver Approval Policy for 3DMark Vantage, it states: “Based on the specification and design of the CPU tests, GPU make, type or driver version may not have a significant effect on the results of either of the CPU tests as indicated in Section 7.3 of the 3DMark Vantage specification and white paper.” Did NVIDIA cheat by having the GPU handle the PhysX calculations, or are they perfectly within their rights since they own Ageia and all of its IP? I think this point will quickly become moot once Futuremark releases an update to the test.

Again the G210 is the clear loser. It still has terrible scores, and is nowhere near the GT220. This may turn into a long review.

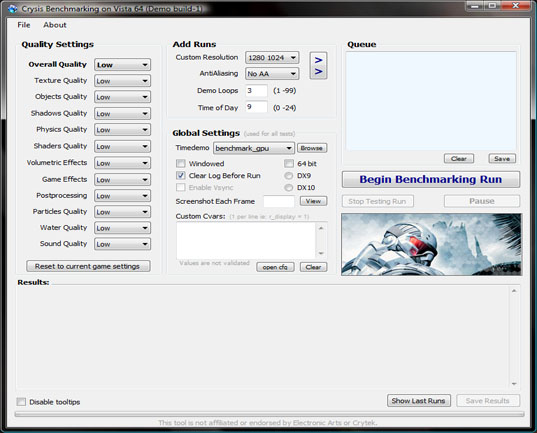

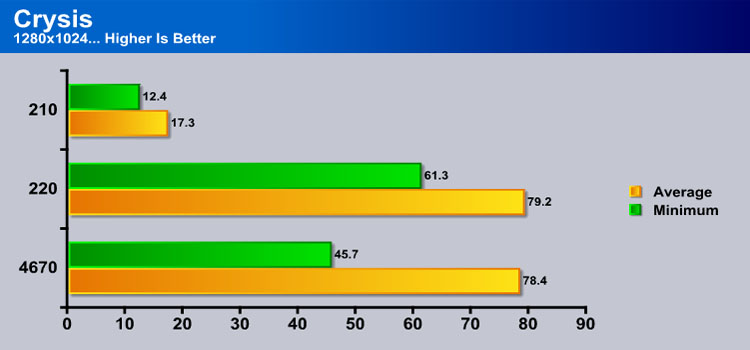

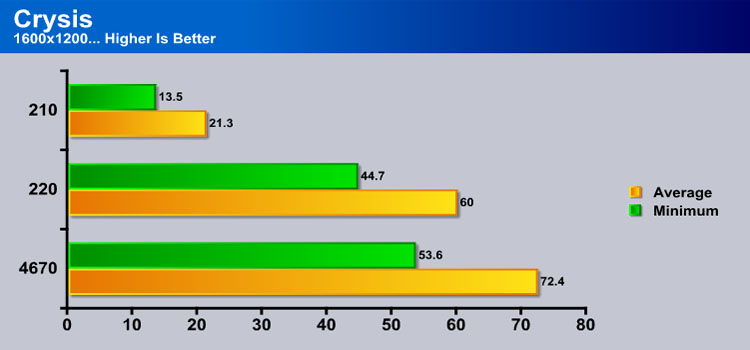

Crysis v. 1.21

Crysis is the most highly anticipated game to hit the market in the last several years. Crysis is based on the CryENGINE™ 2 developed by Crytek. The CryENGINE™ 2 offers real time editing, bump mapping, dynamic lights, network system, integrated physics system, shaders, shadows, and a dynamic music system, just to name a few of the state-of-the-art features that are incorporated into Crysis. As one might expect with this number of features, the game is extremely demanding of system resources, especially the GPU. We expect Crysis to be a primary gaming benchmark for many years to come.

The Settings we use for benchmarking Crysis

When we move into real world testing we see that the G210 doesn’t do any better. The card is still lagging far behind the GT220.

The resolution increase only worsens the end result for the G210. It also hurts the GT220, as it drops behind the 4670.

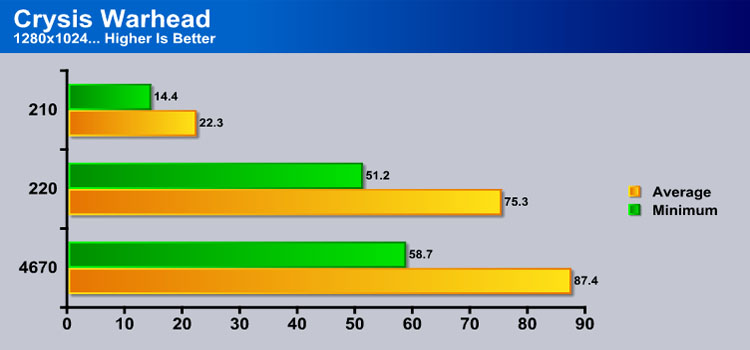

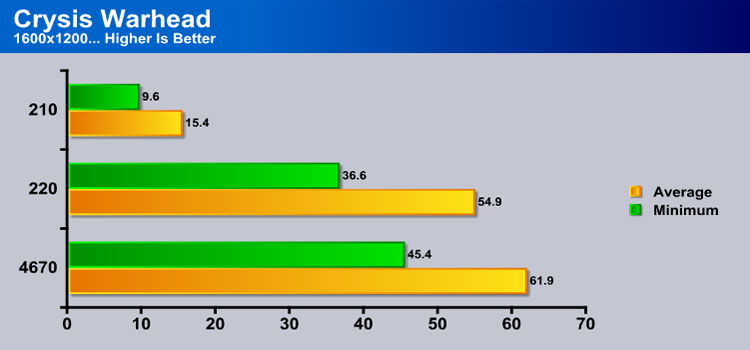

CRYSIS WARHEAD

Crysis Warhead is the much anticipated sequel of Crysis, featuring an updated CryENGINE™ 2 with better optimization. It was one of the most anticipated titles of 2008.

Crysis Warhead is more optimized, but as you can see the G210 is still far from being able to play the game despite low settings being used.

The resolution increase only makes the card look worse. This card is not looking like a gamer friendly card.

Far Cry 2

Far Cry 2, released in October 2008 by Ubisoft, was one of the most anticipated titles of the year. It’s an engaging state-of-the-art First Person Shooter set in an unnamed African country. Caught between two rival factions, you’re sent to take out “The Jackal”. Far Cry 2 ships with a full-featured benchmark utility, and it is one of the most well designed, well thought out game benchmarks we’ve ever seen. One big difference between this benchmark and others is that it leaves the game’s AI (Artificial Intelligence) running while the benchmark is being performed.

The Settings we use for benchmarking FarCry 2

Far Cry 2 does not treat the G210 any better. It also increases the gap between the GT220 and the 4670.

The G210 once again comes up extremely short. The results are pretty sad considering the settings in Far Cry 2 were the absolute minimum.

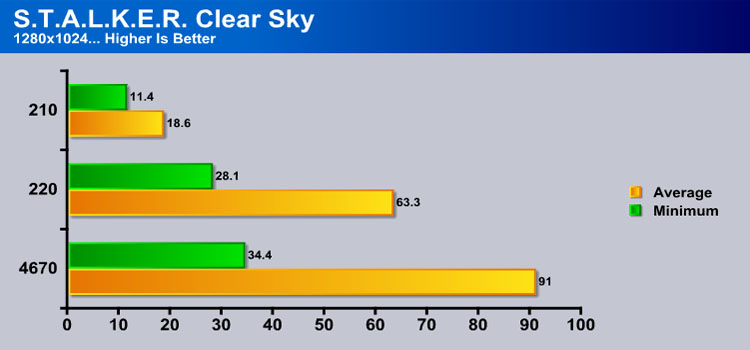

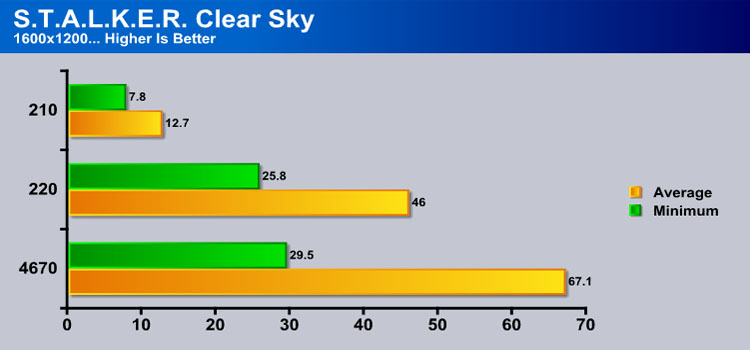

S.T.A.L.K.E.R. Clear Sky

S.T.A.L.K.E.R. Clear Sky is the latest game from the Ukrainian developer, GSC Game World. The game is a prequel to the award-winning S.T.A.L.K.E.R. Shadow of Chernobyl, and expands on the idea of a thinking man’s shooter. There are many ways you can accomplish your mission, but each requires a meticulous plan, and some thinking on your feet if that plan takes a turn for the worse. S.T.A.L.K.E.R. is a game that will challenge you with intelligent AI, and reward you for beating those challenges. Recently GSC Game World has made an automatic tester for the game, making it easier than ever to obtain an accurate benchmark of Clear Sky’s performance.

The G210 comes up short here as well. As before, the card cannot play the game at a smooth frame rate despite the extremely low settings.

The G210’s performance drops once again. Up to this point, the G210 has been showing that it cannot play any recent games, even with low settings, which is a bit of a disappointment.

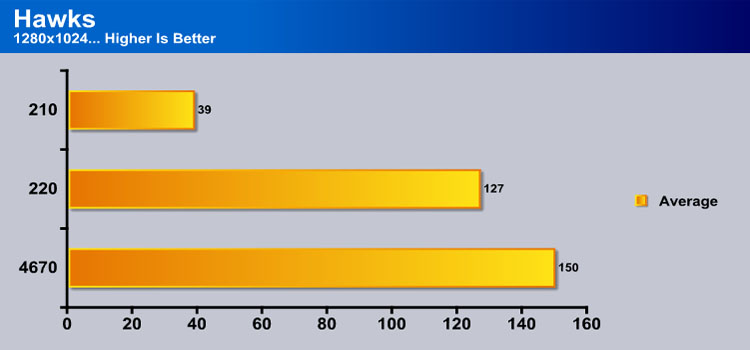

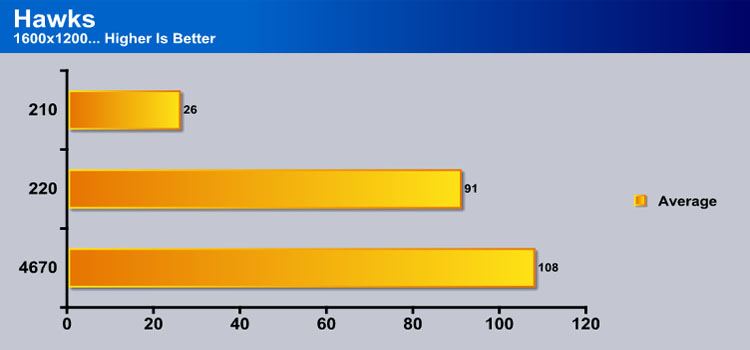

HawX

The story begins in the year 2012. As the era of the nation-state draws quickly to a close, the rules of warfare evolve even more rapidly. More and more nations become increasingly dependent on private military companies (PMCs), elite mercenaries with a lax view of the law. The Reykjavik Accords further legitimize their existence by authorizing their right to serve in every aspect of military operations. While the benefits of such PMCs are apparent, growing concerns surrounding giving them too much power begin to mount.

Tom Clancy’s HAWX is the first air combat game set in the world-renowned Tom Clancy’s video game universe. Cutting-edge technology, devastating firepower, and intense dogfights bestow this new title a deserving place in the prestigious Tom Clancy franchise. Soon, flying at Mach 3 becomes a right, not a privilege.

Finally a game that the G210 can actually play. While it may be lagging behind the competition, it is still able to obtain a respectable average of 39 FPS.

When the resolution is increased we see that the G210 falls a bit, but is almost in the playable FPS range.

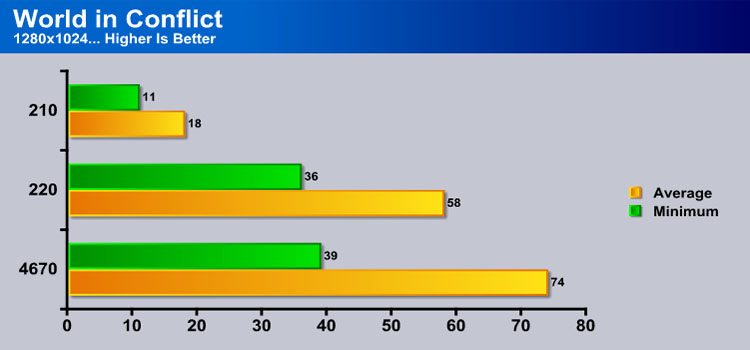

World in Conflict Demo

World in Conflict is a real-time tactical video game developed by the Swedish video game company Massive Entertainment, and published by Sierra Entertainment for Windows PC. The game was released in September of 2007. The game is set in 1989 during the social, political, and economic collapse of the Soviet Union. However, the title postulates an alternate history scenario where the Soviet Union pursued a course of war to remain in power. World in Conflict has superb graphics, is extremely GPU intensive, and has built-in benchmarks. Sounds like benchmark material to us!

Here we are in the same hole we were in before. The G210 is once again unable to reach a playable FPS range.

When the resolution is increased, the card loses a little bit of FPS, but not a whole lot. This has certainly been a long ride.

Temperatures

The Gigabyte G210 certainly looked like it was going to run cool with its fan, and in the end it ran very cool. I let the fan run at auto speed, which usually gives the sweet spot between noise and performance. Throughout testing the fan was very hard to hear over my case fans. To test the load temperature I loaded up FurMark 1.7, and let it run for 30 minutes. I then recorded the maximum temperature reached. To test the idle temperature I let the card idle with no programs running. Below is a table of the results.

| Idle Temperature (°C) | Load Temperature (°C) |
|---|---|
| 36 | 54 |

Keep in mind that these temperatures were obtained in a full tower case with a fan blowing directly on the graphics card. If you are using the card in a low-profile case, then your temperatures may be higher.
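For anyone who wants to repeat the load test, the "record the maximum over the run" step can be sketched as a simple polling loop. The `read_temp_c` callback is a hypothetical stand-in for whatever monitoring tool you use to read the GPU sensor, and the readings in the example are fake values chosen only to exercise the code.

```python
import time
from typing import Callable


def max_temperature(
    read_temp_c: Callable[[], float],
    duration_s: float,
    interval_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> float:
    """Poll a temperature sensor for `duration_s` seconds and return the peak."""
    peak = read_temp_c()
    elapsed = 0.0
    while elapsed < duration_s:
        sleep(interval_s)
        elapsed += interval_s
        peak = max(peak, read_temp_c())
    return peak


# Fake sensor readings for illustration; a real run would poll for 30 minutes.
fake_readings = iter([36, 48, 54, 53])
peak = max_temperature(
    read_temp_c=lambda: next(fake_readings),
    duration_s=3,
    interval_s=1,
    sleep=lambda seconds: None,  # skip real waiting in this demo
)
print(f"Peak temperature: {peak} °C")
```

Passing the sensor reader and the sleep function in as arguments keeps the loop independent of any particular monitoring utility.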

Conclusion

The Gigabyte G210 certainly does not try to be what it’s not: a gaming card. The card itself has its benefits for an HTPC. These include the HDMI port, and the ability to take some of the load away from the CPU when watching video. This will help if your HTPC is running a low-end CPU and you plan on watching high definition content. The card can potentially be used to play games, but they will have to be older games because this card just does not have the power to play the latest ones.

Now it really would be wrong to consider this card a gaming card, but it is competing with the 9400 GT, which has much more memory bandwidth. The 9400 GT also happens to fall in the same price bracket. This really puts a damper on this card, since the G210 does not add much more than the 9400 GT does. This makes the card hard to recommend.

We are using this scale with our scoring system to provide additional feedback beyond a flat score. Please note that the final score isn’t an aggregate average of the rating system.

- Performance 6

- Value 6

- Quality 8

- Warranty 8

- Features 7

- Innovation 6

Pros:

+ Good Cooler

+ Low Profile

Cons:

– Performance Is Low

The Gigabyte G210 is an OK card, but in the end it is a bit overpriced, and inferior to the 9400 GT. This is why the card received a 6 out of 10.
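As a quick check on the note that the final score is not an aggregate average of the rating categories, the arithmetic works out as follows. The category scores and the final score are the ones listed above; nothing else is assumed.

```python
from statistics import mean

# Category scores as listed in the rating breakdown.
SUBSCORES = {
    "Performance": 6,
    "Value": 6,
    "Quality": 8,
    "Warranty": 8,
    "Features": 7,
    "Innovation": 6,
}
FINAL_SCORE = 6  # the overall score given in the verdict

average = mean(SUBSCORES.values())
print(f"Category average: {average:.2f}, final score: {FINAL_SCORE}")
```

The plain average comes out noticeably higher than the final score, which fits with the verdict leaning on performance and value rather than weighting every category equally.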

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996

