The AMD HD6950 and HD6970 are the updates to the popular HD5850 and HD5870. Can AMD repeat that success with these two cards?

INTRODUCTION

A few months ago AMD caused quite a bit of confusion when it released the HD6870 and HD6850, two GPUs which, contrary to what their names suggested, did not replace the HD5870 and HD5850. The reason was that AMD wanted to rearrange its naming scheme, using HD69x0 for enthusiast/high-end GPUs like the HD6990, HD6970 and HD6950, while leaving HD68x0 for the performance cards.

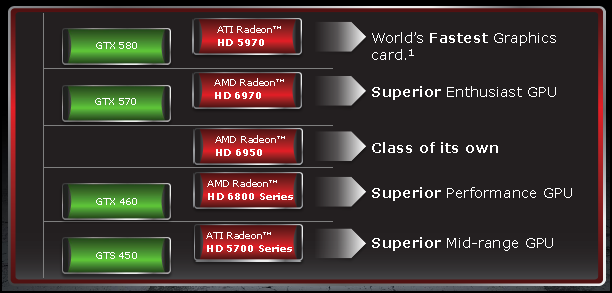

Today AMD has finally released two of the HD69x0 cards: the HD6970 and the HD6950, replacing the HD5870 and HD5850 respectively. The following image illustrates how AMD sees these two GPUs:

As we can see, the HD6970 goes up against the GTX570, while the HD6950 is positioned somewhere between the GTX460 and the GTX570. This is also reflected in the price. The recommended price for the HD6970 is $369-399 depending on configuration (€280-300), and $299-329 depending on configuration for the HD6950 (€230-260). This places the HD6970 just above the GTX570, while the HD6950 sits just below it. It does, however, seem as though the first cards in stores will carry a premium. For example, the Sapphire HD6950 currently costs around $300, while the HD6970 costs around $370.

But enough about prices. Let’s look at what makes these GPUs tick.

THE SPECIFICATIONS

| Card | Radeon HD 6950 | Radeon HD 6970 | GeForce GTX 470 | GeForce GTX 480 | GeForce GTX 570 | GeForce GTX 580 |
|---|---|---|---|---|---|---|
| Die Size (mm²) | 389 | 389 | 529 | 529 | 520 | 520 |
| Shader units | 1408 | 1536 | 448 | 480 | 480 | 512 |
| Texture Units | 88 | 96 | 56 | 60 | 60 | 64 |
| ROPs | 32 | 32 | 40 | 48 | 40 | 48 |
| GPU | Cayman | Cayman | GF100 | GF100 | GF110 | GF110 |
| Transistors | 2640M | 2640M | 3000M | 3000M | 3000M | 3000M |
| Memory Size | 2048MB | 2048MB | 1280MB | 1536MB | 1280MB | 1536MB |
| Memory Bus Width | 256 bit | 256 bit | 320 bit | 384 bit | 320 bit | 384 bit |
| Core Clock | 800 MHz | 880 MHz | 607 MHz | 700 MHz | 732 MHz | 772 MHz |
| Shader Clock | 800 MHz | 880 MHz | 1,215 MHz | 1,401 MHz | 1,464 MHz | 1,544 MHz |
| Memory Clock | 1250 MHz | 1375 MHz | 837 MHz | 924 MHz | 950 MHz | 1,002 MHz |
| Price | $300 | $369.99 | $279 | $449 | $349 | $509 |
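The memory bus width and memory clock in the specification table can be turned into peak memory bandwidth. The sketch below assumes GDDR5, which performs four data transfers per pin for each quoted memory clock (so 1250 MHz and 1375 MHz correspond to 5.0 and 5.5 Gbps effective). The helper name is our own, and the result is a theoretical peak, not a measured figure.

```python
def gddr5_bandwidth_gbs(bus_width_bits: int, memory_clock_mhz: float) -> float:
    """Theoretical peak bandwidth in GB/s for a GDDR5 card.

    Assumes GDDR5's four data transfers per pin per quoted memory clock.
    """
    transfers_per_second = memory_clock_mhz * 1e6 * 4  # effective data rate per pin
    bytes_per_transfer = bus_width_bits / 8            # whole bus width in bytes
    return transfers_per_second * bytes_per_transfer / 1e9

# Bus widths and memory clocks taken from the specification table.
cards = {
    "Radeon HD 6950": (256, 1250),
    "Radeon HD 6970": (256, 1375),
}

for name, (bus, clock) in cards.items():
    print(f"{name}: {gddr5_bandwidth_gbs(bus, clock):.1f} GB/s")
```

With the same 256-bit bus on both cards, the 10% higher memory clock of the HD6970 translates directly into 10% more theoretical bandwidth (160 GB/s versus 176 GB/s).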

The HD6970 and HD6950 use the Cayman GPU. They are both built with the 40nm process, and the main difference between the two is that the HD6970 has slightly more stream processors and texture units than the HD6950, and it is also clocked slightly higher.
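To put “more stream processors” and “clocked higher” into numbers, a common back-of-the-envelope figure is peak single-precision throughput: stream processors × core clock × 2, counting a multiply-add as two floating-point operations. This is a theoretical ceiling rather than a performance prediction, and the function name below is our own.

```python
def peak_sp_gflops(stream_processors: int, core_clock_mhz: int) -> float:
    # Each stream processor can issue one multiply-add (2 FLOPs) per clock.
    return stream_processors * core_clock_mhz * 2 / 1000

hd6950 = peak_sp_gflops(1408, 800)   # shader count and clock from the table
hd6970 = peak_sp_gflops(1536, 880)

print(f"HD 6950: {hd6950:.0f} GFLOPS")
print(f"HD 6970: {hd6970:.0f} GFLOPS")
print(f"HD 6970 / HD 6950: {hd6970 / hd6950:.2f}x")
```

The two differences compound: about 9% more stream processors (1536/1408) and 10% more clock (880/800) give the HD6970 roughly 20% more theoretical shader throughput than the HD6950.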

While the GPU in the HD6850 and HD6870 was a modest optimization of the previous generation, AMD has made some bigger changes with the HD69x0 GPUs.

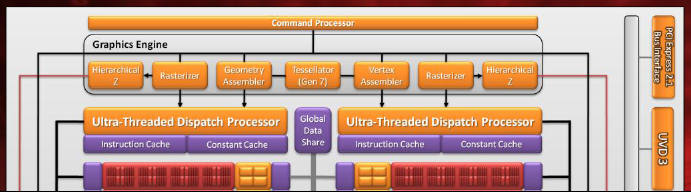

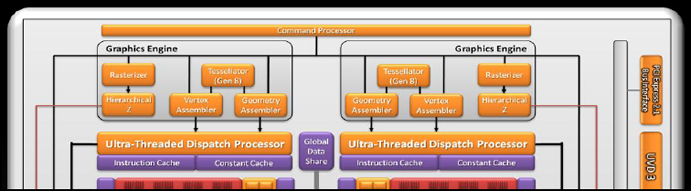

At first glance the core looks much the same as on the HD6800, but a closer look reveals some differences.

AMD HD6800:

AMD HD6900:

AMD calls this a “dual graphics engine,” and it is tempting to think of it as a kind of multi-core GPU. Each graphics engine has its own tessellator, geometry assembler and vertex assembler.

The biggest change, though, is that the core has been redesigned to use a VLIW4 design instead of the previous VLIW5 design. Let’s see what AMD says about the changes.

The VLIW5 design used four simple SIMD units and one complex t-unit (transcendental unit) to build a stream processing unit, while the VLIW4 configuration uses four stream units with equal capabilities, two of which are assigned special functions. The important thing for end users is that AMD claims about 10% more performance per mm².
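Why would a narrower unit help? A VLIW unit only does useful work in the slots the shader compiler manages to fill with independent operations. The toy model below is our own simplification, not AMD data: it assumes the compiler finds a fixed number of independent operations per bundle and reports what fraction of the slots is busy in a 5-wide versus a 4-wide unit.

```python
def vliw_slot_utilization(ops_per_bundle: int, width: int) -> float:
    """Fraction of a VLIW unit's slots doing useful work in a toy model.

    Hypothetical assumption: the compiler finds `ops_per_bundle` independent
    operations per cycle; at most `width` of them can be issued, so any extra
    operations wait for a later bundle.
    """
    return min(ops_per_bundle, width) / width

for ops in range(2, 6):
    vliw5 = vliw_slot_utilization(ops, 5)
    vliw4 = vliw_slot_utilization(ops, 4)
    print(f"{ops} independent ops: VLIW5 {vliw5:.0%} busy, VLIW4 {vliw4:.0%} busy")
```

Whenever the compiler can only find four or fewer independent operations, the 4-wide unit leaves fewer idle slots, which is the intuition behind a performance-per-area gain; how large that gain is in practice depends on the actual shader mix.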

As the slides show, there have been a few other updates to the GPU.

CLOSER LOOK

The reference cards look just like the HD6870 and HD6850. The black and red color scheme looks quite nice, and the card is cooled by a vapor chamber cooler with a nice, quiet fan.

One interesting feature of these cards is that they actually have two BIOS chips installed. A switch lets users choose between BIOS 1 and BIOS 2. One of them cannot be overwritten, providing a failsafe.

Just as on the HD6850 and HD6870, this card comes with two mini-DisplayPorts, one HDMI port and two DVI ports (one single-link and one dual-link).

FEATURES – EQAA

Overall, the HD6970 and HD6950 have the same features as the HD6850 and HD6870, which we wrote about in our analysis. These include HDMI 1.4, DisplayPort 1.2, better geometry performance and new anti-aliasing modes like Morphological Anti-Aliasing.

There are a few new features compared to the HD68x0 GPUs, though.

Enhanced Quality Anti-Aliasing

EQAA is a set of new modes that can offer up to 16 coverage samples per pixel. It offers custom sample patterns and filters, and is compatible with Adaptive AA, Super-Sample AA and Morphological AA.
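The idea behind coverage-based modes like EQAA is that a pixel can store more coverage samples than color samples: each coverage sample only records which stored color covers it, and the resolve step weights each color by how many coverage samples point at it. The sketch below is a simplified box-filter model of that idea with made-up sample data; AMD's actual sample patterns and custom filters are not modeled.

```python
from collections import Counter

Color = tuple[float, float, float]

def resolve_pixel(colors: list[Color], coverage: list[int]) -> Color:
    """Resolve one pixel from stored colors and coverage samples.

    `coverage` holds, for each coverage sample, the index of the stored color
    that covers it. Each color is weighted by its share of coverage samples
    (a simple box filter; real EQAA filters may differ).
    """
    counts = Counter(coverage)
    total = len(coverage)
    return tuple(
        sum(colors[i][channel] * n for i, n in counts.items()) / total
        for channel in range(3)
    )

# Hypothetical edge pixel: 2 stored colors, 16 coverage samples.
red, blue = (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)
coverage = [0] * 12 + [1] * 4  # 12 samples covered by red, 4 by blue
print(resolve_pixel([red, blue], coverage))  # → (0.75, 0.0, 0.25)
```

Because only a small index is stored per coverage sample, 16 coverage samples can give finer edge gradations than the stored colors alone would, at a lower memory cost than 16 full color samples.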

AMD claims that users won’t lose much performance, if any, by using this mode, and that it will improve image quality. We plan to follow this up in a later article that goes through the various image quality settings to see what they do. In light of Nvidia’s latest rant about AMD and image quality, it should be interesting.

As with all IQ settings, users can decide what they want to enable or disable in the Catalyst Control Center.

FEATURES – POWERTUNE

One of the new features is PowerTune, which is, to some extent, an evolution of the PowerPlay feature on older GPUs, where the GPU switched between different power states depending on the workload. Traditionally, this has meant that when the GPU is in its highest state, the clockspeed and voltage are fixed.

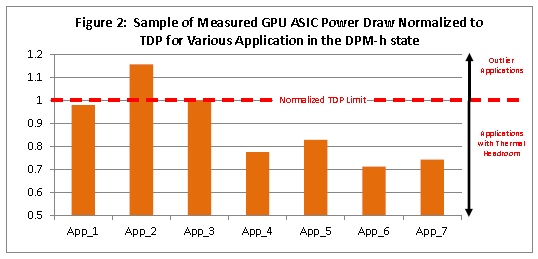

When a graphics card is designed, AMD gives system builders a TDP (Thermal Design Power) value indicating the maximum power draw for reliable operation. This figure is derived from a “worst-case scenario” in which the clockspeed and voltage are maxed out and the GPU runs a 100% workload for several minutes.

Since the TDP figure is derived from a “worst-case scenario”, it rarely reflects real use: a few unusually heavy workloads can reach the limit, but most applications leave a lot of headroom below it.

The Catalyst Control Center allows us to set PowerTune to -20%, -10%, 0, +10% and +20%. So what does that mean? Well, we’ve had some long discussions on the site about how PowerTune actually works, as it can be quite confusing to understand.

Our interpretation is that when PowerTune is set to 0, the TDP limit for the HD6970 is 250W. If the card is fully loaded and draws more than 250W, the clockspeed and voltage are decreased to keep the card within its TDP. This is what we see when we run FurMark.

GPU core clock is fluctuating on the HD6950 when running FurMark with PowerTune set to 0.

If we then set PowerTune to +10% or +20%, we effectively raise the TDP limit from 250W to 275W or 300W. When we run FurMark with PowerTune set to +10%, the clockspeed is no longer reduced. In our case this means the card no longer hits the TDP limit, so there is no need to throttle it.

So how does that affect us? In the past, AMD had to ship cards at a clockspeed it knew would not break the TDP limit, even when users were running “worst-case” applications like FurMark. With PowerTune, AMD can ship cards with a higher clockspeed, knowing that in the rare cases where an application loads the card enough to reach the TDP, the card will be throttled down to stay within its power envelope. Applications and games that do not approach the TDP limit can take advantage of the higher clockspeed. In effect, this is a kind of factory overclocking. Being able to raise the TDP limit should also give us a bit more headroom for proper overclocking, as we no longer risk pushing the card into its TDP limit and getting throttled.

If we want to keep power consumption to a minimum, we can also set PowerTune to -10% or -20%, which would downclock the GPU and memory, as the TDP limit is lowered. This would be more useful on a laptop chip than on a desktop card.
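Our interpretation of PowerTune can be written down as a small model. The sketch below assumes, purely for illustration, that power draw scales linearly with core clock for a given workload (in reality voltage and other factors matter too, and this is not AMD's actual algorithm). The 250W limit comes from our interpretation above and the 880 MHz clock from the specification table; the 265W FurMark draw is a hypothetical number.

```python
def power_limit(base_tdp_w: float, powertune_pct: int) -> float:
    """Power limit for a PowerTune setting of -20, -10, 0, +10 or +20 percent."""
    return base_tdp_w * (100 + powertune_pct) / 100

def throttled_clock(max_clock_mhz: float, power_at_max_w: float, limit_w: float) -> float:
    """Highest core clock that keeps the (linearly scaled) power under the limit.

    Simplifying assumption: power is proportional to core clock for a fixed workload.
    """
    if power_at_max_w <= limit_w:
        return max_clock_mhz  # under the limit: no throttling needed
    return max_clock_mhz * limit_w / power_at_max_w

BASE_TDP_W = 250     # HD6970 limit at PowerTune 0, per our interpretation
MAX_CLOCK_MHZ = 880  # HD6970 core clock from the specification table
FURMARK_W = 265      # hypothetical draw of a FurMark-like load at full clock

for setting in (-20, -10, 0, 10, 20):
    limit = power_limit(BASE_TDP_W, setting)
    clock = throttled_clock(MAX_CLOCK_MHZ, FURMARK_W, limit)
    print(f"PowerTune {setting:+d}%: limit {limit:.0f} W, core clock {clock:.0f} MHz")
```

In this model the hypothetical load is throttled at 0, runs at full clock once +10% raises the limit to 275W, and is throttled harder at the negative settings, which matches the behaviour we described for FurMark.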

PERFORMANCE

We tested the card on the following system:

- AMD Phenom II X6 1090T @ 3.2 GHz

- Noctua NH-C12 Cooler

- 4 GB OCZ Black Edition DDR3 @ 1600 MHz

- ASUS Crosshair IV Formula

- 300 GB WD Raptor (System Disk)

- 1 TB Samsung F2 (Storage Disk)

- Thermaltake 1200W PSU

- Dell 24” monitor with a maximum resolution of 1920×1200

- Windows 7 Pro 64-bit

Unfortunately, we ran into an issue with the HD6970. The card performed far below the HD6950 and HD6850, and nothing we tried fixed it. Because of these inexplicable results across the board, we have excluded its scores from our results section. We hope to get hold of a replacement card shortly. We also could not get hold of a GTX570 in time for this article, as they are in very short supply at the moment; we hope to rectify that soon too. Our review of the Sapphire HD6950 does include some GTX570 and GTX580 scores.

3DMark 11

The latest version of 3DMark was released just a few weeks ago, and is sure to stress today’s cards to the limit. More information about the benchmark can be found at http://www.futuremark.com.

The HD6950 easily keeps all the other cards behind it. It also scales well in CrossFireX.
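When we say a setup “scales well”, we mean the second card adds close to 100% more performance. One simple way to express this is scaling efficiency: the dual-card score divided by twice the single-card score. The function below is a minimal sketch of that calculation; the scores in the usage line are made-up placeholders, not our 3DMark results.

```python
def crossfire_scaling(single_score: float, dual_score: float) -> tuple[float, float]:
    """Return (speedup, efficiency) for a two-card CrossFireX setup.

    speedup    = dual / single   (2.0 would be perfect scaling)
    efficiency = speedup / 2     (1.0 would be perfect scaling)
    """
    speedup = dual_score / single_score
    return speedup, speedup / 2

# Placeholder scores for illustration only.
speedup, efficiency = crossfire_scaling(single_score=4000, dual_score=7200)
print(f"speedup {speedup:.2f}x, efficiency {efficiency:.0%}")
```

The same calculation works for frame rates, as long as the single- and dual-card numbers come from the same settings and resolution.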

As we turn up the settings (this test is run at 1920×1080), the performance of all cards drops, but the HD6950 is still the best of the pack. Again, it scales well in CrossFireX.

3DMark Vantage

Since 3DMark Vantage tests DX10 performance (DX10 is still used in some games) and 3DMark 11 tests DX11 performance, both remain relevant for us to use. Again, the HD6950 performs very well compared to the other cards.

Unigine Heaven 2.1

Unigine Heaven is a benchmark program based on Unigine Corp’s latest engine, Unigine. The engine features DirectX 11, hardware tessellation, DirectCompute, and Shader Model 5.0. All of these new technologies, combined with the ability to run each card through the same exact test, means that this benchmark is an essential component of our arsenal.

Settings used:

* Shaders: high

* Tessellation: normal

* Anti-aliasing: 4x

* Anisotropy: 16x


The HD6950 is a big improvement over the HD5870 and HD5850. The improved tessellation engine is likely partly responsible for the improved performance. Again we see impressive scaling with two HD6950s in CrossFireX, which hints at the potential performance of the HD6990.

CRYSIS: WARHEAD

Crysis Warhead is the standalone expansion pack to Crysis, featuring an updated CryENGINE™ 2 with better optimization. It was one of the most anticipated titles of 2008.

Settings: “Gamer”, DX10, 4xAA

It is interesting to see that the Nvidia cards reach up to 61 FPS, while the AMD cards seem to be stuck at around 57 FPS. Even a single HD6950 appears to be nearly CPU-limited at resolutions up to 1920×1200.

Just Cause 2

Settings: Texture Detail: High, Shadow Quality: High, 4xAA, 16xAF, Water: Very High, Objects: Very High, Decals: On, Soft Particles: On, V-Sync: Off, High-res Shadows: On, SSAO: High, Point Light Specular: On

As we move up in resolution, the HD6950 manages to hold off the other cards, including the HD5870. There isn’t a huge difference between them, but considering that the HD6950 replaces the HD5850 rather than the HD5870, the result is quite good. Once again, we see great scaling with two HD6950s in CrossFire.

In this demo the HD6950 actually loses to the HD5870 at 1920×1200, although the margin is very small.

Alien versus Predator

This benchmark is based on Rebellion’s game Alien versus Predator, released in 2010. It uses DirectX 11, and the DX11 features it uses include tessellation.

In a game that uses more DX11 features (like tessellation), the HD6950 again easily stays ahead of the other cards, including the HD5870. Again, we are impressed by the great scaling in CrossFireX.

CONCLUSION

It is unfortunate that we could not test the HD6970 or a GTX570 for this article. However, we know from other reviews that the GTX570 is a bit faster than the HD6950, which suggests that the HD6970 could be as fast as, or faster than, the GTX570. We hope to verify that soon.

The HD6950 and HD6970 are great updates to the HD5850 and HD5870. The redesigned core gives us a nice improvement in tessellation performance, and we really like the idea of PowerTune, as it gives users a lot more control over how their cards perform. We are also very impressed with the CrossFire scaling of the HD6950. Coupled with the improved Eyefinity, 7.1 audio support, HDMI 1.4 and DisplayPort 1.2, these cards should easily serve well for the next year or so.

When the HD6850 and HD6870 were released, vendors used the fact that users were waiting for new AMD cards to price them above what AMD had originally intended. This time the prices look much more in line with AMD’s SEP (suggested e-tail price). At $300, the HD6950 is still a tad expensive, as the GTX570 costs only around $30 more. For those who value the features of AMD’s card, though, it is still a good price. The HD6970, on the other hand, costs around $370, which makes us wonder whether it might be better value to spend a little extra and get the HD6970 instead of the HD6950. Both seem to offer good value.

We hope to bring you more benchmarks soon, including results for the HD6970 and the GTX570 as well as more retail card reviews. We also have a review of the retail HD6950: the Sapphire HD6950.

Bjorn3D.com Bjorn3d.com – Satisfying Your Daily Tech Cravings Since 1996
